Understand the difference between CSAT, NPS, and CES so you can pick the right metric, assign the right owner, and collect feedback that actually gets acted on.

We’ve noticed a common theme across all the startups and SMBs we’ve served over the past decade.

Whenever the team is deciding on a customer service benchmark, they debate NPS vs. CSAT vs. CES. Inevitably, these teams land on "let's use all three." Six months later, the dashboards exist. Nobody knows what to do when they conflict.

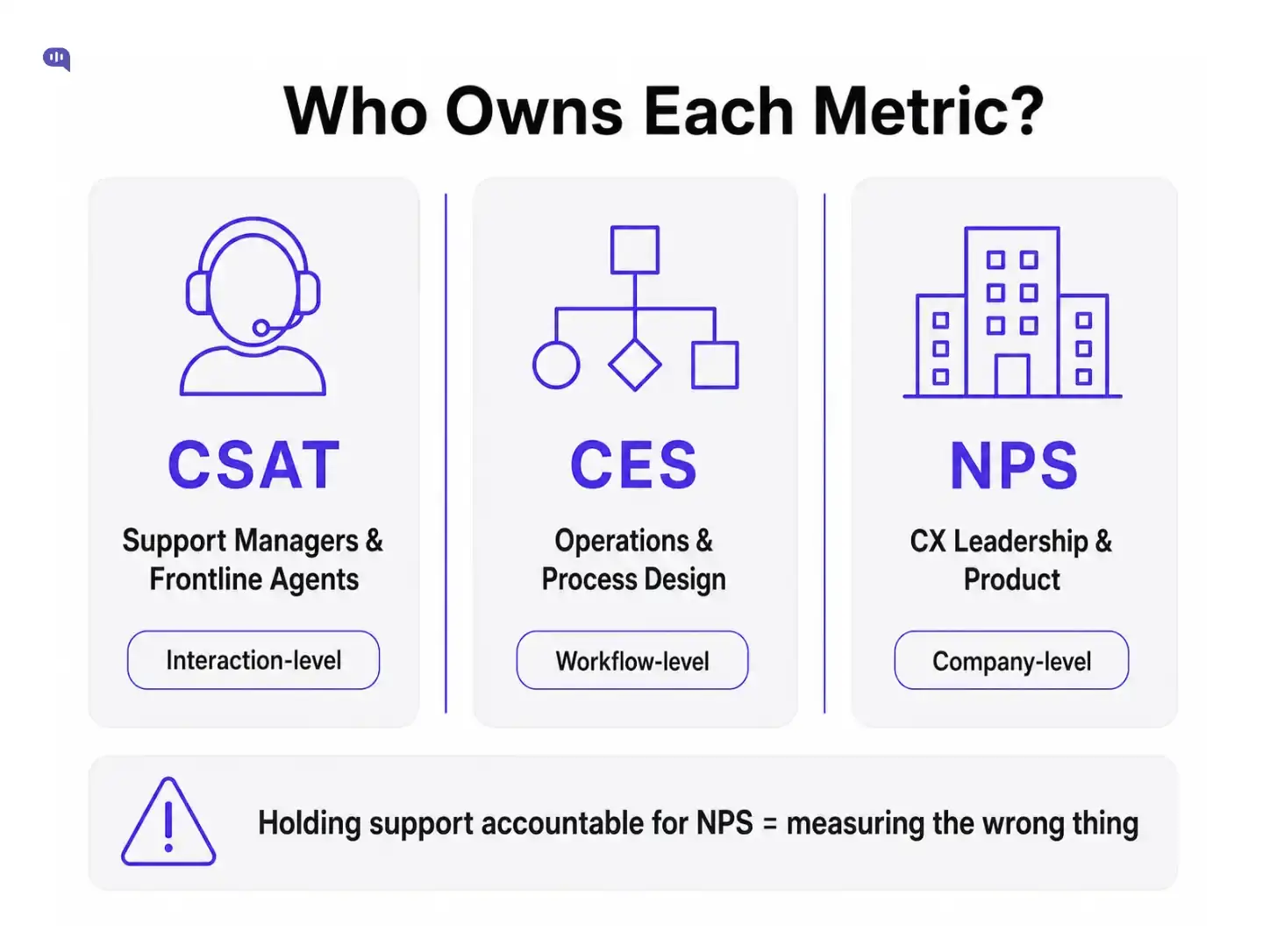

Practically, these debates are not useful. CSAT, NPS, and CES aren't competing metrics.

They're answering fundamentally different questions, on different timelines, for different parts of the business.

Picking the right metric isn't about philosophy; it's about who can influence it through their day-to-day work.

Each of these three surveys asks customers something distinct, and the difference in the question is the difference in what you learn.

CSAT asks: "How satisfied were you with your experience today?" Customers rate on a 1–5 or 1–10 scale.

It's a snapshot of how a specific interaction landed. It’s directly tied to a moment. CSAT scores are high-frequency and perishable. That volatility isn't a flaw. It's the signal.

NPS asks: "How likely are you to recommend us to a friend or colleague?"

Customers score 0–10. Promoters (9–10), Passives (7–8), Detractors (0–6). NPS = % Promoters − % Detractors.
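To make the arithmetic concrete, here is a minimal Python sketch of the NPS formula above. The function name `net_promoter_score` and the sample responses are illustrative assumptions, not part of any survey tool's API.

```python
from typing import Iterable


def net_promoter_score(scores: Iterable[int]) -> float:
    """Return NPS (from -100 to 100) for a set of 0-10 survey responses."""
    responses = list(scores)
    if not responses:
        raise ValueError("need at least one response")
    if any(s < 0 or s > 10 for s in responses):
        raise ValueError("scores must be between 0 and 10")

    promoters = sum(1 for s in responses if s >= 9)   # scores of 9-10
    detractors = sum(1 for s in responses if s <= 6)  # scores of 0-6
    # Passives (7-8) count toward the total but toward neither group.
    return 100.0 * (promoters - detractors) / len(responses)


# Hypothetical sample: 5 promoters, 2 passives, 3 detractors.
sample = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
print(net_promoter_score(sample))  # 50% promoters - 30% detractors = 20.0
```

Note that passives still matter: they enlarge the denominator, so a wave of 7s and 8s pulls the score toward zero without adding a single detractor.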

It sounds simple, but what NPS actually captures is the cumulative weight of a customer's entire relationship with your brand.

It should be the north star for the entire company.

CES asks: "How easy was it to resolve your issue today?" Customers rate on a 1–7 scale. CES was introduced in 2010 by CEB (the Corporate Executive Board, since acquired by Gartner), and its core finding was counterintuitive: exceeding expectations doesn't build loyalty nearly as much as reducing effort does.

Customers who had to work hard to get help are far more likely to churn, regardless of whether the issue eventually got resolved.

Now that we understand the difference between the metrics, let’s talk about who owns which metric.

CSAT is agent-attributed, interaction-level, and closable in a single conversation. When a CSAT score drops, a support manager can address it: review the transcript, coach the agent, and identify the pattern. This is the only metric in the trio where frontline ownership makes complete sense.

A high-effort score means the process failed the customer:

Those are structural problems. Assigning CES accountability to agents is like measuring a surgeon's performance by the length of the hospital's check-in line.

NPS reflects the sum of every experience a customer has had with your company. If NPS is low, it might be because support is slow, but it's equally likely to be due to pricing, a recent product regression, a poor onboarding experience, or a competitor eating your lunch.

Support can influence NPS. Support cannot own NPS. Holding a support team accountable for a score they have 30% control over is how you build a metric that everyone resents.

We've seen this play out consistently: the teams that get the most value from these metrics are the ones who've answered "who is empowered to act on this number?" before they start collecting it.

When it comes to collection, the survey trigger for each metric also influences how useful the data is.

Timing is as important as the question itself. A well-timed survey gets honest data. A poorly timed one poisons the well.

Trigger transactional surveys (CSAT and CES) immediately after the interaction closes, while the experience is fresh.

Waiting 24 hours for transactional feedback is waiting too long; customers have moved on, and the rating becomes ambient sentiment, not interaction recall.

An NPS survey should never fire immediately after a support contact. Sending NPS right after a customer just waited 45 minutes on hold is asking them to rate the relationship at its lowest point.

NPS cadences vary by business type:

The goal is to catch customers in a neutral or positive state so the score reflects the relationship.
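The timing rules above can be sketched as two small checks in Python. This is a rough illustration under stated assumptions: the function names are hypothetical, and the 7-day quiet period is a placeholder, since the right gap depends on your business.

```python
from datetime import datetime, timedelta
from typing import Optional

# Assumption: 7 days is a placeholder, not a recommended value.
NPS_QUIET_PERIOD = timedelta(days=7)


def transactional_send_time(closed_at: datetime) -> datetime:
    """CSAT and CES surveys go out as soon as the interaction closes."""
    return closed_at


def can_send_nps(
    now: datetime,
    last_support_contact: Optional[datetime],
    quiet_period: timedelta = NPS_QUIET_PERIOD,
) -> bool:
    """Hold NPS back while a support contact is still recent."""
    if last_support_contact is None:
        return True  # no recent contact to colour the relationship score
    return now - last_support_contact >= quiet_period


now = datetime(2024, 1, 10, 12, 0)
print(can_send_nps(now, now - timedelta(hours=1)))  # False: too soon after contact
print(can_send_nps(now, now - timedelta(days=8)))   # True: quiet period has passed
```

Keeping the quiet period as a parameter lets each business tune the gap without changing the rule itself.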

Now, let's return to our starting question: which metric should your team use?

We've built a Metric Chooser you can use below. Answer three questions, and it tells you which metric to start with, who should own it, and what question to put in the survey.

If you want to map it manually, here's the logic:

Once you’ve chosen the metric to start with, the question about whether you should measure all three metrics will come up.

Yes, but sequence matters, and size matters.

Small teams need CSAT scores and a weekly review of low-rated tickets. The overhead of running three concurrent survey programs, managing response rates, and synthesizing conflicting signals is real work. Don't create that infrastructure before you have the team to act on it.

The natural sequence for most support operations:

The teams that get all three right treat them as a system.

For example, low FCR (first contact resolution) will degrade your CES and CSAT scores long before NPS registers the impact.

For a broader look at how these metrics align with operational KPIs such as first response time and average resolution time, see our guide to customer experience KPIs to track.

The "which metric is best?" debate misses the point. CSAT, NPS, and CES aren't competing: they measure different dimensions of customer experience on different timelines for different owners. A support manager held accountable for NPS and an operations team that ignores its CES data are both measuring the wrong things for the wrong reasons.

Start with the metric that matches the question you're actually asking. Assign clear ownership before you collect a single response. And don't add complexity until you've built the habit of acting on simplicity. The right metric is the one that changes what you do on Monday morning.