Updated on January 21, 2025

Google, Microsoft, OpenAI and Anthropic… 4 of the biggest names in the AI space.

One alliance.

The Frontier Model Forum.

That’s right – the competitors have come together to create what Google is calling the Frontier Model Forum, in an announcement made yesterday.

What is the forum about? Why did it come into existence? And how is it going to affect you and me, who are seemingly sitting on the sidelines as the big guys make all the important decisions?

Before we learn everything about the forum, here is a quick refresher on how generative AI got to this point.

How it all began – OpenAI and ChatGPT – opening Pandora’s box

Artificial Intelligence was still something that the world thought was a fad among computer scientists. Something that was relegated to science fiction.

Little did the world know that a team of computer researchers had been toiling away in secret at OpenAI, a San Francisco-based AI research and development company.

ChatGPT came into the world in late November 2022. It became the fastest growing internet service in the history of mankind, reaching 100 million users in January 2023.

ChatGPT was not the result of a few months of development. In fact, large language models, or LLMs as they are popularly known, have been in the works for more than two decades.

Fast forward to today, and there is a clear and present danger of ChatGPT being used for malicious purposes. For instance, within a few weeks of ChatGPT becoming available to the public, cybercriminals were asking the tool for known security vulnerabilities in banking websites.

People have used ChatGPT to create malware, making the job of security researchers even more difficult.

And before OpenAI could address these issues, the world had got a taste of what a powerful LLM could do.

And the world began to replicate.

Microsoft, Anthropic, Google – Big Names, Bigger Ambitions.

OpenAI had opened Pandora’s box.

ChatGPT was on everyone’s lips, trending on Twitter for weeks, and the big names in the tech industry all started talking about it.

Paul Buchheit, who created Gmail, called ChatGPT the Google killer, and said that if Google didn’t do anything about it, they would be out of business soon.

Google got into fire-fighting mode, issuing a code red in late December, and soon started working on an LLM of their own.

Google launched Bard, a competitor to ChatGPT, in early February 2023. It initially ran on Google’s Language Model for Dialogue Applications (LaMDA), which came into existence around 2 years ago.

Soon after, Google announced the launch of PaLM 2, its own LLM, during its developer conference, Google I/O 2023.

And it was not like the competition was sitting silently.

By April 2023, Microsoft had invested roughly $13 billion in OpenAI, the team behind ChatGPT. Microsoft began by incorporating ChatGPT technology into its Bing search engine.

In a later statement, Microsoft CEO Satya Nadella said, “Every product of Microsoft will have some of the same AI capabilities to completely transform the product.”

And then came Anthropic, a lesser-known company that came into existence thanks to two former senior scientists at OpenAI, siblings Daniela Amodei and Dario Amodei.

Anthropic released its own LLM called Claude, which found its application in a few specific business use cases. Claude 2, which came into being in July 2023, is available to users in the US and UK via a paid API.

The Frontier Alliance – A smart move by the companies or a forced hand by the government?

We have seen how this Artificial Intelligence war, and it is a war, is heating up with each passing day. One more piece of the puzzle remains, and it happens to be a very important one.

Meta, another big tech company with deep pockets, announced in mid-July that it was joining the AI race with its own LLM, Llama 2.

In a Facebook post, Meta CEO Mark Zuckerberg highlighted how Meta is going to partner with Microsoft. “Meta has a long history of open sourcing our infrastructure and AI work,” he wrote, meaning that Llama 2 will be open-source, unlike other LLMs.

But this, again, was seen by many as a dangerous move.

Oli Buckley, a professor of cybersecurity at the University of East Anglia, compared open-sourcing LLMs to giving people “a template to build nuclear bombs.”

But wait. Was someone else watching all this news with interest, and even skepticism?

With any advance in technology, there is one entity that always wants to regulate it, keep the general public out of harm’s way, and protect its own geopolitical interests – The Government.

The Government steps in

Uncle Sam was watching with increasing interest how these big tech companies were slowly playing around with technology. Technology that had the power to revolutionize entire industries, disrupting them for better or worse.

And Uncle Sam stepped in.

On Friday, July 21, 2023, the Biden administration announced that seven of the top A.I. companies had committed to putting “guardrails” in place to ensure that their products were safe before releasing them to the general public.

The US has been at the forefront of trying to regulate the fast, hitherto unchecked development of Artificial Intelligence. If you are interested in learning more, you can read this interesting article in The New Yorker.

The lawmakers across the pond, in the European Union, were not to be left behind twiddling their thumbs.

Heralded as the world’s most far-reaching attempt to check the harmful effects of artificial intelligence, the EU passed a draft law known as the A.I. Act.

While the final law will take until the end of the year to pass, the EU is ahead of the US and Beijing in curtailing what governments perceive as “technology that can go really wrong,” in the words of OpenAI’s CEO Sam Altman.

The coming together of the Big 4

Yesterday, Google announced that they are teaming up with Anthropic, OpenAI and Microsoft to form what is called the Frontier Model Forum. The Forum will leverage the combined expertise of all the companies that are part of it.

Google calls it “a public library of solutions to support industry best practices and standards.”

Why are they coming together?

The Forum has four core objectives, listed below.

- First Objective: Advancing AI safety research

- Second Objective: Identifying best practices

- Third Objective: Collaborating with stakeholders to share knowledge

- Fourth Objective: Developing applications that solve society’s pressing challenges

The Forum has also set certain criteria that you must meet if you want to be part of it.

You have to be:

- Developing and deploying frontier AI models.

- Demonstrating a “strong commitment to frontier model safety.”

- Willing to contribute to making sure that the Forum meets its objectives, by “participating in joint initiatives.”

What are they planning to do with the Forum?

Primarily, the aim of the Forum is to foster research, and also make sure that AI development takes place at a pace that the world can comprehend. People have been pitting AI-trained chatbots against other chatbots, and the Forum is set to tackle this problem.

AI is becoming increasingly intelligent, and the Forum plans to keep this development in check by putting greater safety measures in place.

The Forum will also act as a bridge between corporates, governments and all the other stakeholders when it comes to addressing risks and safety issues. Cyber threats form one of the biggest concerns that the forum would address.

So, all in all, what we can expect is an umbrella body that is going to govern the development of Artificial Intelligence. Over the coming months, the Forum will establish an Advisory Board that will guide its direction. This board will consist of people from various backgrounds, so that there is equal representation.

How does this matter? What do we feel about the formation of the Forum?

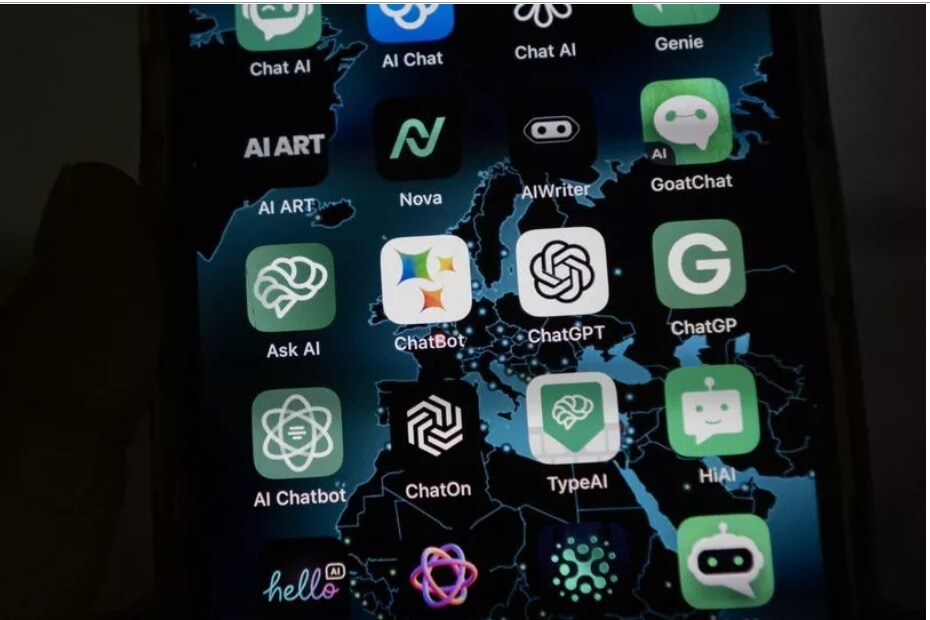

The internet has exploded with ChatGPT clones. There is a brand new AI tool coming out every day, making it even more difficult to separate the wheat from the chaff.

Having been in the AI space for a good part of the last decade, we here at Kommunicate understand how critical it is to ensure that this technology is used responsibly. We have seen rapid development in this space over the last few months, and we understand that it might be unnerving for many. However, we firmly believe that a step like this is definitely going to help in creating the right environment for the responsible development and use of AI.

The Big 4 AI companies did the right thing by coming together to lead from the front and ensure that this new piece of technology stays safe for all of us.

We don’t know what kind of data they will train new AI models on, or whether that will ensure the safety of your data. Big tech can, and should, act more responsibly when it comes to customer data, before the government steps in and spoils the party for everyone.

So where do we see the Forum going from here? We see exciting times ahead, with more and more companies joining the Forum. The emergence of a ‘Superintelligence’, an intelligence that surpasses humans, might still be a distant, dystopian future away.

Yogesh was a former employee at Kommunicate. As the Head of Growth, Marketing & Sales, he was a dynamic and results-driven leader with over 10 years of experience in strategic marketing, sales, and business development.
