Updated on February 25, 2026

For years, customer support leaders viewed automation through a single, narrow lens: deflection. The goal was to build a digital barrier that kept low-level, repetitive tickets away from expensive human agents. Today, however, that paradigm has fundamentally shifted. Powered by advanced large language models (LLMs) and generative artificial intelligence, chatbots are no longer just basic deflection tools.

They have evolved into sophisticated, high-volume frontline agents capable of navigating complex customer journeys. Modern AI agents autonomously resolve intricate issues, personalize responses, and represent the brand just as visibly as any human employee. In fact, 72% of customer experience (CX) leaders now agree that chatbots must serve as an intelligent extension of a brand’s identity rather than a mechanical Turk.

Despite this massive technological leap, a dangerous “set it and forget it” mentality still plagues many support organizations. While companies meticulously review their human agents for empathy, factual accuracy, and tone, their AI counterparts are often left largely unmonitored after deployment.

When complex customer needs arise, unmonitored bots frequently fail; recent research indicates that chatbots successfully resolve only 17% of complex billing or dispute queries, often leading to deep customer frustration and churn. To bridge this gap, businesses must recognize a simple truth: because bots now act as frontline agents, they demand human-level scrutiny.

In this article, we’ll address this gap with a guide for chatbot quality assurance. We’ll cover:

1. What Is a QA Scorecard?

2. Which Categories Define Bot Quality?

3. How Should You Weight Scores?

4. What Does Conversation QA Evaluate?

5. Which Metrics Support the Scorecard?

6. How Do You Review Chatbot Conversations?

7. How Do You Fix QA Defects?

8. How Do You Launch Bot QA?

9. What Does Good Quality Assurance Look Like?

10. Conclusion

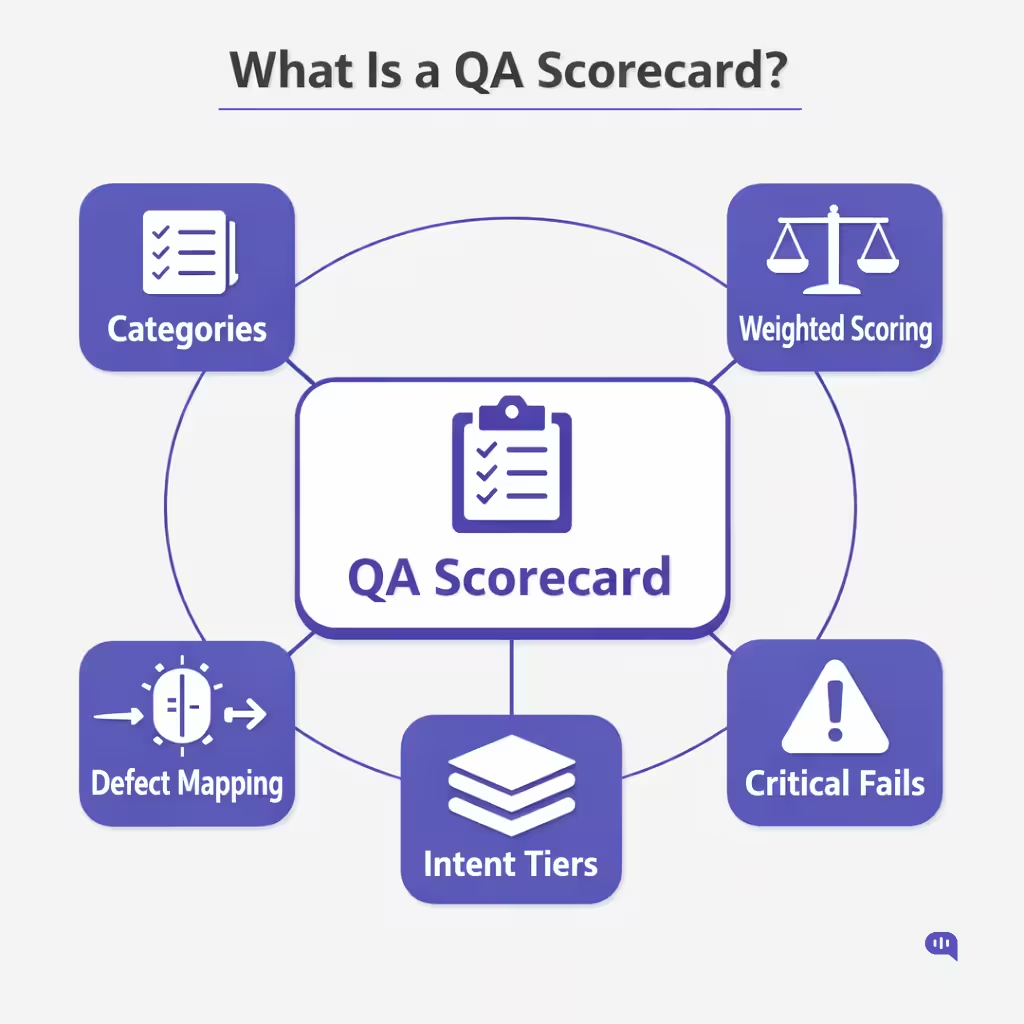

What Is a QA Scorecard?

A QA scorecard is a structured evaluation framework for assessing the quality of individual support conversations against predefined standards. In the context of chatbot quality assurance, it transforms abstract expectations, such as accuracy, helpfulness, and compliance, into measurable, repeatable criteria.

Unlike a dashboard, which aggregates performance indicators, a QA scorecard evaluates the integrity of a single interaction. For bots, this distinction is critical. Automation introduces scale and variability. A QA scorecard introduces control.

At its core, a chatbot QA scorecard must contain seven foundational elements:

1. Clearly Defined Evaluation Categories

A scorecard should break “quality” into specific dimensions. Each category must represent an observable attribute of the conversation. Typical categories for conversation QA include:

- Correctness & Groundedness — Was the response factually accurate and aligned with approved knowledge sources?

- Helpfulness & Clarity — Did the response fully answer the question in a structured, understandable way?

- Policy & Compliance — Were regulatory, legal, or internal policy requirements followed?

- Conversation Control — Did the bot ask clarifying questions where needed and manage context properly?

- Escalation Readiness — Was escalation triggered appropriately and with sufficient context?

Without clearly defined categories, QA devolves into subjective judgment.

2. Observable Scoring Criteria

Each category must include explicit scoring rules. Vague guidance like “Was the answer good?” leads to inconsistent reviews. Strong scorecards define:

- Binary checks (Yes/No)

- Scaled scoring (1–5 or 0–3)

- Defined failure conditions

- Examples of acceptable vs unacceptable responses

For example:

- Correctness = 0 (Fail) if the answer contradicts policy

- Compliance = Critical Fail if sensitive data was requested improperly

This is what makes chatbot evaluation auditable.
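One way to keep such rules auditable is to encode them as data rather than prose. The sketch below is illustrative only: the `Criterion` class, its field names, and the 0–3 and 0/1 scales are assumptions made for this example, not the format of any particular QA tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One observable scoring rule on a scorecard (hypothetical structure)."""
    category: str
    question: str
    min_score: int
    max_score: int
    critical: bool = False  # if True, the lowest score counts as a critical fail

    def validate(self, score: int) -> int:
        """Reject scores outside the defined scale."""
        if not self.min_score <= score <= self.max_score:
            raise ValueError(
                f"{self.category}: score {score} is outside "
                f"{self.min_score}-{self.max_score}"
            )
        return score

    def is_critical_fail(self, score: int) -> bool:
        """True only for critical criteria scored at the bottom of the scale."""
        return self.critical and self.validate(score) == self.min_score


# A scaled criterion (0-3) and a binary criterion (0/1), mirroring the examples above.
correctness = Criterion("Correctness", "Does the answer agree with policy?", 0, 3)
compliance = Criterion(
    "Compliance", "Was sensitive data requested properly?", 0, 1, critical=True
)
```

With this shape, a reviewer tool can refuse out-of-range scores and flag a compliance score of 0 as a critical fail without relying on reviewer memory.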

3. Weighted Scoring Model

Not all dimensions carry equal risk. A QA scorecard must assign relative importance to categories.

Example weighting:

- Correctness: 30%

- Compliance: 25%

- Helpfulness: 20%

- Conversation Control: 15%

- Escalation Quality: 10%

In regulated environments, compliance may override total scoring entirely. This prevents minor UX strengths from masking severe risk defects.

Weighted models ensure support quality metrics align with business priorities—not just CX aesthetics.

4. Critical Fail Logic

Certain failures should override overall scoring. These are “non-negotiables.”

Examples:

- Incorrect financial instructions

- Hallucinated policy statements

- Failure to escalate high-risk scenarios

- Exposure of sensitive information

Critical fail logic protects against automation risk while maintaining scale. In chatbot quality assurance, this is often more important than the average score.

5. Intent-Level Adaptability

A static scorecard is insufficient. Bots handle different types of intents:

- Informational

- Transactional

- Account-specific

- High-stakes (refunds, cancellations, disputes)

The scorecard must support overlays or conditional criteria for each intent category. For example:

- Informational flows may prioritize clarity.

- Financial flows may prioritize compliance and confirmation loops.

- Cancellation flows may prioritize escalation timing.

This ensures the QA instrument evaluates risk proportionally.

6. Defect Classification Layer

A strong QA scorecard doesn’t stop at scoring—it diagnoses root cause. Each failure should map to a defect taxonomy:

- Knowledge gap

- Retrieval error

- Intent misclassification

- Flow design flaw

- Escalation misroute

- Policy misconfiguration

This transforms QA from grading into operational improvement.
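As a rough illustration, the taxonomy can be stored as an enumeration so that every failed review carries exactly one root-cause label that can be counted later. The `Defect` enum and the sample review log are hypothetical; the conversation IDs are made up for the example.

```python
from collections import Counter
from enum import Enum


class Defect(Enum):
    """Root-cause labels taken from the taxonomy above."""
    KNOWLEDGE_GAP = "knowledge gap"
    RETRIEVAL_ERROR = "retrieval error"
    INTENT_MISCLASSIFICATION = "intent misclassification"
    FLOW_DESIGN_FLAW = "flow design flaw"
    ESCALATION_MISROUTE = "escalation misroute"
    POLICY_MISCONFIGURATION = "policy misconfiguration"


def defect_counts(failed_reviews):
    """Count how often each defect type appears across failed reviews."""
    return Counter(defect for _conversation_id, defect in failed_reviews)


# Hypothetical review log: (conversation id, root-cause defect).
failed_reviews = [
    ("c-101", Defect.RETRIEVAL_ERROR),
    ("c-102", Defect.KNOWLEDGE_GAP),
    ("c-103", Defect.RETRIEVAL_ERROR),
]
top_defect, top_count = defect_counts(failed_reviews).most_common(1)[0]
```

Counting by label is what lets a team see that, for instance, retrieval errors dominate a review cycle and should be fixed first.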

7. Documentation and Calibration Standards

To maintain inter-reviewer reliability, the scorecard must include:

- Reviewer guidelines

- Calibration examples

- Escalation dispute resolution process

- Version control (when scoring rules change)

Without calibration, support QA becomes inconsistent across reviewers, especially when evaluating probabilistic AI systems.

What Is a QA Scorecard Not?

It is not:

- A KPI dashboard

- A containment tracker

- A CSAT summary

- A purely automated grading system

Those are measurement tools. A QA scorecard is an evaluation instrument.

Why Do You Need a QA Scorecard for Chatbot Quality Assurance?

Bots operate at scale. A single logic flaw can affect thousands of customers before a metric flags abnormal behavior. A QA scorecard allows teams to detect quality degradation before it becomes visible in escalation spikes or recontact trends.

In modern support environments, conversation QA must function like code review: structured, systematic, risk-aware.

A QA scorecard is the control layer that ensures automation scales resolution, not hidden defects.

We’ll evaluate these seven aspects of QA scorecards throughout this article. The first aspect we’ll focus on is bot quality.

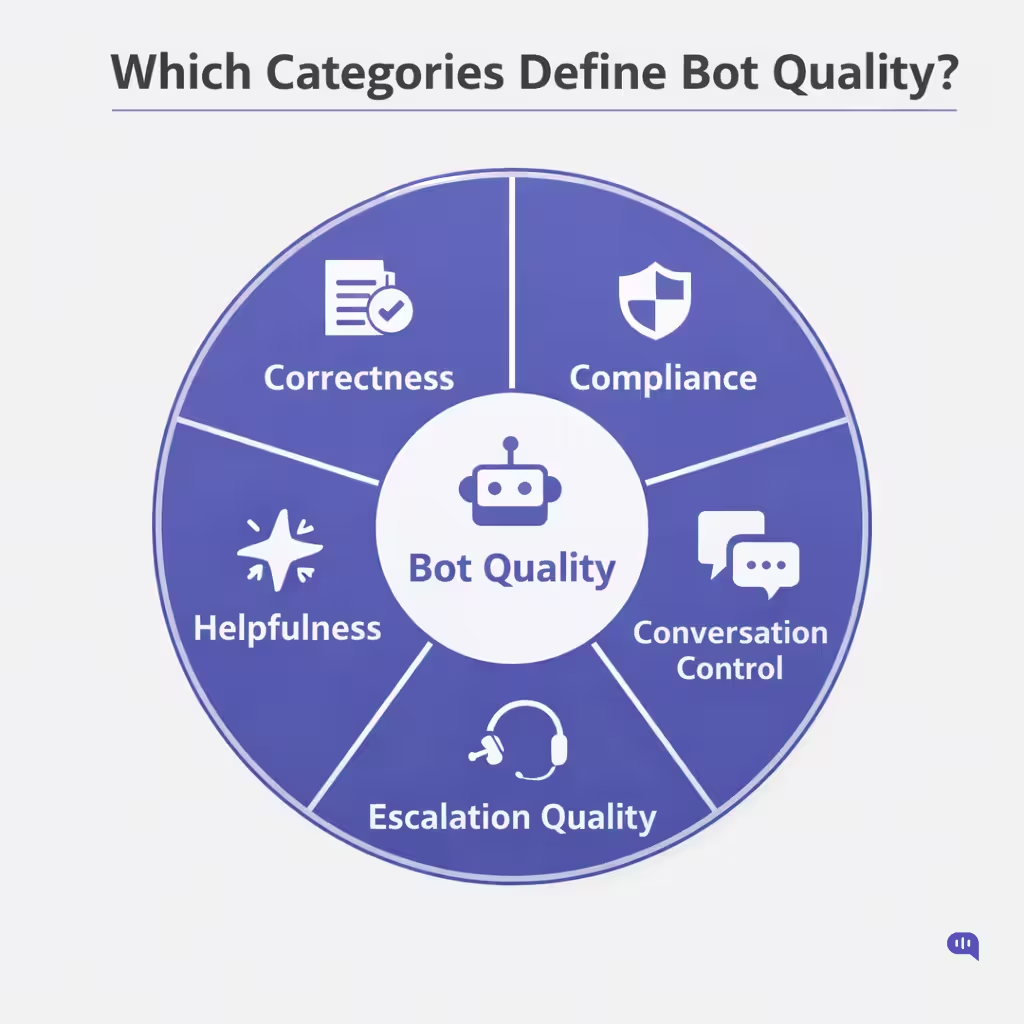

Which Categories Define Bot Quality?

If you cannot define quality, you cannot measure it.

In chatbot quality assurance, “quality” must be decomposed into observable dimensions that reviewers can consistently evaluate across conversations, intents, and risk tiers.

Bot quality is not a single metric. It is a composite of interaction accuracy, risk control, conversational intelligence, and outcome integrity.

Below are the core categories that should define any serious chatbot QA scorecard.

1. Correctness & Groundedness

This is the non-negotiable foundation. A bot response must be:

- Factually accurate

- Aligned with current policy

- Grounded in approved knowledge sources

- Free from hallucinated steps, fees, rules, or claims

In LLM-powered systems, groundedness becomes especially critical. The QA reviewer should ask:

- Did the answer directly address the user’s question?

- Is the information verifiable in the knowledge base?

- Did the bot introduce unsupported assumptions?

Even a polite, well-written answer fails if it is incorrect.

2. Helpfulness & Completeness

Accuracy alone is insufficient. A response can be correct but still unusable.

This category evaluates:

- Whether the answer fully resolves the query

- If steps are logically sequenced

- Whether the response anticipates follow-up friction

- Clarity and readability

Common failures include:

- Partial instructions

- Missing prerequisites

- “Contact support” default responses

- Overly verbose, confusing answers

This evaluation answers a central question: Can a customer realistically resolve their issue using only this response?

3. Policy & Compliance Integrity

Certain intents carry regulatory or legal risk. This category evaluates whether the bot:

- Followed authentication requirements

- Avoided exposing sensitive information

- Applied refund/cancellation rules correctly

- Respected escalation guardrails

- Avoided prohibited claims

In regulated industries, this category may carry critical-fail status. Compliance quality is not optional. It is risk containment.

4. Conversation Control & Context Management

Unlike static FAQs, bots manage multi-turn dialogue. This category assesses:

- Did the bot ask clarifying questions when the intent was ambiguous?

- Did it retain and use context correctly?

- Did it avoid premature answers?

- Did it recover gracefully from confusion?

Strong conversation QA looks for:

- Intelligent disambiguation

- Proper use of confirmations

- Context carryover across turns

Weak conversation control leads to friction, recontacts, and escalation spikes.

5. Escalation Timing & Handoff Quality

Escalation is not failure. Poor escalation design is. This category evaluates:

- Was escalation triggered at the right moment?

- Did the bot avoid unnecessary containment?

- Was sufficient context passed to the human agent?

- Was the routing appropriate?

High containment with poor escalation quality creates silent experience debt.

In chatbot quality assurance, escalation quality is often the most under-evaluated dimension.

6. Experience & Tone Consistency

Even in automated systems, perception matters. This includes:

- Tone appropriateness

- Empathy markers (when needed)

- Consistency with brand voice

- Avoidance of robotic repetition

Tone cannot compensate for inaccuracy. But poor tone can damage an otherwise correct interaction.

7. Outcome Integrity

This final category focuses on results, not performance optics.

Ask:

- Did the conversation lead to resolution or deferral?

- Is there a risk of recontact?

- Was the customer left with actionable next steps?

Outcome integrity connects qualitative scoring to support quality metrics like repeat contact rate, escalation reversals, and post-bot CSAT.

The Structural Principle

These categories work together:

- Correctness protects truth.

- Compliance protects risk.

- Conversation control protects flow.

- Escalation protects experience continuity.

- Outcome integrity protects resolution.

When organizations collapse bot evaluation into containment or automation rate, they reduce quality to volume. A structured category model ensures chatbot evaluation measures what actually matters: whether the automation delivered a safe, correct, and complete customer outcome.

Once you have a score for each category, combine them in a weighted model to calculate the chatbot’s final score.

How Should You Weight Scores?

Weighting determines what your organization truly values.

In chatbot quality assurance, not all quality dimensions carry equal risk or business impact. If every category is scored equally, your QA framework will overemphasize surface-level improvements and underweight high-risk failures. A structured weighting model ensures that the evaluation reflects customer impact, regulatory exposure, and operational cost.

Quality assurance frameworks in contact centers consistently emphasize that QA programs must align scoring with business priorities and risk tolerance. The same principle applies to chatbot evaluation.

1. Start With Risk, Not Aesthetics

The first principle of weighting is consequence-based prioritization.

- A mildly unclear response creates friction.

- An inaccurate policy statement creates misinformation.

- A compliance breach creates legal exposure.

Best practices in QA design recommend assigning greater weight to categories with higher downstream risk or financial impact. In AI-driven systems, correctness and compliance errors scale instantly, amplifying their risk profile.

This is why correctness and compliance should anchor the majority of the total score.

2. Anchor the Model Around Core Dimensions

A practical baseline model for many support environments looks like this:

| Category | Suggested Weight |
| --- | --- |
| Correctness & Groundedness | 30% |
| Policy & Compliance | 25% |
| Helpfulness & Completeness | 20% |
| Conversation Control | 15% |
| Escalation Quality | 10% |

This distribution reflects two realities:

- Incorrect answers damage trust.

- Compliance failures introduce disproportionate risk.

Industry experts recommend aligning evaluation categories with customer outcomes and policy adherence. In chatbot systems, this alignment becomes more critical as automation scales.

Correctness + Compliance should generally account for at least half of the total weight.
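The arithmetic behind this baseline can be sketched in a few lines. The example assumes each category score has already been normalized to the range 0.0–1.0; the function names and dictionary keys are illustrative, and only the percentages come from the baseline model above.

```python
WEIGHTS = {
    "correctness": 0.30,
    "compliance": 0.25,
    "helpfulness": 0.20,
    "conversation_control": 0.15,
    "escalation": 0.10,
}


def check_weights(weights):
    """Weights must sum to 1, with correctness + compliance at least half."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    if weights["correctness"] + weights["compliance"] < 0.5 - 1e-9:
        raise ValueError("correctness + compliance should be at least 50%")


def weighted_score(scores, weights=WEIGHTS):
    """Combine normalized category scores (0.0-1.0) into a 0-100 score."""
    check_weights(weights)
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    return round(100 * sum(weights[c] * scores[c] for c in weights), 2)


# A correct, compliant answer that was only half helpful and escalated badly.
example = weighted_score({
    "correctness": 1.0,
    "compliance": 1.0,
    "helpfulness": 0.5,
    "conversation_control": 1.0,
    "escalation": 0.0,
})
```

Here the conversation scores 30 + 25 + 10 + 15 + 0 = 80 out of 100, which shows how weaker helpfulness and escalation scores reduce the total without outweighing correctness and compliance.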

3. Introduce Critical Fail Overrides

Some failures should override weighting entirely. Examples include:

- Hallucinated or fabricated policy statements

- Incorrect financial or medical instructions

- Unauthorized disclosure of sensitive information

- Failure to escalate high-risk cases

AI evaluation frameworks stress the importance of defining non-negotiable evaluation gates, particularly for safety and factual reliability. In chatbot QA, this takes the form of critical fail logic.

This prevents weighted scoring from masking severe risk.
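A minimal sketch of such an override follows, taking an already computed weighted score as input. The flag names, the `Review` class, and the pass threshold of 80 are assumptions chosen for illustration; each organization would define its own.

```python
from dataclasses import dataclass, field

# Hypothetical flag names for the critical failures listed above.
CRITICAL_FAILS = frozenset({
    "hallucinated_policy",
    "incorrect_financial_or_medical_instruction",
    "sensitive_data_disclosure",
    "missed_high_risk_escalation",
})


@dataclass
class Review:
    weighted_score: float  # 0-100, produced by the weighted model
    flags: set = field(default_factory=set)  # issues the reviewer flagged


def final_result(review, pass_threshold=80.0):
    """Return (score, passed). Any critical flag overrides the weighted score."""
    if review.flags & CRITICAL_FAILS:
        return 0.0, False
    return review.weighted_score, review.weighted_score >= pass_threshold


clean = final_result(Review(92.0))
overridden = final_result(Review(92.0, {"sensitive_data_disclosure"}))
```

Both reviews have the same weighted score, but the second one fails outright because a critical flag was raised. That is the behavior the override exists to guarantee.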

4. Weight by Intent Tier

Not all conversations carry equal exposure.

A general FAQ response differs materially from a financial cancellation or identity verification flow. QA design best practices recommend tailoring evaluation criteria to the interaction type. You can implement tier-based weighting overlays:

Tier 1 — Informational

Emphasis on clarity and correctness.

Tier 2 — Transactional

Increased weight on confirmation and completeness.

Tier 3 — High-Risk / Regulated

- Elevated compliance weighting.

- Expanded critical fail rules.

- Stricter escalation standards.

This allows your chatbot quality assurance framework to scale across intent categories without flattening risk differences.
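One possible way to implement overlays is to apply per-tier multipliers to a base weight set and then renormalize so the weights still sum to 1. The multiplier values and tier keys below are assumptions for illustration, not recommended settings.

```python
BASE_WEIGHTS = {
    "correctness": 0.30,
    "compliance": 0.25,
    "helpfulness": 0.20,
    "conversation_control": 0.15,
    "escalation": 0.10,
}

# Hypothetical multipliers per tier; categories not listed keep a multiplier of 1.0.
TIER_OVERLAYS = {
    "informational": {"correctness": 1.25, "helpfulness": 1.25},
    "transactional": {"helpfulness": 1.25, "conversation_control": 1.25},
    "high_risk": {"compliance": 1.5, "escalation": 1.5},
}


def weights_for_tier(tier, base=BASE_WEIGHTS):
    """Scale base weights by the tier's multipliers, then renormalize to 1.0."""
    multipliers = TIER_OVERLAYS[tier]
    raw = {cat: w * multipliers.get(cat, 1.0) for cat, w in base.items()}
    total = sum(raw.values())
    return {cat: w / total for cat, w in raw.items()}


high_risk = weights_for_tier("high_risk")
```

Renormalizing keeps scores comparable across tiers on the same 0–100 scale while still shifting emphasis, for example toward compliance in the high-risk tier.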

5. Align Weighting With Support Quality Metrics

Weighting should not exist in isolation from operational data.

If escalation rates decline but QA scores on escalation quality drop, your weighting model should detect the tradeoff early. Similarly, if helpfulness scores decline and repeat contacts rise, the QA system must surface that relationship.

Modern QA programs emphasize connecting qualitative scoring with performance metrics to ensure measurement integrity. Weighting creates the structural link between conversation QA and support quality metrics.

6. Recalibrate Over Time

Weighting is not static. As bots mature:

- Early-stage systems may require heavier correctness weighting.

- Mature systems may shift focus toward conversation flow optimization.

- Expanding into regulated verticals may require higher compliance emphasis.

Evaluation frameworks for AI systems consistently recommend iterative calibration as systems evolve (Codecademy Team). The same discipline applies to chatbot evaluation.

Monthly or quarterly calibration reviews prevent score drift and ensure scoring reflects current risk realities.

Strategic Principle

Weighting answers one core question:

Which failures create the most significant downside for your organization?

Chatbot quality assurance must prioritize exposure over aesthetics. A properly weighted QA scorecard ensures that automation scales resolution and safety.

Together, the categories and weights above give you a basic scorecard. But to evaluate chatbot conversations, you need to zoom in on how conversations should be structured.

What Does Conversation QA Evaluate?

Conversation QA focuses on the integrity of individual interactions, ensuring they respond correctly, safely, and in a way that preserves resolution quality. Unlike aggregate support quality metrics, conversation QA evaluates how decisions were made within the dialogue itself.

Below is a structured view of what conversation QA should assess in a chatbot quality assurance program:

| Evaluation Dimension | What It Examines | Why It Matters |
| --- | --- | --- |
| Intent Accuracy | Whether the bot correctly understood the user’s request | Misclassification drives incorrect answers and unnecessary containment |
| Response Correctness | Factual accuracy and alignment with approved knowledge sources | Prevents misinformation and hallucinations |
| Completeness | Whether the answer fully resolves the question | Partial answers increase repeat contacts |
| Clarity & Structure | Logical sequencing, readability, and actionable steps | Reduces friction and follow-up questions |
| Policy & Compliance | Adherence to authentication, legal, or regulatory requirements | Protects against risk and liability |
| Context Management | Proper use of previous conversation turns | Avoids repetitive or irrelevant responses |
| Clarification Behavior | Whether the bot asked follow-up questions when needed | Prevents premature or incorrect answers |
| Escalation Timing | Whether the bot escalated at the appropriate moment | Balances containment with resolution |
| Handoff Quality | Accuracy and completeness of context passed to agents | Prevents customers from repeating information |
| Outcome Integrity | Whether the conversation likely led to a real resolution | Connects qualitative QA to operational metrics |

Conversation QA evaluates decision quality at the interaction level. When applied consistently within a structured QA scorecard, it ensures chatbot evaluation measures outcome integrity. To determine a chatbot’s outcomes, you need to measure it against chatbot and customer service metrics.

Which Metrics Support the Scorecard?

A QA scorecard evaluates the quality of individual conversations. Metrics determine whether that quality sustains at scale. In chatbot quality assurance, the goal is alignment: improvements in conversation QA should correlate with measurable operational gains.

Below are the core support quality metrics that should sit beside your QA scorecard:

- Actual Resolution Rate: Percentage of bot conversations that end in confirmed issue resolution.

- Containment Rate: Percentage of conversations entirely handled by the bot without human intervention.

- Escalation Rate (by intent tier): Percentage of conversations routed to agents, segmented by risk category.

- Recontact Rate (24h / 7d): Percentage of users who return with the same or related issue after a bot interaction.

- Fallback Rate: Percentage of responses that trigger a generic “I can’t help” message or a failure flow.

- Post-Bot CSAT: Customer satisfaction score collected immediately after automation.

- Handoff Context Completeness: Percentage of escalations where intent, summary, and prior steps are passed accurately to agents.

- Misroute Rate: Percentage of escalations routed to the wrong queue or team.

- Policy Violation Rate: Incidents involving unsafe guidance or compliance breaches.

- QA Pass Rate (by intent tier): Percentage of reviewed conversations meeting your defined QA score threshold.

Metrics do not replace a QA scorecard; they validate it. When score improvements lead to higher resolution, lower recontacts, and fewer compliance incidents, chatbot evaluation moves from theoretical grading to operational accountability.
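Several of these rates can be derived from the same conversation log. The sketch below assumes a hypothetical `Conversation` record with the fields shown. Real platforms expose this data differently, and "resolved" would need a confirmed signal, as the resolution-rate definition above requires.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    resolved: bool          # confirmed issue resolution
    escalated: bool         # routed to a human agent
    recontacted_7d: bool    # user returned with the same issue within 7 days
    fallback_turns: int     # bot responses that hit a fallback or failure flow
    total_bot_turns: int    # all bot responses in the conversation


def rate(numerator, denominator):
    """Percentage, returning 0.0 when there is nothing to measure."""
    return 0.0 if denominator == 0 else 100.0 * numerator / denominator


def support_metrics(conversations):
    n = len(conversations)
    return {
        "resolution_rate": rate(sum(c.resolved for c in conversations), n),
        "containment_rate": rate(sum(not c.escalated for c in conversations), n),
        "escalation_rate": rate(sum(c.escalated for c in conversations), n),
        "recontact_rate_7d": rate(sum(c.recontacted_7d for c in conversations), n),
        "fallback_rate": rate(
            sum(c.fallback_turns for c in conversations),
            sum(c.total_bot_turns for c in conversations),
        ),
    }
```

Note that in this sketch containment and resolution are computed independently: a conversation can be contained (never escalated) and still unresolved, which is the gap the scorecard is meant to expose.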

Now that we’ve established the theoretical base for our evaluations, let’s start talking practically about how the review process works.

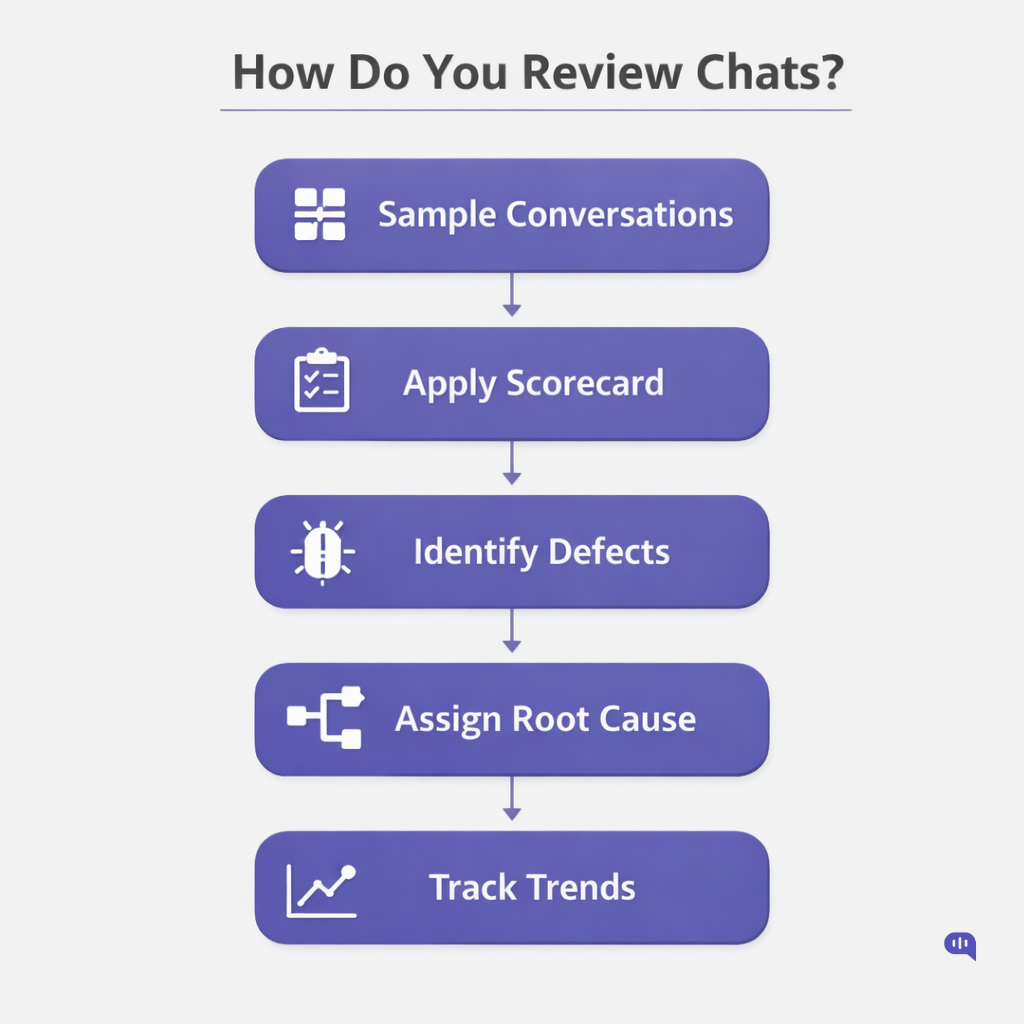

How Do You Review Chatbot Conversations?

Reviewing bot conversations requires structure, sampling discipline, and calibration. Unlike agent QA, chatbot quality assurance evaluates the quality of system decisions. The review process must therefore be systematic, risk-aware, and repeatable.

Below is a practical framework for conducting conversation QA reviews.

1. Define a Sampling Strategy

You cannot review every conversation. Sampling must be intentional. Use a mix of:

- Risk-based sampling: High-stakes intents (refunds, cancellations, compliance-sensitive flows)

- Volume-based sampling: Top 10–20 highest traffic intents

- Regression sampling: Conversations after KB or prompt updates

- Random sampling: Detect unknown failure patterns

Sampling should represent both high-frequency and high-risk interactions.
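The mix above can be approximated with a small sampler that draws from each bucket and removes duplicates. This is a sketch under stated assumptions: conversations are dictionaries with hypothetical `id` and `intent` keys, and the per-bucket quota and seed are arbitrary.

```python
import random


def build_sample(conversations, high_risk_intents, top_intents,
                 changed_intents, per_bucket=2, seed=0):
    """Draw up to `per_bucket` conversations per sampling strategy.

    Returns a list of (conversation, reason) pairs with no duplicate ids.
    A conversation keeps the reason of the first bucket that selected it.
    """
    rng = random.Random(seed)  # seeded so a review cycle is reproducible
    buckets = {
        "risk": [c for c in conversations if c["intent"] in high_risk_intents],
        "volume": [c for c in conversations if c["intent"] in top_intents],
        "regression": [c for c in conversations if c["intent"] in changed_intents],
        "random": list(conversations),
    }
    picked = {}
    for reason, pool in buckets.items():
        for conv in rng.sample(pool, min(per_bucket, len(pool))):
            picked.setdefault(conv["id"], (conv, reason))
    return list(picked.values())
```

Recording the reason alongside each conversation makes it possible to report pass rates separately for risk-based, volume-based, regression, and random samples.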

2. Segment by Intent Tier

Before reviewing, categorize conversations:

- Informational

- Transactional

- Account-specific

- High-risk / regulated

This ensures the QA scorecard is applied proportionally. A password reset should not be evaluated with the same level of strictness as a financial dispute.

3. Apply the QA Scorecard Consistently

For each conversation:

- Score each defined category (correctness, compliance, clarity, escalation, etc.)

- Flag critical failures immediately

- Document defect type (knowledge gap, retrieval error, misroute, etc.)

- Note whether the resolution was likely achieved

Consistency matters more than speed. Conversation QA must be defensible.

4. Review the Full Conversation Flow

Do not evaluate single responses in isolation. Assess:

- Initial intent detection

- Clarification steps

- Context carryover

- Escalation timing

- Handoff quality

In chatbots, many failures occur across turns.

5. Record Root Cause, Not Just Score

Scoring alone does not improve quality. Each failure should map to a defect category:

- Intent misclassification

- Missing knowledge

- Retrieval mismatch

- Prompt logic flaw

- Escalation rule error

This transforms chatbot evaluation into operational improvement.

6. Calibrate Reviewers

To maintain scoring reliability:

- Conduct monthly calibration sessions

- Review borderline cases as a group

- Align on critical fail definitions

- Update scoring guidance when necessary

Without calibration, support QA becomes subjective, especially in probabilistic AI systems.
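Calibration is easier to track when agreement is measured. The article does not prescribe a statistic. Cohen's kappa is used here as one common choice for two reviewers labeling the same conversations, since it corrects raw agreement for agreement expected by chance.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two reviewers who labeled the same conversations."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Share of conversations where both reviewers gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each reviewer's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used a single identical label throughout
    return (observed - expected) / (1 - expected)


reviewer_1 = ["pass", "pass", "fail", "fail"]
reviewer_2 = ["pass", "fail", "fail", "fail"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

In this example the reviewers agree on three of four conversations (75%), chance agreement is 50%, and kappa works out to 0.5. A team could track this value across calibration sessions and revisit scoring guidance when it drops.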

7. Close the Loop

After review:

- Share defect trends with AI and CX teams

- Prioritize fixes based on risk and volume

- Re-audit affected intents after changes

- Track QA pass rate trends over time

QA should reduce defect density over successive review cycles.

Effective chat review is about diagnosing system behavior at scale. When appropriately structured, conversation QA becomes the control mechanism that ensures automation scales resolution, not hidden defects.

Now that we know how to review chatbot outputs, let’s explore what to do when a review surfaces defects.

How Do You Fix QA Defects?

Identifying defects through chatbot quality assurance is only the first step. The real value of conversation QA lies in remediation. Each failed scorecard category should map to a clear root cause and a defined corrective action.

Below is a practical mapping of QA categories to common defect types and corrective actions.

| QA Category | Common Defects Identified | Likely Root Cause | Corrective Action |
| --- | --- | --- | --- |
| Correctness & Groundedness | Hallucinated facts, outdated policy references, incomplete answers | Weak retrieval logic, outdated knowledge base, over-permissive generation | Update KB content, tighten grounding rules, improve retrieval ranking, and add citation enforcement |
| Helpfulness & Completeness | Partial instructions, vague next steps, overlong responses | Poor prompt structure, missing edge-case coverage | Refine response templates, add structured step formatting, and expand intent playbooks |
| Policy & Compliance | Missing authentication steps, incorrect financial guidance, unsafe disclosures | Missing guardrails, compliance logic gaps | Add hard validation checks, introduce compliance filters, and implement critical fail triggers |
| Conversation Control | Failure to clarify ambiguous intent, repetitive loops, and lost context | Weak disambiguation prompts, context window mismanagement | Add clarification flows, improve context memory handling, and redesign multi-turn logic |
| Escalation Timing | Over-containment of complex cases, premature escalation | Aggressive containment rules, unclear risk thresholds | Adjust escalation triggers, introduce intent-level thresholds, and review fallback logic |
| Handoff Quality | Missing summaries, incorrect routing, and lost customer state | Poor integration mapping, incomplete metadata transfer | Standardize escalation payload fields, enforce required context blocks, and validate routing logic |
| Outcome Integrity | High QA score but rising recontacts, unresolved edge cases | Gaps in resolution confirmation, weak follow-up prompts | Add confirmation checkpoints, implement the resolution validation step, and refine the closing prompts |

Once defects are categorized:

1. Assign ownership (AI team, CX ops, compliance, content)

2. Prioritize by risk and volume

3. Ship fixes in controlled releases

4. Re-sample affected intents post-fix

5. Track defect density trends

The goal is to reduce systemic defect patterns, not just to patch individual conversations.
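Steps 2 and 5 can be made concrete with two small helpers. The risk weights per tier and the definition of defect density as defects per 100 reviewed conversations are assumptions for this sketch; the defect names are invented examples.

```python
# Hypothetical risk weights per intent tier; higher means more exposure.
RISK_WEIGHT = {
    "informational": 1,
    "transactional": 2,
    "account_specific": 3,
    "high_risk": 5,
}


def prioritize(defects):
    """Order defects by risk weight multiplied by affected conversation volume."""
    return sorted(
        defects,
        key=lambda d: RISK_WEIGHT[d["tier"]] * d["affected_conversations"],
        reverse=True,
    )


def defect_density(defect_count, reviewed_conversations):
    """Defects per 100 reviewed conversations, for trend tracking."""
    if reviewed_conversations <= 0:
        raise ValueError("reviewed_conversations must be positive")
    return 100.0 * defect_count / reviewed_conversations


backlog = prioritize([
    {"name": "verbose FAQ answers", "tier": "informational", "affected_conversations": 150},
    {"name": "outdated refund policy", "tier": "high_risk", "affected_conversations": 40},
    {"name": "missing confirmation step", "tier": "transactional", "affected_conversations": 50},
])
```

With these weights, the refund-policy defect ranks first even though it affects fewer conversations, because its tier carries more risk. Tracking `defect_density` per review cycle then shows whether shipped fixes are actually reducing defects.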

QA defects are signals of system behavior under real-world pressure. Fixing them requires structured diagnosis, cross-functional ownership, and iterative release discipline. When chatbot evaluation is paired with a clear remediation framework, support QA evolves from reactive auditing into continuous quality engineering.

Now that you know what the chatbot quality assurance team does and how it works, let’s formulate a quick launch plan for evaluating a chatbot.

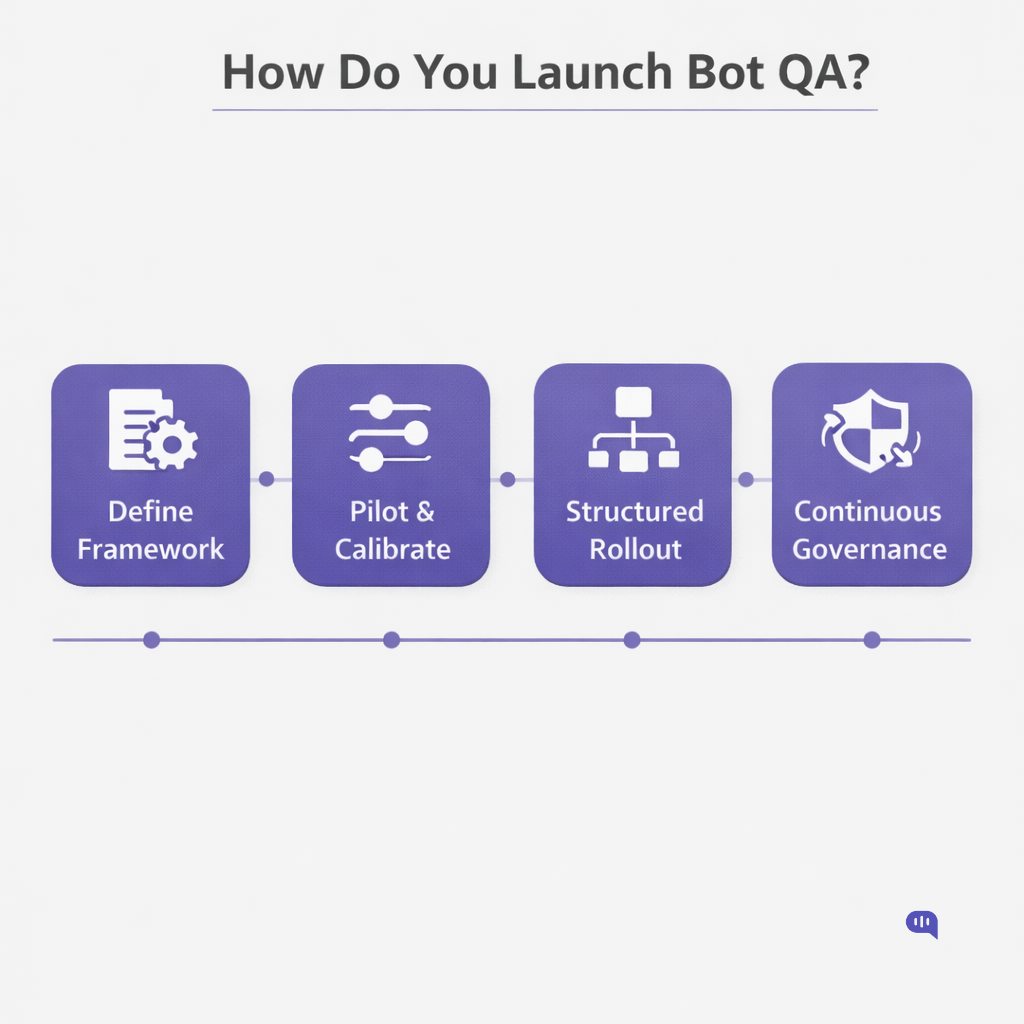

How Do You Launch Bot QA?

Launching chatbot quality assurance requires sequencing, not speed. You are building an evaluation discipline that must scale with automation. The rollout should move from definition to calibration to expansion to continuous governance.

Below is a practical four-stage launch model, including timeline expectations and concrete deliverables at each stage.

Stage 1: Define the Framework (Weeks 1–2)

Objective: Establish evaluation standards before scaling reviews.

What Happens Here

- Define QA categories (correctness, compliance, conversation control, escalation, etc.)

- Design a weighted scoring model

- Define critical fail rules

- Segment intents by risk tier

- Align stakeholders (Support Ops, AI, Compliance, CX)

This stage ensures that chatbot evaluation standards are clear before sampling begins.

Deliverables

- ✅ Approved QA Scorecard (v1)

- ✅ Category Definitions & Scoring Guide

- ✅ Critical Fail Matrix

- ✅ Intent Risk Tier Framework

- ✅ Governance Owner Matrix

Stage 2: Pilot & Calibration (Weeks 3–4)

Objective: Test the scorecard in real-world conditions and align reviewers.

What Happens Here

- Sample 100–200 conversations across top intents

- Apply the scorecard consistently

- Conduct calibration sessions

- Identify ambiguous scoring areas

- Refine weightings and definitions

Calibration is critical in chatbot quality assurance because AI responses can vary across similar prompts.

Deliverables

- ✅ Calibration Report (inter-reviewer variance analysis)

- ✅ Updated Scorecard (v1.1)

- ✅ Defect Baseline (defect density by category)

- ✅ Initial QA Pass Rate Benchmark

Stage 3: Structured Rollout (Weeks 5–8)

Objective: Operationalize conversation QA as a recurring process.

What Happens Here

- Establish recurring sampling cadence (weekly or biweekly)

- Introduce risk-weighted sampling (high-risk + high-volume)

- Build a QA reporting dashboard (qualitative + metrics alignment)

- Create defect taxonomy tracking

At this stage, QA moves from pilot to program.

Deliverables

- ✅ Recurring QA Sampling Plan

- ✅ Defect Taxonomy Tracker

- ✅ QA Dashboard (Pass Rate, Critical Fails, Trends)

- ✅ Escalation Quality Monitoring Report

Stage 4: Continuous Optimization & Governance (Ongoing)

Objective: Embed QA into release cycles and performance management.

What Happens Here

- Tie QA review to bot releases and KB updates

- Introduce regression reviews post-deployment

- Monitor defect density trends over time

- Adjust weightings as risk profile evolves

- Integrate QA findings into roadmap planning

At maturity, chatbot quality assurance functions like software QA—every release passes through evaluation gates.
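A release gate of this kind can be sketched as a simple check over the regression sample reviewed for a release. The thresholds (a 90% pass rate and zero critical fails) and the review dictionary keys are assumptions, not prescribed values.

```python
def release_gate(reviews, min_pass_rate=0.90, max_critical_fails=0):
    """Decide whether a bot release may proceed.

    `reviews` is a list of dicts with boolean 'passed' and 'critical_fail'
    keys, one per conversation in the regression sample.
    Returns (approved, reason).
    """
    if not reviews:
        return False, "no regression sample reviewed"
    critical = sum(r["critical_fail"] for r in reviews)
    if critical > max_critical_fails:
        return False, f"{critical} critical fail(s) in sample"
    pass_rate = sum(r["passed"] for r in reviews) / len(reviews)
    if pass_rate < min_pass_rate:
        return False, f"pass rate {pass_rate:.0%} below {min_pass_rate:.0%}"
    return True, "ok"
```

Checking critical fails before the pass rate mirrors the override principle described earlier: a single severe failure blocks the release regardless of how well the rest of the sample scored.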

Deliverables

- ✅ Release QA Gate Checklist

- ✅ Quarterly QA Trend Analysis

- ✅ Risk Recalibration Review

- ✅ Continuous Improvement Backlog

Timeline Overview

- Weeks 1–2: Framework design

- Weeks 3–4: Pilot and calibration

- Weeks 5–8: Structured rollout

- Ongoing: Governance and optimization

Most organizations can establish foundational bot QA within 60 days. Maturity, however, comes from sustained iteration.

Launching bot QA is not a project milestone—it is the installation of a control system. When evaluation becomes embedded in release cycles, sampling cadence, and defect remediation, chatbot quality assurance moves from reactive grading to disciplined quality engineering.

Finally, we’re going to talk about what a well-rounded QA process looks like.

What Does Good Quality Assurance Look Like?

High scores or low escalation rates do not define good chatbot quality assurance. It is characterized by consistency, risk control, and measurable improvement over time. Strong support QA programs create clarity: everyone understands what quality means, how it is measured, and how defects are fixed. Weak programs produce dashboards. Strong programs produce discipline.

Below are the observable traits of a mature conversation QA system.

- Clear, Documented Scorecard – Defined categories, weighted scoring, and critical fail logic that are understood across teams.

- Risk-Based Sampling Discipline – High-risk and high-volume intents are reviewed regularly—not just random chats.

- Consistent Reviewer Calibration – Monthly alignment sessions to maintain scoring reliability across evaluators.

- Defect Taxonomy With Ownership – Every QA failure is mapped to a root cause and assigned to a responsible team.

- Critical Fail Enforcement – Compliance or correctness violations immediately trigger remediation, not debate.

- Escalation Quality Monitoring – Handoff context completeness tracked alongside containment.

- Alignment With Operational Metrics – Improvements in QA scores correlate with higher resolution and lower recontacts.

- Release Gates for Bot Updates – Prompt, KB, or routing changes must pass QA validation before full rollout.

- Trend Monitoring Over Time – Defect density and pass rates are reviewed monthly to detect drift.

- Continuous Improvement Loop – QA findings feed directly into backlog prioritization and system refinement.

Strong chatbot evaluation programs do not chase automation optics. They protect outcome integrity at scale. When QA is structured, calibrated, and tied to remediation, automation becomes sustainable. Good quality assurance is not about grading bots. It is about building trust in how they operate.

Conclusion

Chatbot quality assurance is the control layer that determines whether automation strengthens or weakens customer experience. Dashboards can show containment and volume, but only structured conversation QA reveals whether interactions were accurate, compliant, and genuinely resolved. A well-designed QA scorecard, properly weighted and consistently applied, turns subjective judgment into enforceable standards. When defects are classified, owned, and remediated through disciplined review cycles, chatbot evaluation becomes a system of continuous improvement rather than periodic auditing.

The shift from agent-only support QA to unified human-and-bot quality governance is structural, not tactical. Automation now creates first-contact outcomes, which means it must meet the same rigor once reserved for agents.

Organizations that treat QA as a release gate, a calibration discipline, and a risk-management mechanism build AI systems that scale safely. Those that rely solely on metrics risk optimizing for optics rather than for resolution. In modern support environments, quality is what the scorecard defends.

Adarsh Kumar is the CTO & Co-Founder at Kommunicate. As a seasoned technologist, he brings over 14 years of experience in software development, artificial intelligence, and machine learning to his role. His expertise in building scalable and robust tech solutions has been instrumental in the company’s growth and success.