Updated on April 29, 2026

TL;DR

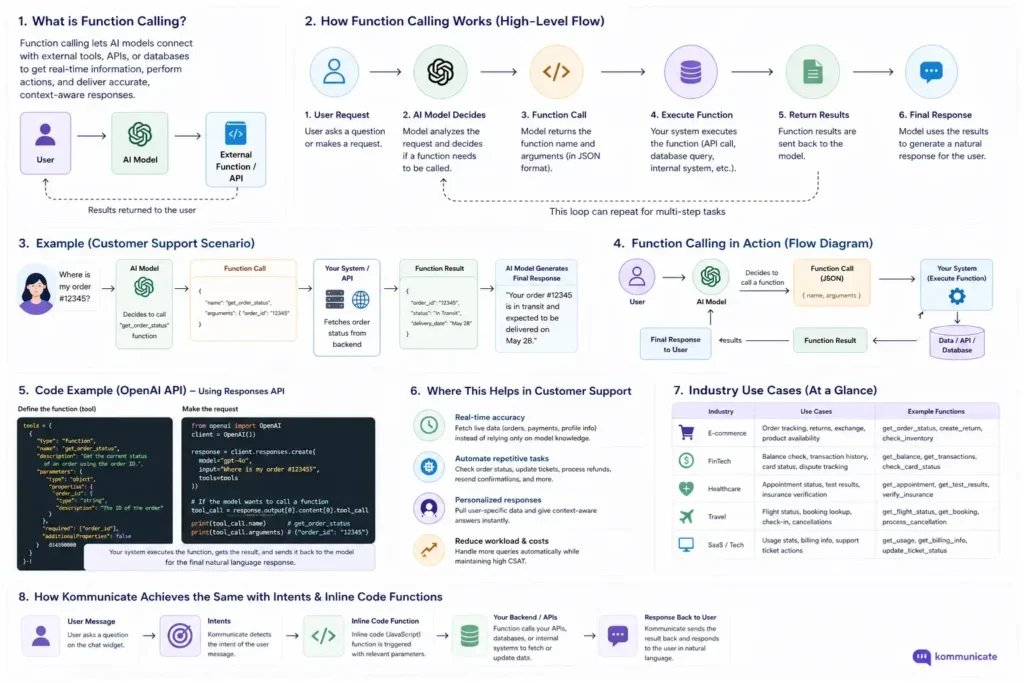

OpenAI function calling (also called tool calling) lets a model decide which of your code functions to run and what arguments to pass — but your backend does the actual execution. The model returns structured JSON, you run the function, and the result goes back so it can write a natural reply. That five-step loop is what turns a chatbot that talks into an agent that acts.

For production, three features matter most: strict: true guarantees the arguments match your schema, parallel_tool_calls handles bundled questions in one turn, and tool_choice controls when the model is allowed to act. Combine them with clear function descriptions and argument validation, and you have the foundation for AI agents that can resolve real requests, not just talk about them.

Most AI chatbots look impressive during demos. They can answer questions, be conversational, and summarize information.

But the moment customers ask them to do an actual task, the experience starts to break.

Questions like:

- “Where is my order?”

- “Freeze my debit card.”

- “Can I reschedule my appointment?”

These questions can’t be answered with FAQs and knowledge bases alone. They require tools and functions. This is where function calling (also known as tool calling) matters: it allows your AI agent to interact with systems instead of just generating text.

In this article, we’re going to take you through the OpenAI function calling process, and cover:

- What is OpenAI function calling?

- Function calling vs tool calling

- How function calling actually works – 5-Step Flow

- Structured Outputs and strict mode

- Parallel function calling

- tool_choice parameter

- Best practices and common pitfalls

- Use cases in customer support

- Final thoughts

- FAQs

What Is OpenAI Function Calling?

Function calling is the layer that allows AI to interact with systems instead of just generating text.

Instead of responding with generic information, the AI can decide:

- Which backend action should happen

- What information needs to be sent

- When a tool should be triggered

The function calling capability in the OpenAI API lets your agent perform actions. Let’s simplify this with a mental model so you can visualize the process.

Simple Mental Model

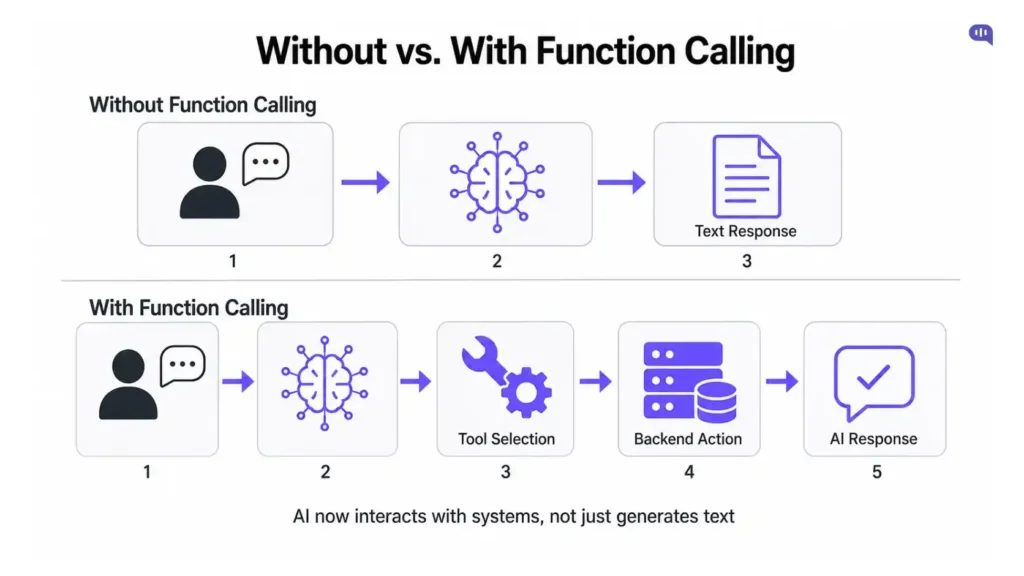

If you build an AI agent without function calling: Customer → AI → Text response

And with function calling: Customer → AI → Tool selection → Backend action → AI response

Now, most developers talk about tool calling and function calling in the same breath when they describe AI agent workflows. Let’s clear up how the two terms relate before talking about how function calling works.

Function calling vs tool calling

If you’ve spent any time reading OpenAI documentation, blog posts, or community threads, you’ve likely seen both terms used. So which is correct?

The short answer: they refer to the same thing.

OpenAI’s official documentation states it directly: function calling (also known as tool calling) provides a powerful and flexible way for OpenAI models to interface with external systems and access data outside their training data. The two terms are interchangeable in modern usage.

But there is a story behind why both names exist, and understanding it helps you read older tutorials, GitHub repos, and Stack Overflow answers without getting confused.

The original API: functions

When OpenAI first introduced this capability in June 2023, the parameter was literally called functions, and the related parameter was function_call. Developers could describe functions to gpt-4-0613 and gpt-3.5-turbo-0613, and have the model intelligently choose to output a JSON object containing arguments to call those functions.

This is why the feature is still commonly referred to as “function calling.”

The rename: tools and tool_choice

A few months later, OpenAI broadened the concept. Instead of just functions, the model could call different types of tools — functions, retrieval, code interpreter, web search, MCP servers, and more. To reflect this, the API parameters were renamed.

The functions and function_call parameters are deprecated (in Azure OpenAI’s API versioning, this happened with the release of the 2023-12-01-preview version of the API). The replacement for functions is the tools parameter. The replacement for function_call is the tool_choice parameter.

So in modern code, you’ll see:

```javascript
tools: [ { type: "function", name: "get_order_status", ... } ]
```

And not:

```javascript
functions: [ { name: "get_order_status", ... } ]
```

The newer tools parameter also unlocked a major capability that the original functions parameter couldn’t handle: parallel calls. With the introduction of tool_calls, OpenAI provided a more robust framework enabling more complex integrations and interactions. This update significantly enhances the model’s utility by allowing parallel function calls and potentially integrating a broader range of tool types in the future.

| | Functions (Legacy) | Tools (Current) |
| --- | --- | --- |
| Parameter for selection | function_call | tool_choice |
| Supports parallel calls | No | Yes |
| Supports non-function tools | No | Yes (web search, file search, MCP, etc.) |
| Introduced | June 2023 | Late 2023 |
| Status | Deprecated | Available |

Function calling vs tool use (across providers)

There’s one more layer of confusion worth clearing up. Different AI providers use different names for the same idea. OpenAI calls it “function calling” while Anthropic calls it “tool use,” but the implementation is nearly identical. Both use JSON schemas to define tools and return structured outputs.

So if you’re comparing platforms or migrating between them:

- OpenAI → “function calling” / “tool calling”

- Anthropic (Claude) → “tool use”

- Google (Gemini) → “function calling”

- Microsoft (.NET / Azure) → “tool calling”

The mechanics are essentially the same: define a JSON schema, the model returns structured arguments, your code executes the action, and the result goes back to the model.

What this means for your code

For practical purposes:

- If you’re writing new code today, use the tools parameter and tool_choice.

- If you see “function calling” anywhere in articles, docs, or this guide: it’s referring to the same capability.

- If you’re maintaining older code with functions and function_call, it still works, but you should plan to migrate. The transition from function_call to tool_calls marks a significant improvement in how developers can leverage OpenAI’s API to build more dynamic, efficient, and complex applications.
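For older code, the request-side migration is mostly mechanical. The sketch below assumes Chat Completions request bodies; `migrateLegacyRequest` is a hypothetical helper name, not part of the OpenAI SDK.

```javascript
// Hypothetical helper: converts a legacy Chat Completions request body
// (functions / function_call) into the current tools / tool_choice shape.
function migrateLegacyRequest(request) {
  const { functions, function_call, ...rest } = request;
  const migrated = { ...rest };

  if (Array.isArray(functions)) {
    // Each legacy function definition is wrapped in a { type: "function" } tool.
    migrated.tools = functions.map((fn) => ({ type: "function", function: fn }));
  }

  if (typeof function_call === "string") {
    // "auto" and "none" keep the same meaning under tool_choice.
    migrated.tool_choice = function_call;
  } else if (function_call && function_call.name) {
    // Forcing one function: { name } becomes { type, function: { name } }.
    migrated.tool_choice = {
      type: "function",
      function: { name: function_call.name }
    };
  }

  return migrated;
}

const legacy = {
  model: "gpt-4-0613",
  messages: [{ role: "user", content: "Where is my order ORD-12345?" }],
  functions: [
    { name: "get_order_status", parameters: { type: "object", properties: {} } }
  ],
  function_call: { name: "get_order_status" }
};

console.log(JSON.stringify(migrateLegacyRequest(legacy), null, 2));
```

The response side changes as well: legacy code reads message.function_call and replies with a role: "function" message, while current code reads message.tool_calls and replies with role: "tool" messages that carry a tool_call_id.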

Throughout the rest of this article, we’ll use “function calling” since that’s what most developers still search for, but every code example uses the modern tools syntax.

With the function calling vs. tool calling confusion cleared up, let’s talk about how function calling works.

How function calling actually works – 5 step flow

OpenAI’s model does not execute your function.

It only decides which function should be called and what arguments to pass. Your backend does the actual execution. The model’s job is to translate natural language into structured JSON. Your job is to do something with it and send the result back.

OpenAI’s official documentation describes the flow as a multi-step conversation between your application and a model via the OpenAI API, broken into five high-level steps. Let’s walk through each one with a practical customer support example where a customer is asking: “Where is my order?”

OpenAI’s official function calling guide has the full API documentation.

Step 1: Define your function schema

You describe each available function as a JSON object inside the tools parameter. The schema includes three things:

- A name (what the model will call)

- A description (natural language the model uses to decide when to call it)

- A parameters block (JSON Schema definition of the inputs)

```javascript
const tools = [
  {
    type: "function",
    function: {
      name: "get_order_status",
      description: "Returns the current shipping status for a customer's order. Use this when a customer asks where their order is, when it will arrive, or about delivery.",
      parameters: {
        type: "object",
        properties: {
          order_id: {
            type: "string",
            description: "The customer's order ID, e.g. 'ORD-12345'"
          }
        },
        required: ["order_id"],
        additionalProperties: false
      },
      strict: true
    }
  }
];
```

A few things worth noting:

- The description is doing real work. The model uses it to decide whether this function is the right one to call. Vague descriptions will lead to wrong function calls.

- Function descriptions count against your token budget: they’re part of the prompt on every request.

- strict: true enables Structured Outputs, which guarantees the model’s arguments match your schema. We’ll cover this in the next section.
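The schema above uses the Chat Completions shape, where the definition is nested under function. The Responses API takes the same fields in a flat object. A minimal sketch of converting one into the other, where `toResponsesTool` is a hypothetical helper name rather than an SDK function:

```javascript
// Hypothetical helper: Chat Completions nests the definition under `function`;
// the Responses API expects name, description, parameters, and strict at the top level.
function toResponsesTool(chatTool) {
  const { type, function: fn } = chatTool;
  return { type, ...fn };
}

const chatTool = {
  type: "function",
  function: {
    name: "get_order_status",
    description: "Returns the current shipping status for a customer's order.",
    parameters: {
      type: "object",
      properties: { order_id: { type: "string" } },
      required: ["order_id"],
      additionalProperties: false
    },
    strict: true
  }
};

console.log(toResponsesTool(chatTool));
```

Keeping one source definition and converting at the call site avoids maintaining two copies of every schema if a codebase uses both APIs.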

Step 2: Send the request with the user’s message

You call the Chat Completions (or Responses) API with the user’s message and your tools list.

```javascript
import OpenAI from "openai";

const openai = new OpenAI();

const messages = [
  { role: "user", content: "Where is my order ORD-12345?" }
];

const response = await openai.chat.completions.create({
  model: "gpt-5-nano",
  messages,
  tools
});
```

Step 3: The model returns a tool call (not an answer)

This is the part that surprises most developers the first time. The model doesn’t reply with text. Instead, it returns a structured tool call.

The exact shape depends on which API you use. With Chat Completions (the request in Step 2), the assistant message has an array of tool_calls, each with an id (used later to submit the function result) and a function containing a name and JSON-encoded arguments. With the Responses API (used in the handler below), each call is a function_call item in the response’s output array.

Here’s what a Responses API function_call item looks like:

```json
{
  "type": "function_call",
  "call_id": "call_abc123",
  "name": "get_order_status",
  "arguments": "{\"order_id\":\"ORD-12345\"}"
}
```

Two things to notice:

- The arguments field is a JSON string, not an object. You’ll need to parse it.

- Keep the call_id (the id field in Chat Completions). You’ll need it in Step 5 to pair the result back to the right call.
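Because Chat Completions and the Responses API return tool calls in different shapes, it can help to normalize them before dispatching. The following is a sketch under that assumption; `extractToolCalls` is a hypothetical helper, and the sample object is hand-written rather than captured from the API.

```javascript
// Hypothetical helper: returns [{ id, name, args }] for either API shape.
function extractToolCalls(response) {
  let rawCalls = [];

  if (Array.isArray(response.output)) {
    // Responses API: function calls are items in `output`.
    rawCalls = response.output
      .filter((item) => item.type === "function_call")
      .map((item) => ({ id: item.call_id, name: item.name, rawArgs: item.arguments }));
  } else if (Array.isArray(response.choices)) {
    // Chat Completions: function calls live on the assistant message.
    const toolCalls = response.choices[0]?.message?.tool_calls ?? [];
    rawCalls = toolCalls
      .filter((call) => call.type === "function")
      .map((call) => ({
        id: call.id,
        name: call.function.name,
        rawArgs: call.function.arguments
      }));
  }

  return rawCalls.map(({ id, name, rawArgs }) => {
    try {
      // `arguments` arrives as a JSON string, so it has to be parsed.
      return { id, name, args: JSON.parse(rawArgs || "{}") };
    } catch {
      // Keep the call so the caller can report the parse error back to the model.
      return { id, name, args: null, parseError: true };
    }
  });
}

const responsesShape = {
  output: [
    {
      type: "function_call",
      call_id: "call_abc123",
      name: "get_order_status",
      arguments: '{"order_id":"ORD-12345"}'
    }
  ]
};

console.log(extractToolCalls(responsesShape));
```

Returning an array in every case, including the empty one, also means the calling code never has to special-case a single tool call.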

Once you have the tool call, your code takes over for Steps 4 and 5. Here’s the full handler that does both:

```javascript
import OpenAI from "openai";

const client = new OpenAI();

// =========================
// Backend Functions
// =========================
async function getOrderStatus({ order_id }) {
  return {
    order_id,
    status: "Out for delivery",
    estimated_delivery: "Tomorrow"
  };
}

async function freezeCard({ card_id }) {
  return {
    card_id,
    status: "Frozen"
  };
}

// =========================
// Function Registry
// =========================
const functionMap = {
  get_order_status: getOrderStatus,
  freeze_card: freezeCard
};

// =========================
// Handle Function Calls
// =========================
async function handleFunctionCalls(response, client) {
  // Find all function calls returned by OpenAI
  const toolCalls = response.output.filter(
    item => item.type === "function_call"
  );

  // No function calls → exit early
  if (!toolCalls.length) {
    console.log("No function calls found.");
    return;
  }

  // Execute all functions dynamically
  const toolOutputs = await Promise.all(
    toolCalls.map(async (toolCall) => {
      const functionName = toolCall.name;

      // Validate function existence
      const selectedFunction = functionMap[functionName];
      if (!selectedFunction) {
        throw new Error(`Unknown function: ${functionName}`);
      }

      // Parse arguments safely
      let args = {};
      try {
        args = JSON.parse(toolCall.arguments || "{}");
      } catch (err) {
        throw new Error(
          `Invalid JSON arguments for function: ${functionName}`
        );
      }

      // Execute backend function
      const result = await selectedFunction(args);

      // Return tool output for OpenAI
      return {
        type: "function_call_output",
        call_id: toolCall.call_id,
        output: JSON.stringify(result)
      };
    })
  );

  // Send function results back to OpenAI
  const finalResponse = await client.responses.create({
    model: "gpt-5-nano",
    previous_response_id: response.id,
    input: toolOutputs
  });

  // Final assistant response
  console.log(finalResponse.output_text);
  return finalResponse;
}

// `tools` is the array from Step 1. The Responses API expects flat tool
// definitions, so unwrap the nested `function` object before sending.
const responsesTools = tools.map(({ type, function: fn }) => ({ type, ...fn }));

const response = await client.responses.create({
  model: "gpt-5-nano",
  input: "Where is my order ORD-12345?",
  tools: responsesTools // without tools, the model has nothing to call
});

// Handle tool execution loop
await handleFunctionCalls(response, client);
```

That single handler covers everything left in the loop. Let’s break down what each part is doing.

Step 4: Execute the function in your code

This happens inside the Promise.all block. Three things are going on:

- Function lookup. The functionMap object maps each tool name to its real backend implementation. When OpenAI returns name: “get_order_status”, your code looks up functionMap[“get_order_status”] and gets the actual JavaScript function. This is what keeps the model decoupled from your backend — you can rename, refactor, or swap the implementation without touching the model.

- Argument parsing. JSON.parse(toolCall.arguments) converts the JSON string the model returned into a real object you can pass to your function. Wrapping it in a try/catch is important even with strict: true, because edge cases (truncated responses, parse errors) still happen in production.

- Execution. await selectedFunction(args) is where your real backend runs — a database query, an internal API call, a third-party service. The model never sees this code path.

This is also where the real-world impact lives. OpenAI’s guidance is to be aware of the real-world impact of the function calls you plan to execute, especially those that trigger actions such as executing code, updating databases, or sending notifications. For functions that take actions, it recommends including a step where the user confirms the action before execution.
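One way to add that confirmation step is to mark state-changing tools in the registry and refuse to run them until the user has approved the specific call. This is a minimal sketch, not a prescribed pattern: `toolRegistry`, `needsConfirmation`, and `executeWithConfirmation` are hypothetical names, and how you collect the confirmation (a button, a follow-up message) is up to your application.

```javascript
// Hypothetical registry: each tool declares whether it changes state.
const toolRegistry = {
  get_order_status: {
    needsConfirmation: false,
    run: ({ order_id }) => ({ order_id, status: "Out for delivery" })
  },
  freeze_card: {
    needsConfirmation: true,
    run: ({ card_id }) => ({ card_id, status: "Frozen" })
  }
};

// Runs a tool only if it is read-only or the user has confirmed this exact call.
function executeWithConfirmation(name, args, confirmedCallIds, callId) {
  const tool = toolRegistry[name];
  if (!tool) {
    return { error: `Unknown function: ${name}` };
  }
  if (tool.needsConfirmation && !confirmedCallIds.has(callId)) {
    // Nothing is executed here; the application should ask the user first.
    return { status: "confirmation_required", function: name, arguments: args };
  }
  return tool.run(args);
}

const confirmed = new Set();

// First attempt: the card is not frozen yet, the caller is told to confirm.
console.log(executeWithConfirmation("freeze_card", { card_id: "CARD-1" }, confirmed, "call_1"));

// After the user approves, the same call ID is allowed through.
confirmed.add("call_1");
console.log(executeWithConfirmation("freeze_card", { card_id: "CARD-1" }, confirmed, "call_1"));
```

Keying the approval to the call ID rather than the function name means a confirmation for one request cannot be reused for a different one.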

Step 5: Send the result back to the model

This is the second half of the same handler. Each function output is wrapped in a function_call_output object that carries two things:

```javascript
{
  type: "function_call_output",
  call_id: toolCall.call_id,     // ← pairs result to the original call
  output: JSON.stringify(result) // ← stringified function result
}
```

The call_id is critical. It’s what tells the model which of its requested calls this result belongs to. With one call it doesn’t matter much; with parallel calls (covered later), without the IDs the model can’t pair results to requests and you’ll get garbled or hallucinated final responses.

Then comes the second API call:

```javascript
const finalResponse = await client.responses.create({
  model: "gpt-5-nano",
  previous_response_id: response.id, // ← reuses the conversation state
  input: toolOutputs
});
```

Two things worth flagging here:

- previous_response_id vs. messages array. This example uses the Responses API, where conversation state is stored server-side and you just pass the previous response’s ID. If you’re using the older Chat Completions API, you’d instead append the assistant’s tool call message and a role: "tool" message with the result to your messages array, then call chat.completions.create again.

- tools not passed in the second call. This call only needs to turn the function output into a reply, so it omits the tools list. If the model may need to call another function at this point, pass tools again on this request rather than assuming the earlier definitions carry over. With Chat Completions, you pass the tools list on every call.

The model now has both the original question and the real data, so it can write a natural, accurate response — something like “Your order ORD-12345 is out for delivery and should arrive tomorrow.” That’s finalResponse.output_text.

This is the step where the system becomes complete.

The lifecycle now becomes:

- User Question

- OpenAI selects a function

- Backend executes the logic

- Function result sent back

- AI generates final response
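The lifecycle above is a loop: it repeats until the model stops asking for tools. The sketch below shows only that control flow. `runToolLoop` is a hypothetical helper, `callModel` stands in for the real OpenAI request, and the history entries use a simplified shape rather than the API’s wire format.

```javascript
// Hypothetical loop: `callModel` stands in for the OpenAI request and must return
// { text, toolCalls: [{ id, name, args }] }. `tools` maps names to async functions.
async function runToolLoop(callModel, tools, userMessage, maxTurns = 5) {
  const history = [{ role: "user", content: userMessage }];

  for (let turn = 0; turn < maxTurns; turn++) {
    const reply = await callModel(history);

    // No tool calls means the model produced the final answer.
    if (!reply.toolCalls || reply.toolCalls.length === 0) {
      return reply.text;
    }
    history.push({ role: "assistant", toolCalls: reply.toolCalls });

    // Execute every requested call and pair each result with its call ID.
    const results = await Promise.all(
      reply.toolCalls.map(async ({ id, name, args }) => {
        const fn = tools[name];
        const output = fn ? await fn(args) : { error: `Unknown function: ${name}` };
        return { role: "tool", callId: id, content: JSON.stringify(output) };
      })
    );
    history.push(...results);
  }

  // A turn limit keeps a confused model from looping forever.
  throw new Error(`No final answer after ${maxTurns} turns`);
}

// A scripted stand-in for the model, used only to exercise the loop.
async function fakeModel(history) {
  const last = history[history.length - 1];
  if (last.role === "tool") {
    const { status } = JSON.parse(last.content);
    return { text: `Your order is ${status.toLowerCase()}.`, toolCalls: [] };
  }
  return {
    text: null,
    toolCalls: [{ id: "call_1", name: "get_order_status", args: { order_id: "ORD-12345" } }]
  };
}

const demoTools = {
  get_order_status: async ({ order_id }) => ({ order_id, status: "Out for delivery" })
};

runToolLoop(fakeModel, demoTools, "Where is my order ORD-12345?").then(console.log);
// prints "Your order is out for delivery."
```

Injecting `callModel` also makes the loop testable without network access, since a scripted function can play the model’s part.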

We’ve also created a full workflow that you can refer to for more information.

Structured outputs and strict mode

If you’ve used function calling for any length of time, you’ve probably hit this frustration: the model mostly returns valid JSON that matches your schema, but sometimes, it doesn’t. Maybe it adds an extra field. Maybe it drops a required one. Maybe it returns a string where you asked for a number. In production, “mostly” isn’t good enough.

This is the problem Structured Outputs solves.

OpenAI introduced Structured Outputs as a new API feature designed to ensure model-generated outputs will exactly match JSON Schemas provided by developers. It’s a hard guarantee, not a best effort.

The difference is dramatic. On OpenAI’s own evaluations of complex JSON schema following, gpt-4o-2024-08-06 with Structured Outputs scores a perfect 100%. In comparison, gpt-4-0613 scores less than 40%.

That’s the gap between “the model usually does what you asked” and “the model is guaranteed to do what you asked.” For anything you’re shipping to customers, that gap matters.

How to enable it for function calling?

You enable Structured Outputs on a function definition by adding strict: true:

```javascript
const tools = [
  {
    type: "function",
    function: {
      name: "get_order_status",
      description: "Returns the current shipping status for a customer's order.",
      parameters: {
        type: "object",
        properties: {
          order_id: { type: "string" }
        },
        required: ["order_id"],
        additionalProperties: false
      },
      strict: true // ← this is the whole feature
    }
  }
];
```

That single flag changes the model’s behavior. When Structured Outputs are enabled, model outputs will match the supplied tool definition. The model is constrained at the token-generation level.

JSON mode vs Structured Outputs

You may have used JSON mode (response_format: { type: “json_object” }) before. It’s not the same:

| Feature | JSON Mode | Structured Outputs (strict: true) |
| --- | --- | --- |
| Guarantees valid JSON? | Yes | Yes |
| Guarantees schema adherence? | No | Yes |
| Required fields enforced? | No | Yes |
| Field types enforced? | No | Yes |
| Recommended for production? | No (Legacy) | Yes |

Microsoft’s documentation puts it cleanly: structured outputs make a model follow a JSON Schema definition that you provide as part of your inference API call. This is in contrast to the older JSON mode feature, which guaranteed valid JSON would be generated, but was unable to ensure strict adherence to the supplied schema.

If you’re starting new code today, use Structured Outputs. JSON mode is essentially a legacy feature kept around for backward compatibility.

The rules you have to follow for Structured Outputs

strict: true isn’t free — there are a few constraints OpenAI enforces in exchange for the guarantee:

1. Every field must be in required.

This trips developers up constantly. With strict mode, you can’t have optional fields. If a property is in properties, it must also appear in required.

If you genuinely need a field to be optional, the workaround is to allow null as a type:

```javascript
properties: {
  tracking_number: {
    type: ["string", "null"] // explicitly nullable
  }
}
```

2. additionalProperties: false is required.

You have to explicitly tell the schema that no extra properties are allowed. This is what prevents the model from inventing fields.

3. Not all JSON Schema features are supported.

OpenAI notes that while Structured Outputs supports much of JSON Schema, some features are unavailable either for performance or technical reasons.

Depending on the model and API version, keywords such as pattern, format, minLength, maxLength, minimum, and maximum may be unsupported or not enforced. If you rely on those constraints, validate them in your code after the model returns.

4. Strict mode doesn’t combine with parallel function calling.

This is the catch most developers don’t notice until production. Structured Outputs are not supported with parallel function calls, so when you need the schema guarantee you have to set parallel_tool_calls to false yourself.

So you get reliability or parallelism, not both, at least within a single request. We’ll cover when that trade-off matters in the next section.
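Rules 1 and 2 are easy to check before a request ever goes out. The function below is a sketch of such a pre-flight check; `findStrictViolations` is a hypothetical helper, it only walks nested objects and array items, and it does not attempt to cover every JSON Schema keyword.

```javascript
// Hypothetical pre-flight check for rules 1 and 2 above.
// Returns a list of human-readable problems; an empty list means none were found.
function findStrictViolations(schema, path = "parameters") {
  const problems = [];
  const types = Array.isArray(schema.type) ? schema.type : [schema.type];

  if (types.includes("object")) {
    const properties = schema.properties || {};
    const required = new Set(schema.required || []);

    // Rule 2: extra properties must be explicitly forbidden.
    if (schema.additionalProperties !== false) {
      problems.push(`${path}: additionalProperties must be false`);
    }
    for (const [key, child] of Object.entries(properties)) {
      // Rule 1: every declared property must also be listed in required.
      if (!required.has(key)) {
        problems.push(`${path}.${key}: missing from required`);
      }
      problems.push(...findStrictViolations(child, `${path}.${key}`));
    }
  }

  if (types.includes("array") && schema.items) {
    problems.push(...findStrictViolations(schema.items, `${path}[]`));
  }

  return problems;
}

const draftSchema = {
  type: "object",
  properties: {
    order_id: { type: "string" },
    tracking_number: { type: ["string", "null"] }
  },
  required: ["order_id"]
};

console.log(findStrictViolations(draftSchema));
// → [ 'parameters: additionalProperties must be false',
//     'parameters.tracking_number: missing from required' ]
```

Running a check like this in a unit test catches schema mistakes at build time instead of as a rejected API request.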

Why should you turn Structured Outputs on by default?

For customer support use cases, the calculus is simple:

- A customer asks: “Where is my order?”

- The model needs to call get_order_status({ order_id: “…” })

- Without strict mode, there’s a small but non-zero chance the model returns { orderId: “…” } (wrong key), or { order_id: 12345 } (wrong type), or { order_id: “…”, customer_email: “…” } (extra field your function doesn’t expect).

- With strict mode, none of those failure modes can happen.

In a demo, you’d never notice. In a system handling 10,000 conversations a day, you’d catch the failures in your error logs and spend a week trying to figure out why your bot occasionally crashes.

OpenAI’s own recommendation, and a common one across developer guides, is to enable strict mode unless you have a specific reason not to. The most common reason to leave it off is if you need parallel function calling.
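Strict mode covers the structure of the arguments, so format and range rules still belong in your own code. A minimal sketch, assuming order IDs follow the ORD-12345 style used in this article; the exact pattern and the `validateOrderArgs` name are illustrative.

```javascript
// Assumed format: "ORD-" followed by digits, as in the examples in this article.
const ORDER_ID_PATTERN = /^ORD-\d+$/;

// Checks the parsed arguments before they reach the backend.
function validateOrderArgs(args) {
  const errors = [];
  if (typeof args.order_id !== "string") {
    errors.push("order_id must be a string");
  } else if (!ORDER_ID_PATTERN.test(args.order_id)) {
    errors.push(`order_id "${args.order_id}" does not look like ORD-12345`);
  }
  return { valid: errors.length === 0, errors };
}

console.log(validateOrderArgs({ order_id: "ORD-12345" })); // valid
console.log(validateOrderArgs({ order_id: "12345" }));     // invalid: wrong format
```

When validation fails, returning the errors as the function output (instead of throwing) gives the model a chance to ask the customer for a corrected ID.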

Defining JSON Schemas with Pydantic (Python) or Zod (TypeScript)

A small but useful detail: instead of writing JSON schemas by hand, OpenAI’s SDKs let you define them as Pydantic or Zod objects, which they convert automatically. This is the recommended pattern.

```python
import openai
from openai import OpenAI
from pydantic import BaseModel

client = OpenAI()


class GetOrderStatus(BaseModel):
    order_id: str


# pydantic_function_tool lives on the openai module, so the module itself is imported above
tools = [openai.pydantic_function_tool(GetOrderStatus)]
```

The benefit: your TypeScript/Python types and your function schema can never drift out of sync. If you change the type, the schema changes with it. OpenAI strongly recommends this approach: “to prevent your JSON Schema and corresponding types in your programming language from diverging, we strongly recommend using the native Pydantic/zod sdk support.”

One more thing worth knowing. When strict mode is on, the model may still refuse to comply with a request.

OpenAI added a separate refusal field on the API response so you can detect this programmatically. When the response does not include a refusal and the model’s response has not been prematurely interrupted (as indicated by finish_reason), then the model’s response will reliably produce valid JSON matching the supplied schema.

So in production, your error handling should check three things in this order:

- Did the model refuse? (handle gracefully and show a fallback message)

- Was the response cut off? (retry or extend max_tokens)

- Otherwise, you’re guaranteed valid, schema-conformant output.
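Those three checks can be expressed as one small function. The sketch assumes a Chat Completions choice object, where a refusal appears as message.refusal and a cut-off response has finish_reason "length"; `classifyChoice` is a hypothetical helper name.

```javascript
// Works on a Chat Completions `choice` object: { finish_reason, message }.
// Checks are ordered as in the list above: refusal, then truncation, then success.
function classifyChoice(choice) {
  if (choice.message && choice.message.refusal) {
    return { kind: "refusal", detail: choice.message.refusal };
  }
  if (choice.finish_reason === "length") {
    return { kind: "truncated", detail: "Output hit the token limit; retry with a higher limit." };
  }
  return { kind: "ok", detail: null };
}

const sampleChoice = {
  finish_reason: "tool_calls",
  message: { role: "assistant", refusal: null, tool_calls: [] }
};

console.log(classifyChoice(sampleChoice).kind);
```

Only the "ok" branch should go on to parse arguments; the other two branches need their own fallback path.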

That’s the practical contract Structured Outputs gives you. However, you can’t rely on Structured Outputs while parallel function calling is enabled.

Let’s see when parallel function calling might be important.

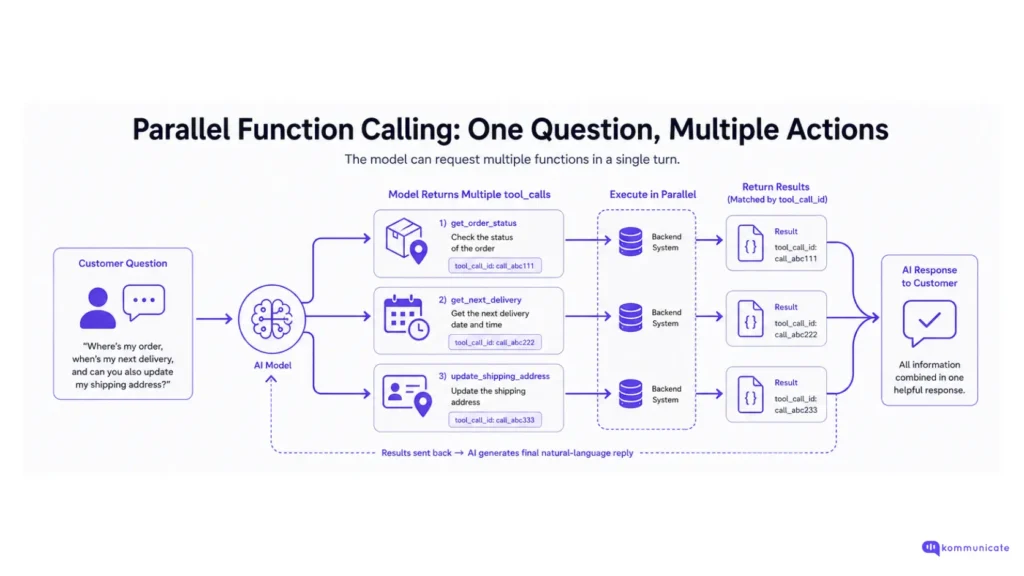

Parallel function calling

Up to this point, we’ve described function calling as if the model only ever picks one tool per turn. That’s the simple case. But real customer questions are often bundled. A customer doesn’t ask one thing, they ask three:

“Where’s my order, when’s my next delivery, and can you also update my shipping address?”

Without parallel calls, the model has to pick one of those, run it, send the result back, then repeat. Three round-trips, three latency hits. With parallel calls, the model can request all three at once.

What “parallel” actually means

When parallel function calling is on, the model can return multiple tool_call entries inside a single response. Your application then executes all of them — ideally in parallel on your end too — and sends every result back in the next turn.

OpenAI describes it directly: the model may choose to call multiple functions in a single turn. You can prevent this by setting parallel_tool_calls to false, which ensures exactly zero or one tool is called.

Note the default. On most modern models (gpt-4o, gpt-4.1, gpt-4.5), parallel_tool_calls defaults to true. When parallel_tool_calls is false, the model will only request one tool call at a time instead of potentially multiple calls in parallel.

So you don’t have to enable it: you have to decide whether you want to disable it.

What does a parallel response look like?

Here’s what comes back when a customer asks the bundled question above:

```json
{
  "role": "assistant",
  "tool_calls": [
    {
      "id": "call_abc111",
      "type": "function",
      "function": {
        "name": "get_order_status",
        "arguments": "{\"order_id\":\"ORD-12345\"}"
      }
    },
    {
      "id": "call_abc222",
      "type": "function",
      "function": {
        "name": "get_next_delivery",
        "arguments": "{\"customer_id\":\"CUS-998\"}"
      }
    },
    {
      "id": "call_abc333",
      "type": "function",
      "function": {
        "name": "update_shipping_address",
        "arguments": "{\"customer_id\":\"CUS-998\",\"address\":\"...\"}"
      }
    }
  ]
}
```

Three calls, three IDs. Your job now is to execute all three and return three matching tool messages, each tagged with the right tool_call_id.

Handling them in code

Here’s the pattern. Use Promise.all (or your language’s equivalent) to execute the calls in parallel, then push every result back as a separate role: “tool” message:

```javascript
const toolCalls = response.choices[0].message.tool_calls;

// Run them in parallel
const results = await Promise.all(
  toolCalls.map(async (call) => {
    const args = JSON.parse(call.function.arguments);
    const result = await dispatchFunction(call.function.name, args);
    return { call_id: call.id, result };
  })
);

messages.push(response.choices[0].message);

for (const { call_id, result } of results) {
  messages.push({
    role: "tool",
    tool_call_id: call_id,
    content: JSON.stringify(result)
  });
}

// Now ask the model for the final natural-language reply
const final = await openai.chat.completions.create({
  model: "gpt-5-mini",
  messages,
  tools
});
```

The matching by tool_call_id is critical. Without it, the model has no way to know which result corresponds to which request, and you’ll get garbled or hallucinated final responses.
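The snippet above calls dispatchFunction without defining it. One possible shape is sketched here; the `handlers` map and its return values are hypothetical stand-ins for your backend. The design choice worth noting is that it returns errors as data instead of throwing, so one failed call cannot reject Promise.all and leave the other tool_call_ids without a matching tool message.

```javascript
// Hypothetical backend functions keyed by tool name.
const handlers = {
  get_order_status: async ({ order_id }) => ({ order_id, status: "Out for delivery" }),
  get_next_delivery: async ({ customer_id }) => ({ customer_id, next_delivery: "Friday" }),
  update_shipping_address: async () => {
    throw new Error("address service unavailable");
  }
};

// Never throws: failures become data that can be sent back to the model.
async function dispatchFunction(name, args) {
  const handler = handlers[name];
  if (!handler) {
    return { error: `Unknown function: ${name}` };
  }
  try {
    return await handler(args);
  } catch (err) {
    return { error: `${name} failed: ${err.message}` };
  }
}

// All three settle, even though one backend call fails.
Promise.all([
  dispatchFunction("get_order_status", { order_id: "ORD-12345" }),
  dispatchFunction("get_next_delivery", { customer_id: "CUS-998" }),
  dispatchFunction("update_shipping_address", { customer_id: "CUS-998", address: "..." })
]).then((results) => console.log(results));
```

With the error returned as a normal result, the model can tell the customer that two of the three requests succeeded and one needs a retry.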

When should you use parallel calling?

Parallel calling is most useful when:

- The customer’s question genuinely requires data from multiple sources (get_order + get_invoice + get_return_policy)

- The calls are independent of each other (the result of one doesn’t determine whether you should make another)

- You care about latency — three sequential round trips can take 6–9 seconds, while three parallel calls take roughly the time of the slowest one

E-commerce dashboards, multi-account banking views, and “summarize my last week” queries all benefit heavily from this.

When not to use parallel function calling

There are three scenarios where you should set parallel_tool_calls: false:

1. When calls have ordering dependencies.

If function B needs the result of function A (for example, you have to look up a customer_id before you can fetch their orders), parallel execution will misfire. The model sometimes still tries to parallelize calls that should be sequential.

2. When you need strict mode (Structured Outputs).

This is the trade-off we flagged in the previous section. Currently, if you are using a fine-tuned model and the model calls multiple functions in one turn, then strict mode will be disabled for those calls.

More broadly, OpenAI has documented that strict schema guarantees aren’t honored across parallel calls, so if reliability matters more than parallelism (it usually does in customer support), turn parallel off.

3. For destructive or side-effect-heavy actions.

If a function call freezes a card, cancels a subscription, or refunds a payment, you don’t want the model deciding to fire three of those at once because the customer’s question was ambiguous. Either turn off parallel mode for those tools or gate them behind a confirmation step before execution.
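One way to apply the third rule automatically is to derive the parallel_tool_calls setting from the tools you are about to send. This is a sketch under the assumption that you maintain your own list of state-changing tool names; `SIDE_EFFECT_TOOLS` and `parallelOptionsFor` are hypothetical.

```javascript
// Hypothetical list of tools that change state and should never be batched.
const SIDE_EFFECT_TOOLS = new Set(["freeze_card", "cancel_subscription", "refund_payment"]);

// Returns request options: parallel calls stay on only for read-only tool sets.
function parallelOptionsFor(tools) {
  const hasSideEffects = tools.some((tool) => {
    const name = tool.name ?? tool.function?.name; // flat or nested tool shape
    return SIDE_EFFECT_TOOLS.has(name);
  });
  return { parallel_tool_calls: !hasSideEffects };
}

const readOnlyTools = [
  { type: "function", function: { name: "get_order_status" } },
  { type: "function", function: { name: "get_invoice" } }
];
const mixedTools = [...readOnlyTools, { type: "function", function: { name: "freeze_card" } }];

console.log(parallelOptionsFor(readOnlyTools)); // { parallel_tool_calls: true }
console.log(parallelOptionsFor(mixedTools));    // { parallel_tool_calls: false }
```

The returned object can be spread into the request options, so the decision lives in one place rather than at every call site.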

Disabling parallel calls

Disabling is one parameter:

```javascript
const response = await openai.chat.completions.create({
  model: "gpt-5-nano",
  messages,
  tools,
  parallel_tool_calls: false // ← model returns at most one call per turn
});
```

A note on model support: not every model accepts this parameter. Some reasoning models, such as o3, have been reported to fail when parallel_tool_calls is passed, rejecting it as an unsupported parameter.

Reasoning models (o-series) handle multi-step tool use differently: they chain calls through their internal reasoning loop rather than emitting them all at once. If you’re using a reasoning model and pass parallel_tool_calls, check the model’s docs first.

A note for production

Best practice from OpenAI’s own guide: since model responses can include zero, one, or multiple calls, it is best practice to assume there are several. The response has an array of tool_calls, each with an id (used later to submit the function result) and a function containing a name and JSON-encoded arguments.

In other words, even if you’ve never seen a parallel call in testing, write your handler as a loop, not a single tool_calls[0] access. The day a customer asks a compound question is the day a single-element handler crashes.

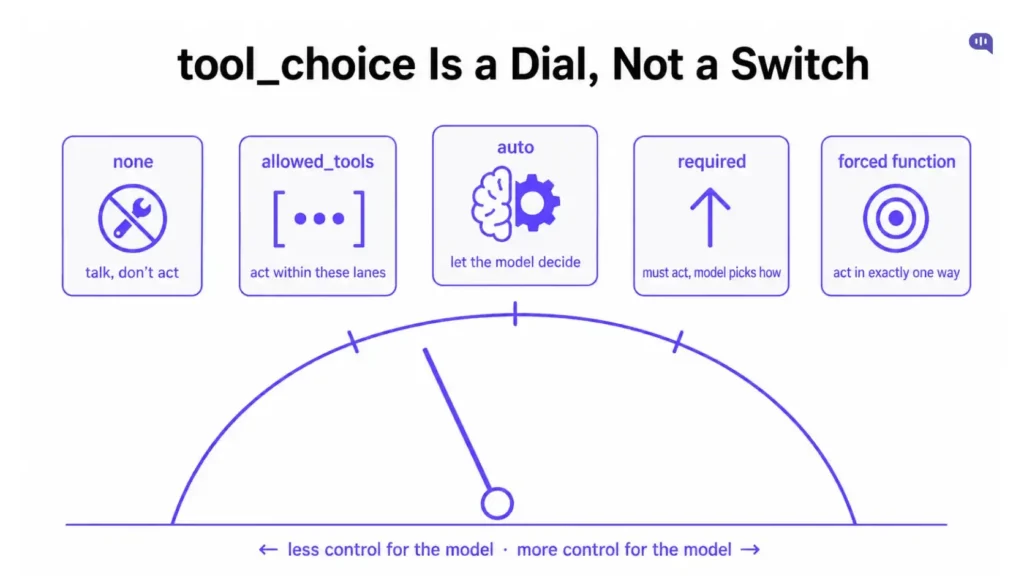

The tool_choice parameter

By default, the model decides whether to call a tool, which one, and how many. That’s good behavior for most cases, but sometimes you need to take the wheel. Maybe you want to guarantee a function call. Maybe you want to prevent one. Maybe you want the model to pick from a narrow subset of your tools.

That’s what tool_choice is for. It’s the second half of how function calling actually works in production, the first being your function definitions, and this being your control over when they fire.

The four modes for tool_choice

OpenAI’s documentation lists four behaviors:

- Auto: (Default) Call zero, one, or multiple functions. tool_choice: “auto”.

- Required: Call one or more functions. tool_choice: “required”.

- Forced Function: Call exactly one specific function. tool_choice: {“type”: “function”, “name”: “get_weather”}.

- Allowed tools: Restrict the tool calls the model can make to a subset of the tools available to the model.

You can also turn function calling off entirely by setting tool_choice to “none”, which imitates the behavior of passing no functions.

So in practice, you’re choosing between five behaviors:

| Value | What it does | When to use it |
| --- | --- | --- |
| "auto" (default) | Model decides whether to call a tool, which one, and how many | General-purpose conversations where some queries need tools and others don’t |
| "required" | Model must call at least one tool; it cannot return plain text | Workflows where every turn must produce a structured action |
| { type: "function", name: "…" } | Forces the model to call a specific function | Single-purpose endpoints (e.g., entity extraction, classification pipelines) |
| { type: "allowed_tools", … } | Restricts the model to a predefined subset of tools | Multi-tenant systems where capabilities differ by user or role |
| "none" | Disables all tool calls | Chat-only fallback, summarization, or when tool execution is not desired |

A practical mental model

Think of tool_choice as a dial, not a switch.

- On one end is none: the model can talk but not act.

- On the other is a forced function: the model can only act, in one specific way.

- In between sits auto (let it decide), required (it must act, but choose how), and allowed_tools (it can act, but only within these lanes).

Most production systems start with auto and dial in tighter constraints only where they need to. That’s the right default.

You’re building a system that needs to handle a huge variety of customer messages, and the model’s judgment about when to call tools is usually pretty good, especially with well-written function descriptions. Reach for the other modes when judgment isn’t enough and you need a hard guarantee.
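To make the dial concrete, the helper below builds a tool_choice value for the string modes and the forced-function mode. It is a sketch: `buildToolChoice` is a hypothetical name, and the allowed-tools mode is left out on purpose, so check OpenAI’s documentation for its exact shape. Note that the two APIs spell a forced function slightly differently.

```javascript
// Builds a tool_choice value. `api` is "responses" or "chat"; the two APIs
// name a forced function slightly differently.
function buildToolChoice(mode, { api = "responses", functionName } = {}) {
  switch (mode) {
    case "auto":
    case "required":
    case "none":
      // The string modes are passed through unchanged.
      return mode;
    case "forced":
      if (!functionName) {
        throw new Error("forced mode needs a functionName");
      }
      return api === "chat"
        ? { type: "function", function: { name: functionName } }
        : { type: "function", name: functionName };
    default:
      throw new Error(`Unsupported tool_choice mode: ${mode}`);
  }
}

console.log(buildToolChoice("auto"));
console.log(buildToolChoice("forced", { functionName: "get_order_status" }));
console.log(buildToolChoice("forced", { api: "chat", functionName: "get_order_status" }));
```

Throwing on an unknown mode is deliberate: a typo in tool_choice should fail in your code, not surface as an API error in production.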

Next, we’ll talk about some of the best practices and common pitfalls that come with function calling.

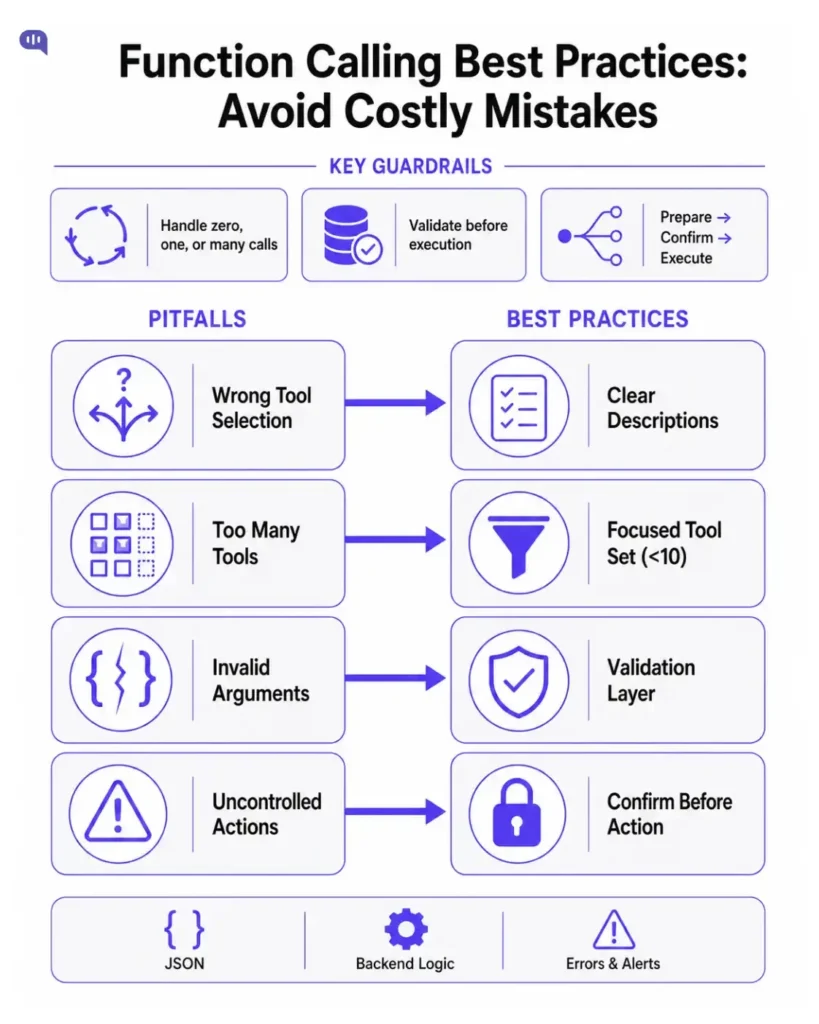

Best practices and common pitfalls

You can read the OpenAI docs end-to-end and still ship a function-calling system that misbehaves in production. The schema is right, the code compiles, the demo works — and then a real customer asks something slightly weird and the bot calls the wrong function. Or fires three calls instead of one. Or hallucinates an argument.

Most of these failures aren’t bugs in the API. They’re predictable mistakes that come from skipping a few rules of thumb. Here are the ones that matter most.

1. Write function descriptions like documentation, not labels

This is the single highest-leverage change you can make. The model decides which function to call based almost entirely on the description field, and a vague description means a guessing model.

Bad:

{

name: "get_order_status",

description: "Gets order status."

}

Good:

{

name: "get_order_status",

description: "Returns the current shipping status, carrier, and estimated delivery date for a customer's order. Use this when a customer asks where their order is, when it will arrive, whether it has shipped, or about delivery delays. Do NOT use this for refund or return inquiries."

}

Notice the second one tells the model when to call it and when not to. The same applies to parameter descriptions. order_id: { type: "string", description: "The customer's order ID, e.g. 'ORD-12345'" } is the difference between the model passing “12345” (wrong) and “ORD-12345” (right).
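Put together, a complete definition might look like the sketch below. It assumes the Chat Completions tool format (the same API the handler code later in this article reads from), and the single order_id parameter is just the example from above, not a required design.

```javascript
// Sketch of a full tool definition: documentation-style description,
// a described parameter, and strict mode turned on.
const getOrderStatusTool = {
  type: "function",
  function: {
    name: "get_order_status",
    description:
      "Returns the current shipping status, carrier, and estimated delivery " +
      "date for a customer's order. Use this when a customer asks where their " +
      "order is, when it will arrive, whether it has shipped, or about delivery " +
      "delays. Do NOT use this for refund or return inquiries.",
    strict: true, // ask for arguments that match the schema below
    parameters: {
      type: "object",
      properties: {
        order_id: {
          type: "string",
          description: "The customer's order ID, e.g. 'ORD-12345'",
        },
      },
      // Strict mode expects every property to be listed as required
      // and additionalProperties to be false.
      required: ["order_id"],
      additionalProperties: false,
    },
  },
};
```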

2. Keep your tool list short and focused

There’s no hard cap on how many tools you can define, but the more you give the model, the more likely it is to confuse them. A developer building an Assistant with around twenty custom function calls hit exactly this problem: they had somewhat complex logic for deciding which functions to call and roughly 20 custom functions registered as tools, and even with some of that logic passed into the run instructions, the model still sometimes didn’t follow it.

Practical guidance:

- Aim for under 10 tools per request if accuracy matters more than coverage.

- If you have more, group them by use case and only pass the relevant subset per request.

- For very large catalogs, consider OpenAI’s tool search feature (gpt-5.4+), which defers tool definitions until the model decides it needs them.

Every tool description is part of your prompt and consumes input tokens — so a bloated tool list is also a slow, expensive one.
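One way to pass only the relevant subset is to tag each tool with the use cases it serves and filter per request. This is a rough sketch: the catalog structure, the use-case labels, and the selectTools helper are all hypothetical, and the tool definitions are abbreviated to their names.

```javascript
// Hypothetical catalog: each entry tags a tool with the use cases it serves.
// Real entries would carry full descriptions and parameter schemas.
const toolCatalog = [
  { useCases: ["orders"], tool: { type: "function", function: { name: "get_order_status" } } },
  { useCases: ["orders", "refunds"], tool: { type: "function", function: { name: "prepare_refund" } } },
  { useCases: ["cards"], tool: { type: "function", function: { name: "freeze_card" } } },
];

// Return only the tools relevant to this request, capped at maxTools.
function selectTools(catalog, useCase, maxTools = 10) {
  return catalog
    .filter((entry) => entry.useCases.includes(useCase))
    .slice(0, maxTools)
    .map((entry) => entry.tool);
}
```

For example, selectTools(toolCatalog, "cards") would send only freeze_card, so the order and refund definitions never reach the prompt for that request.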

3. Set roles and rules in the system prompt

A common question is: “Do I need to describe my tools again in the system prompt if I’ve already declared them in tools?”

The answer is no: the model already sees the tool definitions. Repeating them wastes tokens. But the system prompt is the place to set boundaries on tool use. OpenAI’s o-series guide gives a clean example:

“Use tools when: the user wants to cancel or modify an order, return or exchange a delivered product, or update their address. Do not use tools when: the user asks a general question like ‘what’s your return policy?’, or asks something outside your retail role.”

That’s the right pattern: describe the role and when tool use is appropriate, not what each tool does. The descriptions handle the latter.
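In code, that pattern could look like the sketch below. The prompt wording is illustrative (adapted from the example above), and buildMessages is a hypothetical helper; the point is that the system message carries the role and the boundaries, while the tool definitions stay in the tools parameter.

```javascript
// Role and tool-use boundaries live in the system prompt.
// Tool descriptions are NOT repeated here.
const systemPrompt = [
  "You are a support agent for an online retail store.",
  "Use tools when the user wants to cancel or modify an order, return or exchange a delivered product, or update their address.",
  "Do not use tools when the user asks a general question such as the return policy, or asks something outside your retail role.",
].join("\n");

// Build the messages array for one user turn.
function buildMessages(userText) {
  return [
    { role: "system", content: systemPrompt },
    { role: "user", content: userText },
  ];
}
```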

4. Validate arguments before executing

strict: true guarantees the model returns valid JSON matching your schema. It does not guarantee the values are sensible.

A model can return { order_id: "ORD-99999999" } for an order that doesn’t exist. Or { refund_amount: 99999.00 } when the customer asked for a $20 refund. Schema validation catches malformed shape; you still need business validation.

A few things to validate before you execute:

- Existence: Does the order/customer/card actually exist?

- Authorization: Does the current customer own this resource?

- Bounds: Is the refund amount within the original transaction?

- Side-effect risk: Is this a destructive call that should require confirmation?

OpenAI itself flags this for reasoning models: validate arguments against the format before sending the call; if you are unsure, ask for clarification instead of guessing. Your backend should treat the model’s arguments as untrusted input, because that’s what they are.
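Here is a minimal sketch of the first three checks for a refund call. The in-memory orders map, the customer IDs, and the error codes are placeholders for your own data layer and conventions.

```javascript
// Hypothetical order store; in practice this would be a database lookup.
const orders = new Map([
  ["ORD-12345", { customerId: "cust_1", total: 59.99 }],
]);

// Treat the model's arguments as untrusted input and check them
// before any side effect happens.
function validateRefundArgs(args, currentCustomerId) {
  const order = orders.get(args.order_id);

  // Existence: does the order actually exist?
  if (!order) return { ok: false, error: "ORDER_NOT_FOUND" };

  // Authorization: does the current customer own it?
  if (order.customerId !== currentCustomerId) {
    return { ok: false, error: "NOT_AUTHORIZED" };
  }

  // Bounds: is the amount positive and within the original transaction?
  if (!(args.refund_amount > 0) || args.refund_amount > order.total) {
    return { ok: false, error: "AMOUNT_OUT_OF_BOUNDS" };
  }

  return { ok: true };
}
```

A failed check can be returned to the model as the tool result, so it can ask the customer to clarify instead of acting on a bad value.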

5. Add a human-in-the-loop step for destructive actions

For anything irreversible, don’t let the model trigger it directly. Add a user confirmation step: particularly for functions that take actions, include a step where the user confirms the action before execution.

A common pattern: split destructive actions into two functions.

- prepare_refund(order_id, amount) → returns a draft, no money moves

- execute_refund(refund_token) → only fires after the customer confirms

The model can call prepare_refund freely. execute_refund requires the customer to say “yes, do it.” This protects against ambiguous customer questions, prompt injection attacks, and the model occasionally being overly enthusiastic.
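A rough sketch of that split is below. It assumes an in-memory draft store and a confirmRefund step that your application (not the model) calls once the customer agrees; the names, the counter-based token, and the error codes are illustrative. A real system would use an unguessable token and persistent storage.

```javascript
// Hypothetical draft store for refunds awaiting confirmation.
const pendingRefunds = new Map();
let nextDraftId = 1;

// Safe for the model to call: creates a draft, no money moves.
function prepareRefund(orderId, amount) {
  const refundToken = `draft_${nextDraftId++}`;
  pendingRefunds.set(refundToken, { orderId, amount, confirmed: false });
  return { refund_token: refundToken, order_id: orderId, amount, status: "awaiting_confirmation" };
}

// Called by your application after the customer explicitly confirms.
function confirmRefund(refundToken) {
  const draft = pendingRefunds.get(refundToken);
  if (!draft) return false;
  draft.confirmed = true;
  return true;
}

// Refuses to run unless the draft exists and has been confirmed.
function executeRefund(refundToken) {
  const draft = pendingRefunds.get(refundToken);
  if (!draft) return { error: "UNKNOWN_REFUND_TOKEN" };
  if (!draft.confirmed) return { error: "CONFIRMATION_REQUIRED" };
  pendingRefunds.delete(refundToken); // a token can only be used once
  return { status: "refunded", order_id: draft.orderId, amount: draft.amount };
}
```

Because the confirmation flag is set outside the model's reach, a prompt-injected or over-eager execute_refund call gets an error back instead of moving money.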

6. Always handle the “zero, one, or many” case

A lot of bugs in production come from code that assumes tool_calls[0] will always exist, or that there will only ever be one call. Neither is safe.

Since model responses can include zero, one, or multiple calls, it is best practice to assume there are several. The response has an array of tool_calls, each with an id (used later to submit the function result) and a function containing a name and JSON-encoded arguments.

Write your handler as a loop from day one:

const toolCalls = response.choices[0].message.tool_calls || [];

if (toolCalls.length === 0) {

// Model replied with plain text -- handle the content field

return response.choices[0].message.content;

}

for (const call of toolCalls) {

// execute and append a tool message

}

7. Return errors as tool results, not exceptions

When a function fails, your instinct might be to throw an exception and bail. Don’t. Send the error back to the model as a tool result and let it explain to the customer.

const result = await fetchOrderFromDatabase(args.order_id)

.catch(err => ({ error: "ORDER_NOT_FOUND", message: err.message }));

messages.push({

role: "tool",

tool_call_id: call.id,

content: JSON.stringify(result)

});

The model will see the error and respond with something like “I couldn’t find an order with that ID, could you double-check it?” That’s a far better customer experience than an error page or a generic “something went wrong.”
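Rules 6 and 7 fit naturally into one handler. The sketch below assumes the Chat Completions message shape used above; the handlers registry, its fake order lookup, and the TOOL_CALL_FAILED code are hypothetical stand-ins for your own functions.

```javascript
// Hypothetical registry mapping tool names to local implementations.
const handlers = {
  get_order_status: async ({ order_id }) => {
    if (order_id !== "ORD-12345") throw new Error(`No order ${order_id}`);
    return { status: "shipped", carrier: "UPS" };
  },
};

// Turn an assistant message into tool messages: zero, one, or many.
async function runToolCalls(message) {
  const toolCalls = message.tool_calls || [];
  const toolMessages = [];

  for (const call of toolCalls) {
    let result;
    try {
      const handler = handlers[call.function.name];
      if (!handler) throw new Error(`Unknown tool: ${call.function.name}`);
      // Arguments arrive as a JSON-encoded string.
      result = await handler(JSON.parse(call.function.arguments));
    } catch (err) {
      // Failures become tool results so the model can explain them.
      result = { error: "TOOL_CALL_FAILED", message: err.message };
    }
    toolMessages.push({
      role: "tool",
      tool_call_id: call.id,
      content: JSON.stringify(result),
    });
  }

  return toolMessages;
}
```

An empty return value means the model answered in plain text. Otherwise, append the returned messages to the conversation and call the model again so it can write the reply.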

8. Watch the strict + parallel trade-off

We covered this in the structured outputs and parallel calling sections, but it’s worth restating because it’s the single most common production gotcha:

Strict mode and parallel function calls don’t fully play together. If reliability matters more than throughput (and in customer support, it usually does), set parallel_tool_calls: false.

The exception is when your tools are genuinely independent, and the cost of one occasionally returning a slightly off-schema response is acceptable. For most support workflows, it isn’t.
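As a small sketch, you can make that choice explicit where the request is built. The buildChatRequest helper and its reliabilityFirst flag are hypothetical, the model name is a placeholder, and the tools array is assumed to be non-empty.

```javascript
// Build a Chat Completions request body with the trade-off made explicit.
function buildChatRequest(messages, tools, { reliabilityFirst = true } = {}) {
  return {
    model: "gpt-4o", // placeholder; use whichever model you deploy
    messages,
    tools, // assumed non-empty, each defined with strict: true
    // Reliability first: one tool call per turn, so strict mode applies cleanly.
    parallel_tool_calls: !reliabilityFirst,
  };
}
```

Defaulting the flag to the reliable setting means a developer has to opt in to parallel calls deliberately, for example on a read-only endpoint.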

9. Treat tool definitions like API contracts

Once your function calling system is in production, your tool definitions are an API contract. Treat them that way:

- Version them. Don’t rename get_order_status to fetch_order_state casually. The model has to relearn the mapping, and any prompt-cached requests break.

- Don’t change parameter names without testing. Renaming order_id to orderId looks harmless. It isn’t.

- Generate schemas from types, not by hand. To keep your JSON Schema and the corresponding types in your programming language from diverging, OpenAI strongly recommends using the SDKs’ native Pydantic/Zod support.

- Test that additions don’t break old behavior. Adding a new tool can change which tool the model picks for an old prompt. Run your eval suite when you add tools.
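One lightweight way to enforce the contract is a check in CI that compares the previous tool definitions with the current ones and flags removed or renamed tools and parameters. This is a sketch under the assumption that tools use the Chat Completions format; findBreakingChanges is a hypothetical helper, and it does not replace running your evals.

```javascript
// Reduce a tool definition to the parts callers depend on.
function toolSignature(tool) {
  const fn = tool.function;
  const params = Object.keys(fn.parameters?.properties ?? {}).sort();
  return { name: fn.name, params };
}

// List tools or parameters that existed before and are now gone.
function findBreakingChanges(previousTools, currentTools) {
  const current = new Map(
    currentTools.map((tool) => {
      const sig = toolSignature(tool);
      return [sig.name, sig.params];
    })
  );
  const problems = [];

  for (const tool of previousTools) {
    const { name, params } = toolSignature(tool);
    if (!current.has(name)) {
      problems.push(`tool removed or renamed: ${name}`);
      continue;
    }
    for (const param of params) {
      if (!current.get(name).includes(param)) {
        problems.push(`parameter removed or renamed: ${name}.${param}`);
      }
    }
  }

  return problems;
}
```

New tools pass this check by design, which is why the eval run in the last bullet is still needed: additions can change behavior without breaking the contract.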

Common pitfalls – Quick checklist

A summary you can scan before shipping:

| Pitfall | What it looks like | Fix |
| --- | --- | --- |
| Vague function descriptions | Model picks the wrong tool | Clearly describe when and when not to use each function |
| Too many tools (20+) | Inconsistent tool selection | Limit to <10 tools per request or implement tool search |
| Repeating tools in system prompt | Token waste, no accuracy gain | Define roles and rules instead of listing every tool |
| No argument validation | Hallucinated IDs, out-of-bounds values | Validate existence, authorization, and input bounds before execution |
| Direct destructive calls | Wrong refunds, accidental cancellations | Split into prepare → confirm → execute steps |
| Assuming one tool call | Crashes on parallel responses | Iterate over tool_calls, default to an empty array |
| Throwing on tool errors | Generic 500 errors reach users | Return structured error objects as tool results |
| Strict + parallel together | Schema drift in multi-call scenarios | Choose one approach (usually strict for reliability) |
| Hand-written JSON schemas | Type/schema inconsistencies | Use schema generators like Pydantic or Zod |

None of this is exotic. It’s the same kind of discipline you’d apply to any external API integration, except the “client” calling your functions is a probabilistic model rather than a deterministic system, which means the failure modes are weirder and your error handling has to be a little more forgiving.

Now that we understand how function calling works, let’s look at some of the use cases we apply it to across Kommunicate.

Customer support use cases

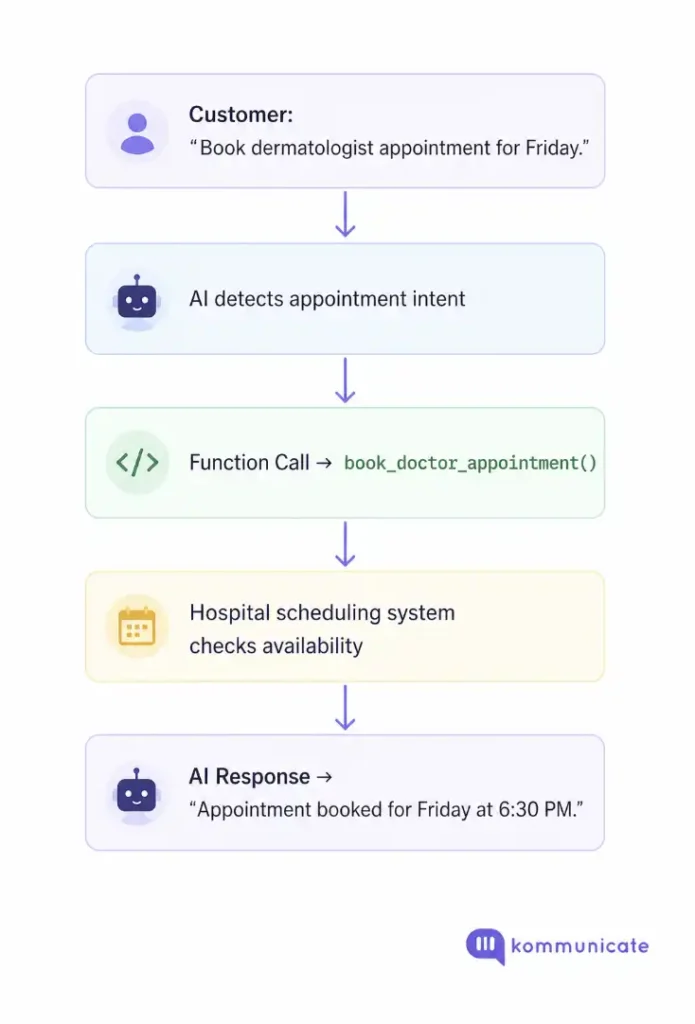

Imagine a patient asking: “Book a dermatologist appointment for Friday evening.”

The AI does not guess availability. Instead, it triggers a backend function.

book_doctor_appointment()

Your system checks availability. Then the AI replies: “Dr. Scott is available Friday at 6:30 p.m. Your appointment has been booked.”

This feels conversational. But underneath, it is structured system orchestration.

This can be used across different industries.

Industry-Wise Use Cases

| Industry | Customer Request | Function Call |
| --- | --- | --- |
| E-commerce | Where is my order? | get_order_status() |
| FinTech | Freeze my card | freeze_card() |
| Healthcare | Book appointment | book_doctor_appointment() |

Customer support is full of operational requests. This means that your AI agent for support needs to deliver outcomes, and not just answers.

E-commerce: Order Tracking, Returns, and Refunds

E-commerce support teams deal with repetitive operational requests.

Customers ask:

- Where is my order?

- Can I return this product?

- Has my refund started?

- Can I change my shipping address?

Without function calling, AI can only give static information.

With function calling, AI can interact with live systems.

Imagine a customer checking delivery status late at night.

No agent is online.

The AI calls: get_order_status(order_id)

Then responds: “Your package left the warehouse this morning and should arrive tomorrow.”

That feels helpful because it is connected to real data.

FinTech: Payments, Cards, and Sensitive Actions

FinTech support requires you to provide:

- Accuracy

- Trust

- Compliance

Connecting an AI agent to FinTech backend systems while keeping data privacy and security intact requires good function-calling practices.

So, when customers ask:

- Why was my payment declined?

- Show my last transactions

- Freeze my card

- Check loan eligibility

Your AI agent should query the real backend systems.

For example, a customer tells the AI agent: “Freeze my debit card immediately.”

The AI triggers: freeze_card(card_id)

The banking backend validates identity and the AI responds: “Your card has been temporarily frozen.”

Healthcare: Appointments and Patient Operations

Healthcare support is one of the most demanding spaces to innovate in. Patients usually want a specific outcome, not general information, so the agent needs function calling to deliver it.

If patients ask to:

- Book appointments

- Reschedule visits

- Check test reports

- Confirm doctor availability

The AI should be able to connect to the backend systems and give an accurate response.

Example: “Book a dermatologist appointment this Friday.”

AI calls: book_doctor_appointment()

Backend checks available slots.

Then the AI replies: “Dr. Scott is available on Friday evening, at 6:30 p.m.”

This gives the patient accurate information and lets them complete the action they came for.

Final Thoughts

Function calling is often presented as a technical feature. But in reality, it is one of the most important building blocks for practical AI.

This is the tool that helps your AI interact with real backend systems. In customer support, this shift is important because it’s the only way to deliver outcomes to customers.

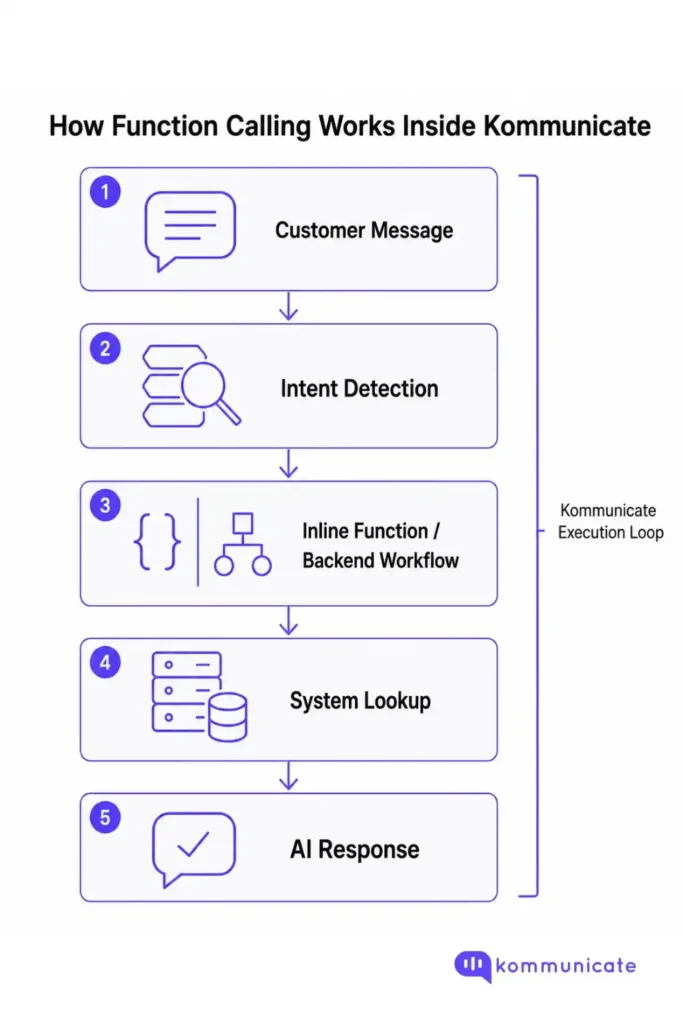

How does this work inside Kommunicate?

We use function calling inside the Kommunicate ecosystem as well.

While OpenAI exposes this capability through function calling and tools, Kommunicate approaches the problem through:

- Intents

- Inline code functions

- Backend integrations

- Workflow automation

For example: If a customer asks: “Where is my order?”

Inside Kommunicate, the AI first detects the intent. Then an inline function or backend workflow can be triggered.

That function may: fetch_order_status(order_id)

The system retrieves real-time data and the AI agent responds naturally.

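This creates a familiar execution loop: the AI detects the intent, a function or workflow is triggered, the system retrieves real-time data, and the agent replies.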

The important idea is this:

The principles behind tools, function calling, intents, and workflows are the same, i.e., AI becomes valuable when it can interact with systems.

This is what makes most production AI agents (including Kommunicate) actually useful.

If you want to see how function calling works at enterprise scale, book a demo with us.

FAQs

What is OpenAI function calling?

Function calling is the feature that lets an OpenAI model decide which of your code functions to run and what arguments to pass.

The model itself doesn’t execute the function. It returns a structured JSON request, your backend runs the actual code (a database lookup, an API call, an internal action), and then the result goes back to the model so it can write a final natural-language reply.

Is function calling the same as tool calling?

Yes. Function calling (also known as tool calling) provides a powerful and flexible way for OpenAI models to interface with external systems and access data outside their training data.

OpenAI originally called the feature “function calling” when it launched in June 2023 with a functions parameter, then renamed the API to tools and tool_choice in late 2023 to support more types of tools (web search, file search, MCP servers, etc.). The terms are interchangeable today.

Does the model execute my functions itself?

No. The model only outputs a JSON object describing which function it wants called and what arguments to pass. Your application is responsible for executing it.

This separation is intentional: it keeps your authentication, your database, and your business logic on your own servers, where the model never has direct access.

What is the difference between function calling and Structured Outputs?

They solve different problems and often work together.

Function calling is about connecting the model to actions and data. Use it when the model needs to fetch order data, freeze a card, or trigger a workflow.

Structured Outputs is about guaranteeing the shape of the model’s reply. It can be used inside function calling (via strict: true) to guarantee the function arguments match your schema, or used standalone via response_format to force the final user-facing reply into a specific JSON structure.

If you are connecting the model to tools, functions, data, etc. in your system, then you should use function calling. If you want to structure the model’s output when it responds to the user, then you should use a structured response_format.

Which OpenAI models support function calling?

Function calling is supported on essentially every modern OpenAI chat model. According to OpenAI, Structured Outputs with function calling is available on all models that support function calling in the API, including gpt-4o and gpt-4o-mini, all models from gpt-4-0613 and gpt-3.5-turbo-0613 onward, and any fine-tuned models that support function calling.

The newer GPT-4.1, GPT-5 family, and o-series reasoning models all support it as well, though reasoning models handle multi-step tool use through their internal reasoning loop rather than emitting parallel calls.

Does function calling cost extra?

There’s no separate fee for function calling itself, but your function definitions count as input tokens on every request. A bloated tool list with twenty verbose function descriptions can quietly add thousands of tokens to every call.

The two practical levers for cost: keep descriptions specific but concise, and only pass the tools relevant to the current conversation rather than your entire catalog.

What does parallel_tool_calls do, and when should I turn it off?

parallel_tool_calls controls whether the model can return multiple tool calls in a single response. It defaults to true on most modern models. You should turn it off (parallel_tool_calls: false) when:

- You need strict: true reliability guarantees (the two don’t fully play together).

- Your functions have ordering dependencies (function B needs function A’s result).

- You’re triggering destructive actions and don’t want the model to fire several at once on an ambiguous request.

For read-only support queries where the calls are independent, such as “where’s my order and what’s my next delivery?”, leaving it on saves significant latency.

How does OpenAI function calling compare with Anthropic and Google?

The mechanics are nearly identical. Both let you define tools as JSON Schemas and return structured arguments. The naming is the main difference:

- OpenAI → “function calling” / “tool calling”

- Anthropic (Claude) → “tool use”

- Google (Gemini) → “function calling”

If you’re migrating between providers, the schemas look very similar, but the response shapes and parameter names differ. Cross-provider abstraction libraries (LangChain, LiteLLM, Vercel AI SDK) can normalize these for you.

Should I always use strict mode?

In almost every production use case, yes. Setting strict to true ensures function calls reliably adhere to the function schema instead of being best effort, and OpenAI recommends always enabling strict mode.

The exceptions are narrow: when you absolutely need parallel function calling, when you’re using a fine-tuned model that doesn’t support it, or when your schema relies on JSON Schema features (pattern, format, minLength, etc.) that strict mode doesn’t enforce.

Why does the model call the wrong function?

Almost always, the answer is that your function descriptions are too vague. The model uses the description field to decide which tool to use. A description like “Gets order info” gives the model very little to work with, especially when several functions overlap.

A description that says when to use and when not to use the function, such as “Use this when a customer asks about delivery status. Do NOT use for refunds or returns.”, dramatically improves accuracy.

The other common cause is having too many tools. With 20+ functions in a single request, even a well-prompted model struggles to pick the right one consistently.

Does function calling work with the Responses API?

Yes. The Responses API supports function calling with the same tools parameter, but the response format is slightly different: tool calls and tool outputs are separate items rather than embedded in a message.

Each tool output must reference the original call_id to pair it with the right call. Most newer OpenAI guides default to the Responses API, but Chat Completions continues to work and is what most existing tutorials still use.
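A minimal sketch of that pairing is shown below. It assumes the Responses API item shapes (function_call items carrying call_id, name, and JSON-encoded arguments, answered with function_call_output items); the buildToolOutputs helper and the synchronous handlers passed to it are hypothetical.

```javascript
// Turn the function_call items in a response's output array into
// function_call_output items, paired by call_id.
function buildToolOutputs(outputItems, handlers) {
  return outputItems
    .filter((item) => item.type === "function_call")
    .map((item) => ({
      type: "function_call_output",
      call_id: item.call_id, // must match the original call
      output: JSON.stringify(handlers[item.name](JSON.parse(item.arguments))),
    }));
}
```

The returned items would go into the input of the next request so the model can write its reply. Error handling is omitted here for brevity.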

How do I get started with function calling for customer support?

Start with three things:

- Pick one high-volume support query that needs live data (usually “where is my order?”) and define a single function for it.

- Add strict: true and validate arguments before executing.

- Wrap the whole thing in a platform that handles intent detection, fallback, and human handoff for you, so you’re not building the orchestration layer from scratch.

That last part is what platforms like Kommunicate handle out of the box: detecting intent, triggering the right backend workflow, and falling back to human agents when the model can’t help.