Updated on April 7, 2026

Indian NBFCs have quietly become one of the fastest-growing pillars of the credit ecosystem. Recent estimates place the industry’s assets above ₹48 lakh crore, with annual growth projected at 15-17% through FY2028.

As digital-first NBFCs expand into personal loans and microfinance, their portfolios and borrower bases are scaling at double-digit CAGRs, with digital NBFC personal loan books alone expected to reach ₹3.6 lakh crore by FY2030.

Every additional crore in AUM translates into thousands of onboarding, servicing, and collections interactions across web, app, and WhatsApp, creating a structural surge in support volumes that traditional customer service teams were never designed to absorb.

Legacy IVR systems force borrowers through maze-like menus, misroute calls, and make it difficult to reach the right human, all of which directly erode satisfaction. First-generation FAQ chatbots are not much better: they can recite static policy answers but rarely authenticate users, retrieve account-level details, or trigger actions such as sending a statement or rescheduling an EMI.

Meanwhile, modern AI banking bots are already demonstrating that they can provide 24/7 support, handle simple transactions, and offer personalized guidance across channels.

AI-powered customer service offers a way out of this bind. AI chat and voice agents can sit on top of an NBFC’s core systems, understand natural-language intent in multiple Indian languages, authenticate customers, and then either resolve the request end-to-end or hand it off to a human with full context. In this article, we’ll explain how AI can automate NBFC customer service end-to-end. We’ll cover:

- Why do NBFCs Need Improved Customer Service?

- What does AI in Customer Service mean for NBFCs?

- Which parts of the Lending Life Cycle can AI Automate?

- Which Channels Should you Deploy AI Agents in?

- How to Build Compliance into AI Agents for NBFCs?

- How to Design AI Journeys to Cater to Borrower Psychology?

- What are the Potential Drawbacks and Risks of AI Agents in NBFCs?

- Conclusion

Why do NBFCs Need Improved Customer Service?

India’s NBFC sector is in a classic “good problem to have” phase: credit is expanding fast, portfolios are tilting toward small-ticket, retail-heavy products, and RBI is tightening the screws on how quickly and fairly customers must be heard. NBFCs are lending more to a broader range of individuals across multiple channels. And this is turning customer service into a structural bottleneck rather than a back-office function.

At the same time, digital-first borrowers now expect instant, personalized answers and escalate quickly when those expectations aren’t met. This creates an urgent need for improved customer service that delivers ROI at scale.

NBFCs need to address three problems urgently:

Increased Ticket Volumes

NBFCs have expanded their credit books significantly through FY2024, with regulators noting robust growth alongside improved asset quality and capital buffers.

Fintech NBFCs, in particular, are operating small-ticket, high-volume models; a recent report shows that active loans for fintech NBFCs are growing by about 25 percent year-on-year, outpacing traditional players.

Nearly half of NBFC credit exposure is now to the retail segment, which naturally generates dense traffic in onboarding, servicing, and collections journeys.

For COOs and support heads, this means millions of low-value but high-frequency interactions that legacy call centers and FAQ bots struggle to absorb.

Increased Regulations

On the regulatory side, the RBI’s Fair Practices Code requires NBFC boards to establish robust grievance redressal mechanisms and periodically review the effectiveness of customer complaint resolution.

The Integrated Ombudsman Scheme further codifies a hard 30-day window: if a regulated entity does not resolve a complaint within 30 days, customers can escalate directly to the RBI ombudsman.
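The 30-day window described above lends itself to a simple deadline tracker. The sketch below is illustrative only: the function names are hypothetical, and the way days are counted (calendar days from the date of receipt) is an assumption that should be checked against the scheme text and your own policy.

```python
from datetime import date, timedelta

# 30-day window taken from the article; the counting convention is an assumption.
RESOLUTION_WINDOW_DAYS = 30

def escalation_date(received: date) -> date:
    """Date from which the customer could escalate if the complaint is unresolved."""
    return received + timedelta(days=RESOLUTION_WINDOW_DAYS)

def days_left(received: date, today: date) -> int:
    """Days remaining before the window closes (negative if already breached)."""
    return (escalation_date(received) - today).days

def needs_attention(received: date, today: date, warn_at: int = 7) -> bool:
    """Flag tickets that are within `warn_at` days of the deadline (hypothetical threshold)."""
    return days_left(received, today) <= warn_at
```

A support dashboard could run `needs_attention` over open tickets each morning so that ageing complaints reach a human well before the window closes.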

In 2025, the RBI Governor explicitly urged NBFC leaders to ensure fair customer treatment and implement mechanisms for prompt grievance redressal.

Increased Customer Expectations

While regulation is raising the floor, customer expectations are raising the ceiling. Recent consumer research indicates that tolerance for friction and waiting is declining sharply, while expectations for speed and quality of service are increasing across various categories, including financial services.

In banking and NBFC-like journeys, 50-70% of customers now prefer digital self-service because of the perceived convenience and speed.

For NBFCs, the crunch is therefore threefold: ballooning interaction volumes, stricter RBI scrutiny, and a customer base that increasingly judges them on responsiveness and personalization. However, financial institutions also need a privacy-first perspective on agentic AI, which we address in the next section.

What does AI in Customer Service mean for NBFCs?

For NBFCs, AI in customer service means replacing a thin layer of FAQ automation with an intelligent, regulated “frontline” that can actually understand borrower intent, view account data (with guardrails), and take action across various channels, including web, app, WhatsApp, and voice. Instead of just answering “what is your EMI policy?”, AI systems can answer “what is my EMI, can I reschedule it, and can you send me the link now?” in real time.

In practice, this usually shows up as a stack of AI capabilities:

- AI Chatbots and Voice Bots that use NLP and machine learning to converse in natural language, authenticate users, and assist with tasks like checking balances, tracking EMIs, sending statements, and answering policy questions 24/7.

- Omnichannel Automation, where the same AI brain responds consistently on web, mobile apps, WhatsApp, and other messaging channels, so borrowers don’t get different answers on different platforms.

- Agent Assist Tools, which read chats and suggest the best actions, summaries, and compliance-friendly responses, helping human agents resolve cases faster and with fewer errors.

For NBFCs specifically, AI customer service maps directly to the loan lifecycle:

- Onboarding flows that guide customers through eligibility and KYC

- Servicing journeys that give instant clarity on due dates, charges, and statements

- Collections journeys where bots nudge, collect promises to pay, and even negotiate basic restructuring options

- Support for back-office functions like document verification and fraud checks.

Done well, this doesn’t just cut costs; it directly supports RBI’s push for faster, fairer grievance handling by reducing turnaround times and creating auditable logs of every interaction.

Strategically, then, AI in customer service for NBFCs means three big things:

- Automation of L1/L2, so a large share of repetitive queries never reach human agents, freeing them for high-risk and high-emotion cases. Real-world deployments report automation of 60–80% of routine queries while still allowing seamless handover to humans.

- Personalized, Proactive Support, where AI doesn’t just wait for tickets but predicts and prevents issues: nudging borrowers before a missed EMI or flagging friction in a digital application journey.

- Data-Driven Frontline, where every response can be logged, searched, and audited, and where AI helps surface patterns in complaints and service failures. This is precisely what the RBI is now urging financial institutions to do with AI.

In short, for NBFCs, AI in customer service is not “a chatbot on the website.” It’s a redesign of the entire support layer into an intelligent, always-on, and regulation-aware system that can scale with digital loan books while still keeping borrowers on your side.

This approach can help you automate different parts of the lending lifecycle.

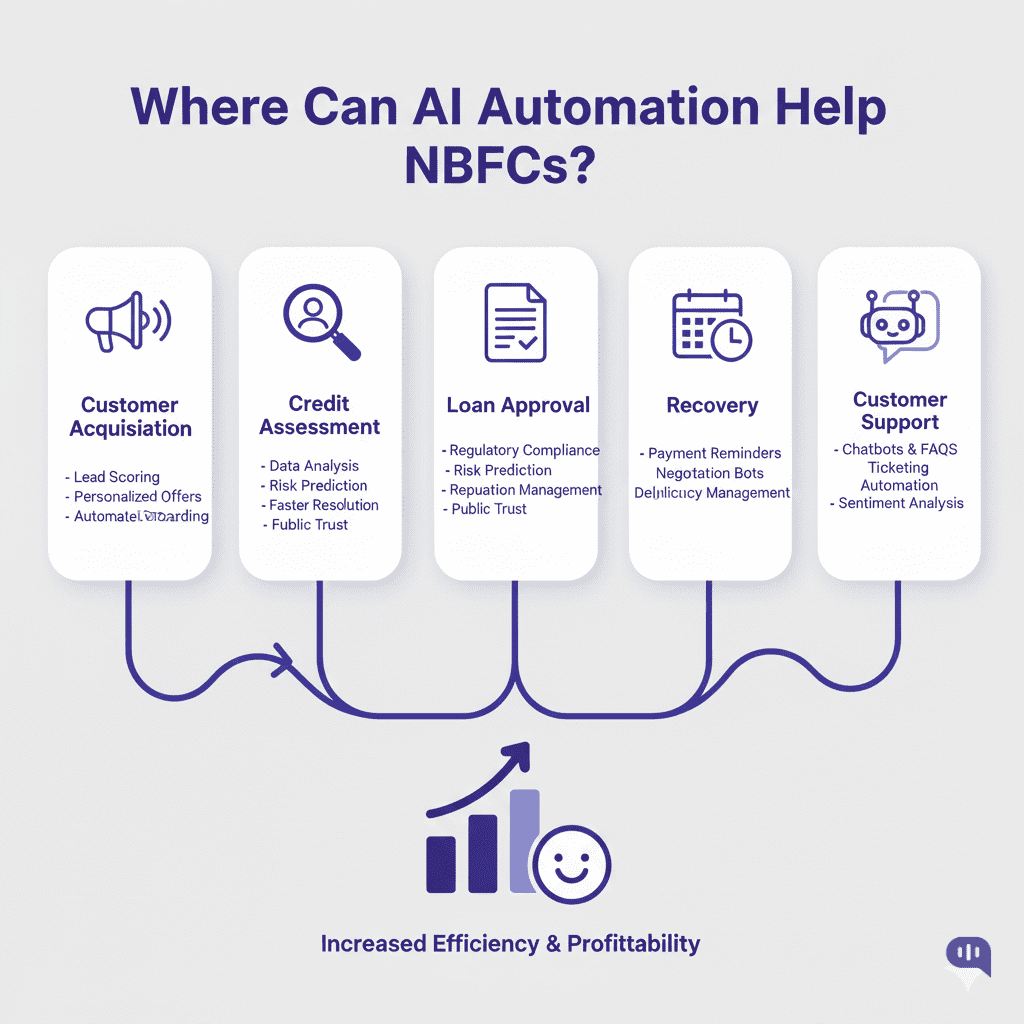

Which parts of the Lending Life Cycle can AI Automate?

AI can now touch almost every stage of the lending life cycle for an NBFC: from the first click on an ad to the last recovery reminder.

The RBI breaks the loan life cycle into:

- Customer acquisition

- Credit assessment

- Loan approval and disbursement

- Recovery

- Associated customer service

And each of these stages now has mature AI use cases. Below is how AI maps to each part of that life cycle.

1. Customer Acquisition and Pre-Qualification

AI can automate top-of-funnel tasks, such as scoring leads, responding to website or WhatsApp queries, and conducting instant “soft checks” before a customer begins a complete application. Chatbots and AI agents can answer product questions, calculate indicative EMIs, and nudge borrowers into a complete application without agent intervention.
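An indicative EMI like the one mentioned above is usually computed with the standard reducing-balance formula, EMI = P·r·(1+r)^n / ((1+r)^n − 1), where r is the monthly rate. The following is a minimal sketch; the function name and rounding choice are assumptions, and a production calculator would follow the lender's own rate and fee conventions.

```python
def indicative_emi(principal: float, annual_rate_pct: float, months: int) -> float:
    """Indicative EMI using the standard reducing-balance formula.

    principal: loan amount, annual_rate_pct: nominal yearly rate in percent,
    months: tenure in months. Result is rounded to 2 decimals (an assumption).
    """
    if principal <= 0 or months <= 0:
        raise ValueError("principal and months must be positive")
    monthly_rate = annual_rate_pct / 12 / 100
    if monthly_rate == 0:
        # Zero-interest case: the formula would divide by zero, so split evenly.
        return round(principal / months, 2)
    growth = (1 + monthly_rate) ** months
    return round(principal * monthly_rate * growth / (growth - 1), 2)


if __name__ == "__main__":
    # Example inputs are illustrative, not taken from the article.
    print(indicative_emi(100_000, 12.0, 12))
```

A chatbot would present this figure as indicative only and defer to the official offer for the binding rate and charges.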

2. Onboarding, KYC, and Documentation

Once a borrower starts the journey, AI can guide them through onboarding and KYC end-to-end. Computer vision and OCR can be used to read:

- ID proofs

- Bank statements

- Payslips

Facial recognition can be used to support video KYC, and rule engines can be used to check for document completeness and consistency.
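A rule engine for completeness and consistency can start very small. The sketch below assumes OCR has already produced a dictionary of extracted fields per document; the document keys, the `name` field, and the two rules are all hypothetical and stand in for whatever your KYC policy actually requires.

```python
# Required document types, mirroring the list above (keys are hypothetical).
REQUIRED_DOCS = {"id_proof", "bank_statement", "payslip"}

def check_application(docs: dict[str, dict]) -> list[str]:
    """Return a list of human-readable issues; an empty list means the checks passed.

    `docs` maps a document type to the fields extracted from it, for example
    {"id_proof": {"name": "Asha Rao"}, ...}.
    """
    issues = []

    # Completeness: every required document type must be present.
    for doc_type in sorted(REQUIRED_DOCS - docs.keys()):
        issues.append(f"missing document: {doc_type}")

    # Consistency: the applicant name should match across documents.
    names = {
        fields["name"].strip().lower()
        for fields in docs.values()
        if fields.get("name")
    }
    if len(names) > 1:
        issues.append("name mismatch across documents")

    return issues
```

Returning a list of issues rather than a single pass/fail flag lets the agent tell the borrower exactly what to re-upload.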

3. Credit Assessment and Underwriting

Credit assessment is one of the richest areas for AI automation. Machine learning models now evaluate bureau history, income patterns, cash-flow data, and alternative data (e.g., utility payments, digital footprints) to generate risk scores and recommend limits or pricing within seconds.

For NBFCs doing high-volume, small-ticket loans, this is what makes “instant” or “same-day” credit decisions feasible.

4. Approval, Agreements, and Disbursement

After a decision is made, AI can streamline the approval and disbursement process. Document generation tools auto-populate loan agreements; e-signature flows are triggered automatically; and bots can verify key conditions (e.g., KYC complete, mandates registered) before green-lighting disbursal.

AI-driven checks also help NBFCs comply with the RBI’s digital lending guidelines on data usage, consent, and disbursement flows to borrower accounts, rather than third-party pools.

5. Loan Servicing and Ongoing Customer Support

Once the loan is live, AI agents can automate a significant portion of day-to-day servicing, including answering questions about outstanding balances, next EMI dates, interest rate changes, statements, foreclosure charges, and address updates.

AI can also trigger proactive notifications so customers don’t have to call in at all.

6. Collections, Restructuring, and Recovery

Collections is where many NBFCs feel the most pain, and AI is increasingly embedded here. Predictive models can segment borrowers by risk and willingness to pay, helping teams prioritize who should receive digital nudges, who requires agent follow-up, and who should proceed quickly to legal channels.
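Once a model has produced risk and willingness-to-pay scores, the routing step described above can be expressed as a few explicit rules. This is a sketch under stated assumptions: the score ranges, thresholds, and action names are hypothetical, and real cut-offs would come from your own portfolio data and policy review.

```python
from dataclasses import dataclass

@dataclass
class BorrowerScore:
    risk: float         # 0.0 (low risk) to 1.0 (high risk), from a hypothetical model
    willingness: float  # 0.0 (unwilling) to 1.0 (willing to pay)

def next_action(score: BorrowerScore) -> str:
    """Map scores to one of three collection tracks (thresholds are illustrative)."""
    if score.risk < 0.4 and score.willingness >= 0.5:
        return "digital_nudge"      # low risk, willing: automated reminder is enough
    if score.risk >= 0.8 and score.willingness < 0.2:
        return "legal_review"       # high risk, unwilling: route to human legal review
    return "agent_follow_up"        # everything else gets a human agent
```

Keeping the rules this explicit makes them easy for risk and compliance teams to review, and the default branch deliberately favours human follow-up over automation.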

Conversational AI on SMS and WhatsApp can send reminders, collect “promise to pay” dates, trigger payment links, or offer structured options, such as short-term moratoriums or tenure extensions.

7. Analytics, Risk Monitoring, and Compliance

Finally, AI can automate the “meta-layer” of lending, including monitoring portfolio health, detecting early warning signals, and surfacing compliance issues. Lenders are already utilizing AI to track stress at a segment level, identify unusual patterns such as potential fraud, and generate dashboards for management and regulators.

In short, AI can automate every significant stage of the lending life cycle for NBFCs while also strengthening the oversight layer of analytics and compliance. The art is in deciding where to go “full automation” and where to keep humans firmly in the loop.

For each of these parts of the lifecycle, there are different channels you can deploy AI agents in. In the next section, we will examine the AI agent’s channel fit matrix.

Which Channels Should you Deploy AI Agents in?

Here’s a channel-by-channel view of where AI agents make the most sense for an NBFC and what each is best at.

| Channel | How AI Shows Up | Most Effective For | What to Watch Out For |
| --- | --- | --- | --- |
| Website & Mobile App Chat | AI chatbot inside your site/app widget, often tied to login and core systems. | Logged-in servicing (EMI dates, outstanding balance, statements, charges, foreclosure estimates); guided onboarding (eligibility checks, product comparison, application help); cross-sell/upsell during self-service journeys | Needs tight integration with LOS/LMS/CRM for account-specific answers; UX should allow smooth handover to human chat. |
| WhatsApp Business (Chat) | AI assistant on WhatsApp handling conversational flows, reminders, and links. | EMI reminders, payment links, mini-statements; simple loan services (status checks, basic KYC follow-ups); quick customer support for FAQs and low-risk servicing queries | Meta now bans general-purpose AI bots; flows should be narrow, task-specific, and policy-compliant (no open-ended chat). Also, be careful with PII and consent. |
| SMS & Rich Messaging (RCS) | AI decides who to nudge, when, and with what message; links lead to web/app/WhatsApp journeys. | One-way or low-friction use cases (EMI reminders, due/overdue alerts, KYC expiry alerts); triggering self-service flows via smart links or short codes | Great for reaching out, but limited for complex conversations; keep content concise and compliant, and avoid sharing sensitive details directly in SMS. |
| Email (AI-Assisted) | AI triages, drafts replies, summarizes threads, and powers smart auto-responses. | Complex servicing where a written trail is important (disputes, restructuring, complaints); regulatory and ombudsman-facing communication where precise language matters; internal case routing and prioritization | Keep a human in the loop for high-risk decisions and log AI-generated content for audit purposes. More useful as “agent-assist” than as a fully autonomous channel in NBFCs. |
| Social Messaging (Facebook/Instagram) | Lightweight chatbots and AI templates handling public/DM queries, then moving customers to secure channels. | Brand-level queries, branch info, generic product FAQs; capturing complaints and redirecting to secure web/app/WhatsApp/voice journeys | Don’t handle full KYC or account-specific actions here; treat as top-of-funnel + complaint capture, with quick routing to more secure channels. |
| Agent Desktop (Internal “AI Copilot”) | AI suggests replies, fetches account context, summarizes calls/chats, and recommends next-best-actions. | Faster resolution on complex tickets across all channels; reducing AHT, training time, and compliance risk for human agents; post-call summaries and tagging for RBI audits and internal QA | Not customer-facing, but often the highest-ROI AI deployment. Needs deep integration with CRMs and telephony, plus clear guardrails for what agents can/can’t send. |

For NBFCs, start with website/app chat and WhatsApp as your core customer-facing AI channels, and back them up with an agent-assist copilot on the contact center side. Then layer in SMS, email, and social as orchestration channels that your AI uses to route customers into the right, secure journey rather than trying to do everything everywhere.

Another thing to consider is that NBFCs must comply with regulations at every level. You can build a compliance-first AI agent by following a few principles.

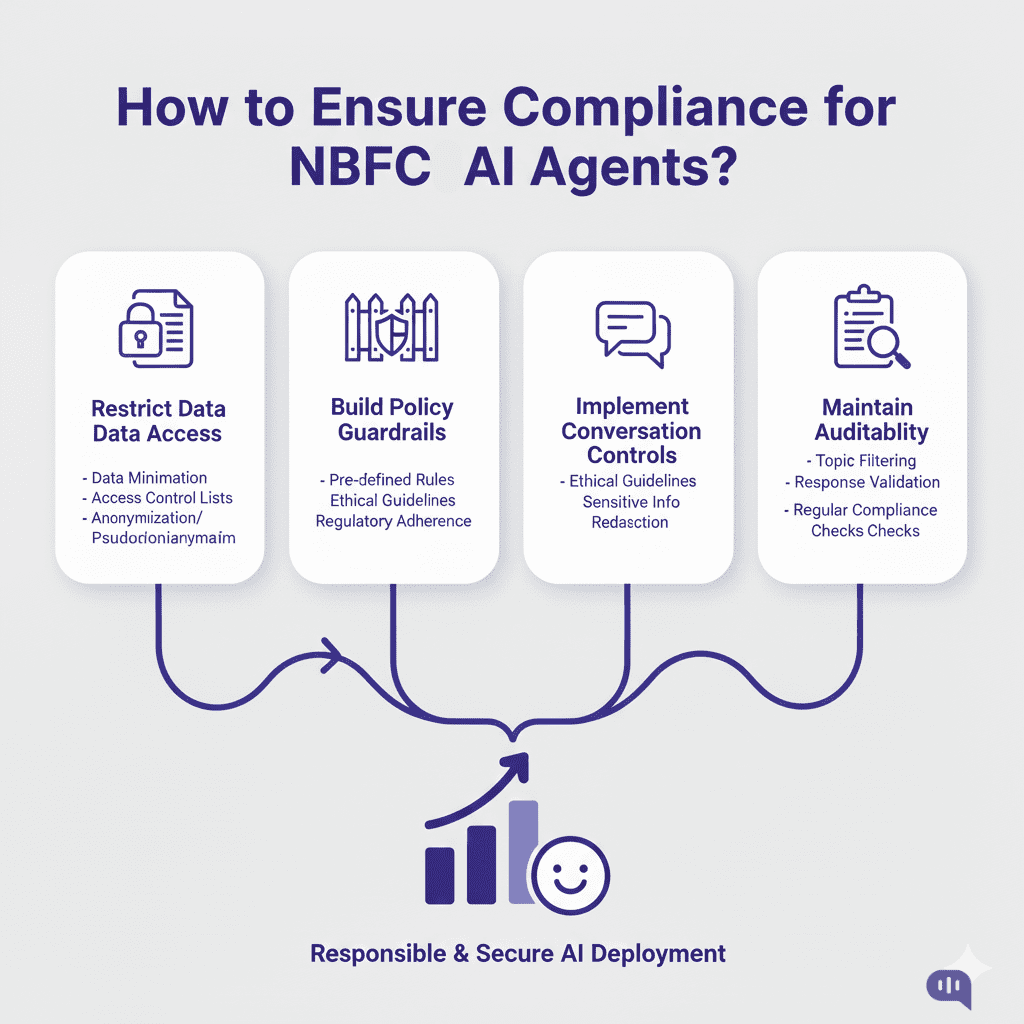

How to Build Compliance into AI Agents for NBFCs?

Designing AI agents for NBFCs is not just a UX or productivity exercise; it is a compliance exercise with RBI looking over your shoulder. The central bank’s digital lending guidelines, Fair Practices Code, and Integrated Ombudsman Scheme all assume that customer communication will be transparent, auditable, and fair—regardless of whether the “frontline” is a human or an AI. If AI agents handle onboarding, servicing, or collections, NBFCs must treat them as regulated touchpoints and incorporate compliance into the architecture from the outset, rather than as an afterthought.

A practical way to approach this is in four layers: data access, policy guardrails, conversation controls, and auditability.

Data Access: Principle of Least Privilege

First, AI agents should only be presented with the minimum data necessary to complete a task. The RBI’s digital lending framework explicitly emphasizes the importance of purpose limitation, consent, and the secure handling of customer data throughout digital journeys. Instead of giving an AI model raw access to your core banking or LOS/LMS database, expose a narrow set of APIs that enforce business rules: “get EMI schedule for this authenticated customer,” “generate statement,” “update contact details,” etc.
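One way to picture this narrow API surface is an allowlist of tools that the agent can call, with the customer ID always taken from the authenticated session rather than from model output. The sketch below is a simplified illustration: the `Session` type, tool names, and stubbed return values are hypothetical, not a real LOS/LMS interface.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Session:
    customer_id: str
    authenticated: bool

# Stub back-end calls; in practice these would wrap hardened internal APIs.
def get_emi_schedule(customer_id: str) -> dict:
    return {"customer_id": customer_id, "schedule": []}

def generate_statement(customer_id: str) -> dict:
    return {"customer_id": customer_id, "statement": "queued"}

# The only operations the agent may invoke. Anything else is rejected.
ALLOWED_TOOLS: dict[str, Callable[[str], dict]] = {
    "get_emi_schedule": get_emi_schedule,
    "generate_statement": generate_statement,
}

def call_tool(session: Session, tool_name: str) -> dict:
    """Run an allowlisted tool for the authenticated customer only."""
    if not session.authenticated:
        raise PermissionError("customer is not authenticated")
    tool = ALLOWED_TOOLS.get(tool_name)
    if tool is None:
        raise PermissionError(f"tool not allowed: {tool_name}")
    # The customer ID comes from the session, never from the model's text.
    return tool(session.customer_id)
```

Because the model can only name a tool and never supply the customer ID, a prompt-injected request for another borrower's data has nothing to act on.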

Policy Guardrails: Encode RBI and Internal Rules

Second, build your regulatory and internal policies into the agent’s brain and plumbing. RBI’s Fair Practices Code requires that NBFCs communicate interest rates, charges, and terms in a transparent and non-misleading manner and maintain a robust grievance redressal mechanism. The digital lending guidelines also prohibit specific fee structures, mandate clear disclosure of key facts, and discourage over-aggressive collection practices. In an AI context, this means:

- Hard-Coding No-Go Zones: for example, the agent must not offer restructuring terms that fall outside approved templates, or commit to interest rates without first obtaining the official offer.

- Maintaining Policy Libraries: up-to-date RBI circulars, internal product notes, and collections scripts that the AI can reference but not override.

- Using Configurable Flows for sensitive journeys (e.g., restructuring, settlements, hardship assistance) so business and legal teams approve the exact sequence and language.
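A hard-coded no-go zone can be as simple as a validation step between what the model proposes and what the customer sees. The sketch below assumes a small table of approved restructuring templates; the option names and month limits are invented for illustration and would be set by your business and legal teams.

```python
# Approved restructuring templates (names and limits are hypothetical).
APPROVED_TEMPLATES = {
    "tenure_extension": {"max_months": 6},
    "payment_holiday": {"max_months": 2},
}

def is_offer_allowed(option: str, months: int) -> bool:
    """Return True only if the proposed offer fits an approved template."""
    template = APPROVED_TEMPLATES.get(option)
    if template is None:
        return False  # unknown option types are never offered
    return 1 <= months <= template["max_months"]

def filter_offers(proposed: list[tuple[str, int]]) -> list[tuple[str, int]]:
    """Drop any model-proposed offer that falls outside approved templates."""
    return [(opt, m) for opt, m in proposed if is_offer_allowed(opt, m)]
```

Running every proposed offer through `filter_offers` before it is rendered means the model can suggest, but only policy decides.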

Conversation Controls

Third, control how the agent speaks and what it’s allowed to say. RBI has repeatedly reminded lenders to avoid harassment, unfair practices in collections, and opaque communication. For AI agents, this translates into:

- Scripted rails for high-risk contexts, such as collections, complaints, and ombudsman-related interactions. The AI can personalize phrasing within a template, but core statements, disclaimers, and options remain fixed.

- Tone and language guidelines that encode empathy and non-coercive phrasing, especially when customers indicate financial distress. Some NBFC-focused AI providers now offer sentiment detection and “tone check” features, allowing responses to be softened when necessary.

- Fallback-to-human rules: If a customer disputes a transaction, alleges mis-selling, mentions harassment, or uses specific trigger phrases (“RBI complaint,” “legal notice,” etc.), the bot should escalate the case immediately to a trained human agent and clearly state that the case is being handed over.

Auditability and Record-Keeping

Finally, treat every AI conversation as if it were a potential exhibit in front of an auditor or ombudsman. The RBI’s Integrated Ombudsman Scheme expects clear records of how a complaint was handled and within what timeline. That means your AI stack must:

- Log Every Interaction (prompt, response, back-end calls) with timestamps and IDs.

- Tie Logs to Customer Profiles and Ticket IDs so that you can reconstruct the whole journey.

- Provide Replay and Search Tools for compliance and risk teams to review specific conversations, spot patterns in grievances, and refine guardrails.
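The logging requirements above map naturally onto one structured record per interaction. The sketch below shows one possible shape, written as JSON lines; the field names are assumptions, and where the records are stored and how long they are retained is left to your own infrastructure and policy.

```python
import json
import uuid
from datetime import datetime, timezone

def build_audit_record(
    customer_id: str,
    ticket_id: str,
    prompt: str,
    response: str,
    backend_calls: list[str],
) -> dict:
    """Build one audit record tying an AI exchange to a customer and ticket."""
    return {
        "event_id": str(uuid.uuid4()),                        # unique ID per interaction
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC, ISO 8601
        "customer_id": customer_id,
        "ticket_id": ticket_id,
        "prompt": prompt,
        "response": response,
        "backend_calls": list(backend_calls),
    }

def to_log_line(record: dict) -> str:
    """Serialize a record as a single JSON line for append-only storage."""
    return json.dumps(record, ensure_ascii=False, sort_keys=True)
```

Because each line carries both `customer_id` and `ticket_id`, replay and search tooling can filter on either to reconstruct a full journey.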

Some NBFC solution providers (like Kommunicate) have started bundling “conversation intelligence” that auto-summarizes chats, tags potential risk terms, and surfaces systematic issues in processes or scripts.

In practice, building compliance into AI agents for NBFCs looks like this:

- Start with narrow, well-defined journeys (e.g., EMI queries, basic account servicing) and integrate through hardened APIs.

- Layer in policy guardrails that encode RBI and internal rules; keep sensitive flows template-driven.

- Enforce conversation-level controls (tone, escalation triggers, banned promises) and keep humans in the loop for high-risk scenarios.

- Invest in logging, monitoring, and review tooling, so that every AI interaction is auditable and improves your controls over time.

Done this way, AI agents stop being a compliance risk and become an asset. You can also enhance this asset by designing it to align with borrower psychology.

How to Design AI Journeys to Cater to Borrower Psychology?

AI journeys must be designed not only for operational efficiency but also to minimize emotional friction and foster trust, particularly in collections and restructuring processes.

Below are five practical design principles that translate borrower psychology into AI journey design.

1. Make It Safe: Reduce Shame and Fear in Every Interaction

Debt-related shame and stigma can be so intense that borrowers avoid calls, ignore emails, and delay engaging with lenders, even when they want to regularize their accounts.

AI agents should be designed to lower that barrier:

- Use neutral, non-judgmental language (“You have an overdue EMI of ₹X. Let’s look at your options”) instead of blame (“You failed to pay on time”).

- Offer private, self-service channels (web, app, WhatsApp) where borrowers can view their dues, explore options, and pay without speaking to a representative, which evidence suggests reduces embarrassment and increases their willingness to engage.

- Avoid public-facing nudges that could expose a borrower’s situation to others; keep sensitive content behind authenticated journeys.

When your AI feels like a calm, private guide rather than a loudspeaker, you remove one of the most significant psychological barriers to repayment: fear of being judged.

2. Nudge, Don’t Nag

Behavioural science shows that subtle “nudges” can meaningfully reduce delinquency without coercion. For AI journeys, that means:

- Use specific, time-bound prompts (“Can you make a payment by Friday, 7 PM?”) that help borrowers form concrete plans, which research links to better follow-through.

- Test social norm framing where appropriate (“Most customers in your situation choose to clear at least the overdue EMI this week”) while staying within RBI’s guidelines on non-coercive communication.

- Let borrowers choose among options so they feel in control rather than cornered.

The AI’s job is to help borrowers do what many already want to do by closing the intent–action gap, not by escalating pressure.

3. Design for Context and Timing

A borrower’s state of mind at 9 a.m. on salary day is very different from 11 p.m. at the end of the month. Digital collections research emphasizes that automated, contextual messages across preferred channels shorten the time from first contact to resolution and improve cash flows. To align with borrower psychology, AI journeys should:

- Use behavioural segmentation (e.g., salary credit patterns, past repayment behaviour) to time nudges when borrowers are most able and willing to pay.

- Respect quiet hours and cultural norms; RBI has repeatedly warned against odd-hour calls and intrusive contact, and the same principles should govern automated outreach.

- Choose the right channel for the moment: a soft WhatsApp reminder before the due date, an in-app banner on login, or an email summary for customers who prefer longer-form communication.
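Respecting quiet hours in automated outreach usually comes down to a time-window check before any message is sent. The sketch below uses an 8 a.m. to 7 p.m. window purely as an illustrative, configurable default; the actual permitted hours should come from current RBI guidance and your internal policy, and times here are assumed to be in the borrower's local time.

```python
from datetime import datetime, time, timedelta

# Illustrative contact window; configure from policy, not from this sketch.
WINDOW_START = time(8, 0)
WINDOW_END = time(19, 0)

def in_contact_window(now: datetime) -> bool:
    """True if `now` falls inside the permitted outreach window."""
    return WINDOW_START <= now.time() < WINDOW_END

def next_send_time(now: datetime) -> datetime:
    """Return `now` if sending is allowed, else the next window opening."""
    if in_contact_window(now):
        return now
    opening = datetime.combine(now.date(), WINDOW_START, tzinfo=now.tzinfo)
    if now.time() >= WINDOW_END:
        opening += timedelta(days=1)  # too late today: wait for tomorrow morning
    return opening
```

Scheduling every nudge through `next_send_time` means a reminder generated at 11 p.m. is held until the next morning instead of being sent immediately.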

Think of your AI not as a single bot, but as a coordinated system of touchpoints tuned to when borrowers are most receptive.

4. Build Restructuring into the UX

AI journeys that only repeat “You are overdue, pay now” amplify helplessness. NBFCs should design flows where, after acknowledging distress, the AI can:

- Screen for hardship (“Are you facing a temporary income issue?”) using simple, stigma-free questions.

- Present policy-compliant options like short-term payment holidays, tenure extensions, or structured part-payments, where allowed under RBI and internal rules.

- Clearly explain the consequences and benefits of each option (including the impact on credit score, additional interest, and restoration of regular status), so borrowers can make informed choices rather than panicked ones.

Digital debt collection frameworks are increasingly emphasizing that empathetic, solution-oriented journeys preserve long-term relationships and improve recovery, rather than treating every case as a zero-sum confrontation.

5. Close the Loop with Feedback and Human Touch

Finally, borrower psychology is not static. Emotions shift as situations change. AI journeys should leave room for:

- Easy Escalation to Humans whenever the borrower says they are confused, distressed, or wish to speak to “someone.” RBI guidance on harassment and fair practices makes it clear that respectful human engagement is non-negotiable.

- Post-interaction feedback (“Was this helpful?” “Do you feel your issue was understood?”) that informs continuous improvement loops.

- Real-time Monitoring of sentiment and outcomes enables compliance and CX teams to refine messages, scripts, and options based on what actually reduces stress and delinquency.

An AI journey that listens and adapts over time will feel more like a long-term partner than a script.

Now that you’re ready to handle AI implementation at NBFCs, let’s talk about some drawbacks of the technology.

What are the Potential Drawbacks and Risks of AI Agents in NBFCs?

AI agents can transform NBFC operations, but they also present specific risks that require deliberate management.

- Regulatory non-compliance if bots deviate from RBI guidelines or misstate rates, charges, or eligibility.

- AI hallucinations leading to confidently wrong answers on sensitive topics like EMIs, restructuring options, or due dates.

- Data privacy and security risks when agents are connected to LOS/LMS/CRM systems without strict access controls in place.

- Biased or unfair treatment of specific customer segments based on skewed training data or flawed logic.

- Over-automation that traps distressed or complex cases in bot loops without clear escalation to humans.

- Poorly designed tone or language in collection journeys that borrowers can perceive as harassing or insensitive.

- Operational fragility from heavy dependence on a single AI vendor, model, or proprietary workflow stack.

- Loss of institutional knowledge if teams rely blindly on AI and stop investing in agent training and process clarity.

- Inconsistent experiences across channels when AI journeys are not aligned with human-assisted and branch workflows.

- Reputational damage if customers perceive AI as opaque, untrustworthy, or “creepy” in how it uses their data.

Handled well, these risks are manageable, but if NBFCs ignore them, AI agents can scale problems faster than they scale benefits.

Conclusion

AI customer service is no longer a side project for NBFCs; it is the only realistic way to keep up with surging volumes, tighter RBI scrutiny, and borrowers who expect instant, personalised answers on their channel of choice. When you combine lifecycle automation (from acquisition to recovery) with channel fit, compliance-first guardrails, and journeys tailored to borrower psychology, AI agents stop being “just another chatbot” and start functioning as a resilient frontline. Done right, they reduce cost to serve, improve collections, and make every interaction more transparent and auditable for both customers and regulators.

The opportunity, however, lies in thoughtful execution: starting with a few high-impact journeys, keeping humans in the loop where it matters, and treating compliance and empathy as design inputs rather than afterthoughts. If you want to see what this looks like in practice, you can book a 15-minute demo with the Kommunicate team, or go hands-on and sign up for an account to start building your own NBFC-ready AI agents today.

Devashish Mamgain is the CEO & Co-Founder of Kommunicate, with 15+ years of experience in building exceptional AI and chat-based products. He believes the future is human and bot working together and complementing each other.