Updated on March 18, 2026

An AI customer support RFP template should cover functional capabilities, escalation design, security requirements, SLA commitments, and a risk-aware scoring rubric. This guide includes a complete vendor evaluation framework with red flags and a pilot testing structure.

Most AI customer support RFPs fail before a single demo is scheduled.

This is because procurement departments treat AI customer service vendor selection like a SaaS purchase: compare features, check pricing, watch a polished demo, pick the one that feels most capable. Then they sign, deploy, and discover six months later that their bot is fabricating refund policies, looping tickets between AI and human agents without resolving either, or quietly funnelling conversation data into a shared training model they never explicitly authorized.

This guide is not just a template. It is a buyer’s evaluation system for selecting AI customer support vendors safely, and we’re covering:

1. Why do Most AI Chatbot RFPs miss the Real Risks?

2. What Should You Gather Before You Write the RFP?

3. RFP Template: Which Features to Ask for and Why?

4. RFP Template: Security Requirements: Our Two-Layer Framework

5. RFP Template: SLA Requirements

6. Risk-Aware Scoring Rubric for AI Customer Service

7. Red Flags That Should Lower or Eliminate a Vendor’s Score

8. How can you run a Meaningful Pilot?

9. Conclusion

Why do Most AI Chatbot RFPs miss the Real Risks?

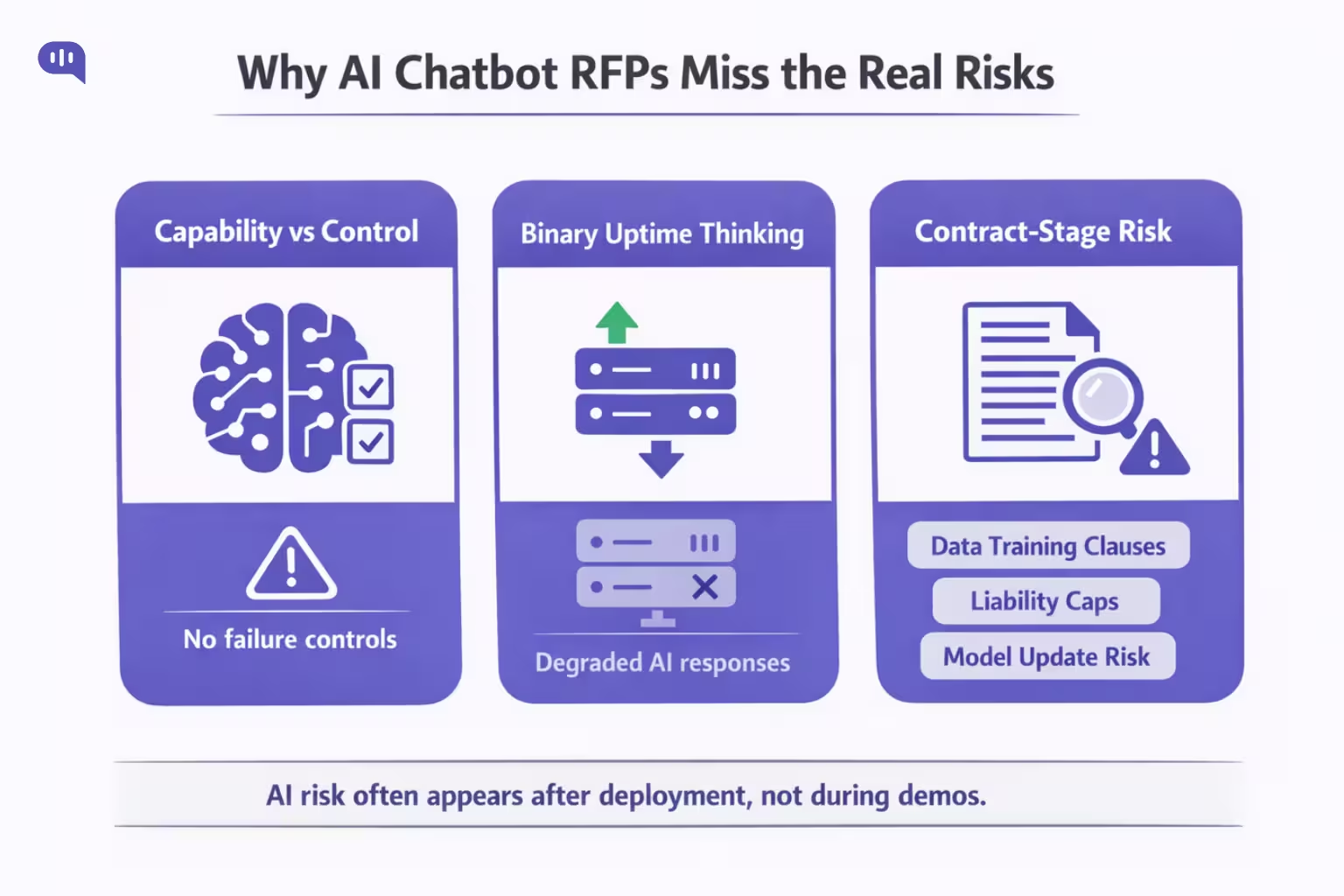

Standard RFP evaluation misses three things specific to AI tools:

They Score Capability and not Control – A vendor can demonstrate impressive accuracy on a curated demo dataset while having no documented process for handling confident-sounding hallucinations or adversarial user inputs. A feature score of 5/5 for “natural language understanding” tells you nothing about what the system does when a customer deliberately tries to manipulate it.

They Ignore the Third State – Traditional SaaS has an uptime risk: the system is either running or it isn’t. AI, however, can be available but provide degraded performance. A live model that produces stale, inaccurate, or tone-deaf responses is worse than downtime because it actively damages customer trust before anyone notices.

They Miss the Risk from the Contract Stage – The most consequential risks in AI vendor selection aren’t visible during evaluation. They sit in the contract: clauses that allow vendors to train shared models on your conversation data, liability caps that leave you unprotected after a breach, and rollback restrictions that lock you into a model version you cannot revert when a vendor update degrades performance.

For buyers in this space, a lack of control or degraded performance can result in poor service delivery, which can cascade into lost revenue. This is why procurement teams should prepare before issuing the request.

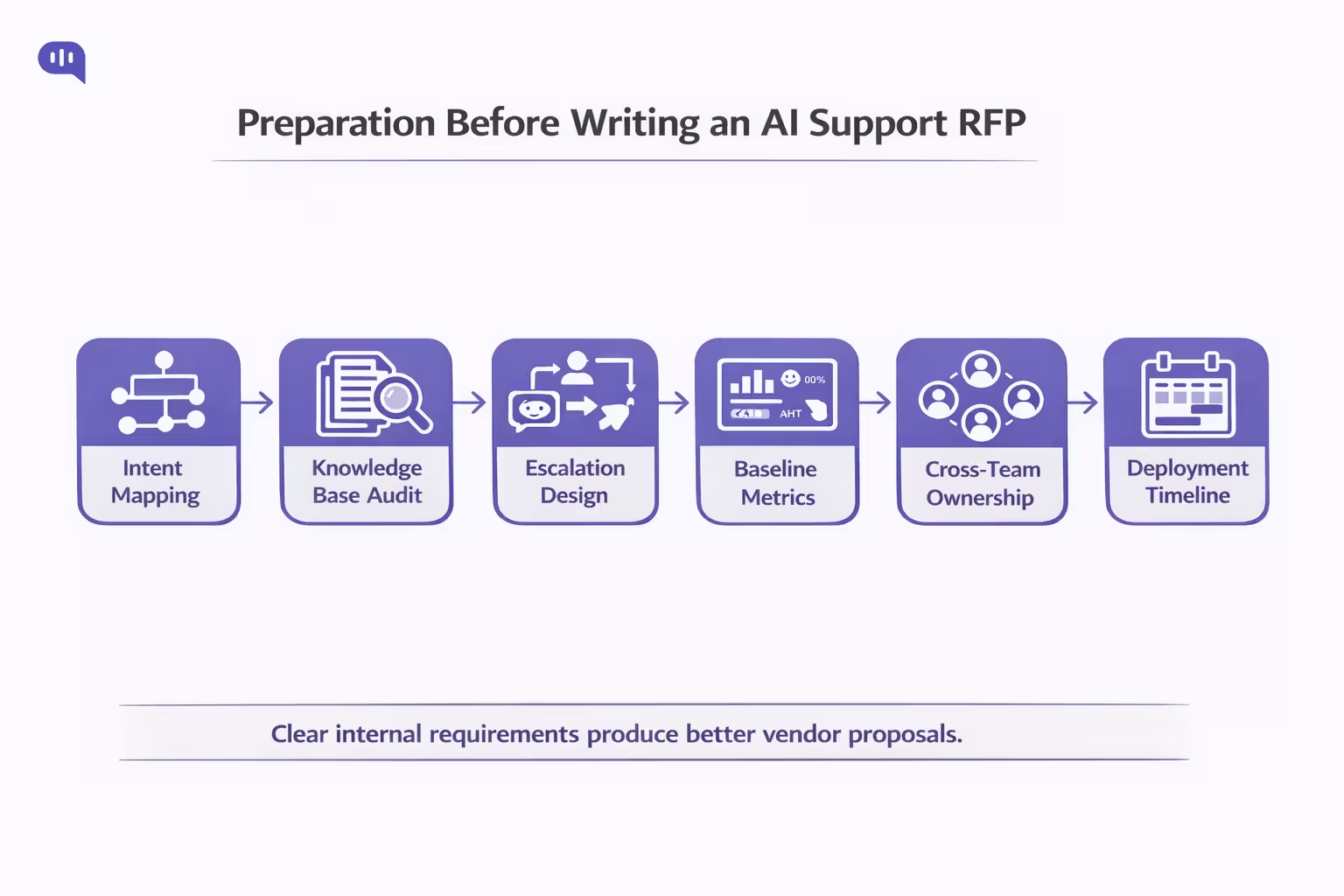

What Should You Gather Before You Write the RFP?

The quality of your RFP is bound by how clearly you’ve defined your own requirements. Before you issue an RFP, you should have the following:

- Map your top 10–15 support intents and separate what the AI should resolve autonomously, assist agents with, and route directly to humans

- Audit your knowledge base — Gartner research found 43% of self-service failures happen because customers cannot find relevant content. The AI amplifies your KB quality; it does not replace it.

- Define escalation logic before asking vendors to design it: escalation triggers, warm vs. cold transfer, context passed at handoff, and a hard limit on AI-to-human-to-AI transitions per ticket.

- Establish baseline metrics: current CSAT, AHT, deflection rate, and first-contact resolution rate; you need these to write meaningful pilot success criteria.

- Assign owners across support ops, IT, security, and legal before issuing; vendors can only respond to what is asked, and if legal isn’t in the room, data training clauses won’t be in the RFP.

- Activation Timeline – Vendors often promise deployments in 2-4 weeks. Public evidence suggests enterprise teams need one to three months for a meaningful production rollout, and longer when integrations, governance, and change management are involved.

Once you have this settled, you can start building your RFP document in earnest. We’ll talk about that next.

RFP Template: Which Features to Ask For and Why?

Kommunicate has worked with enterprise customers in the AI customer service space for over half a decade. This means we have a detailed list of features that fit into different industry verticals.

The most common requests we see are as follows:

1. Functional Capabilities

Ask vendors to describe how they measure containment rate, deflection rate, and resolution rate, and to provide their methodologies.

The AI industry has no standard definition of containment rate. Some vendors count a conversation as “contained” if the customer doesn’t request a human. Others require a confirmed resolution. A vendor citing 90% containment under the first definition and another citing 65% under the second may be performing identically.
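To see how much the definition alone can move the number, here is a minimal Python sketch that scores the same conversations under both definitions. The `Conversation` fields and the 100-conversation sample are hypothetical and only illustrate the arithmetic; they are not any vendor's actual methodology.

```python
from dataclasses import dataclass

@dataclass
class Conversation:
    requested_human: bool     # customer asked for a human agent
    confirmed_resolved: bool  # customer confirmed the issue was resolved

def containment_no_handoff(convs):
    """Definition A: contained if the customer never requested a human."""
    return sum(not c.requested_human for c in convs) / len(convs)

def containment_confirmed(convs):
    """Definition B: contained only if no human was requested AND resolution was confirmed."""
    return sum((not c.requested_human) and c.confirmed_resolved for c in convs) / len(convs)

# Hypothetical sample of 100 conversations
sample = (
    [Conversation(False, True)] * 65     # no handoff, resolution confirmed
    + [Conversation(False, False)] * 25  # no handoff, customer simply left
    + [Conversation(True, False)] * 10   # escalated to a human
)

rate_a = containment_no_handoff(sample)
rate_b = containment_confirmed(sample)
print(f"Definition A: {rate_a:.0%}, Definition B: {rate_b:.0%}")
```

Under these assumptions the same system reports 90% under one definition and 65% under the other, which is why the RFP should ask for the formula as well as the figure.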

Ask what the system does when it cannot determine intent or cannot find a reliable answer. Vendors that claim the system “always escalates appropriately” without defining what appropriate means are not answering the question.

2. Escalation Design

Require vendors to describe the maximum number of AI-to-human-to-AI transitions allowed per ticket before forcing a permanent human path. Without that cap, a loop can form: the AI escalates to a human, the agent applies a template that re-triggers routing logic, the AI flags low confidence and re-escalates, and the SLA clock keeps running on every ticket instance throughout.

This escalation loop pattern burns SLA budget, creates duplicate tickets across channels, and eliminates accountability for resolution. Your RFP should require vendors to explain explicitly how their systems prevent it.
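One way to picture the cap is as a counter on the ticket that pins it to a human once a limit is reached. The following is a rough Python sketch under assumed names (`Ticket`, `hand_off`, and a limit of three transitions are all hypothetical); a vendor's routing engine will look different, but it should enforce something equivalent.

```python
from dataclasses import dataclass

MAX_TRANSITIONS = 3  # hypothetical cap; take the real value from your escalation policy

@dataclass
class Ticket:
    owner: str = "ai"             # current owner: "ai" or "human"
    transitions: int = 0          # number of AI<->human handoffs so far
    pinned_to_human: bool = False

def hand_off(ticket: Ticket, target: str) -> Ticket:
    """Route the ticket to `target`, forcing a permanent human path at the cap."""
    if ticket.pinned_to_human or target == ticket.owner:
        return ticket  # pinned tickets ignore further routing requests
    ticket.transitions += 1
    if ticket.transitions >= MAX_TRANSITIONS:
        ticket.owner = "human"
        ticket.pinned_to_human = True
    else:
        ticket.owner = target
    return ticket

# Simulate a looping ticket: AI -> human -> AI -> human -> AI -> human
ticket = Ticket()
for target in ["human", "ai", "human", "ai", "human"]:
    hand_off(ticket, target)
```

After the third handoff the ticket stays with a human, and later attempts to route it back to the AI are ignored.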

3. Multilingual Requirements

Ask vendors to distinguish between languages that the system can parse and generate text for, languages with production-validated accuracy, and languages with active enterprise customers in production today.

In February 2026, Washington State’s Department of Licensing deployed an AI phone system that reportedly delivered English spoken with a heavy Spanish accent to callers who chose the Spanish option — a consequence of confusing technical language capability with production-grade fluency.

“We support X languages” is not an acceptable answer without evidence of production performance.

4. Agentic Capabilities

If the vendor’s platform can take actions, require a complete list of every action the AI can execute without human approval. Publicly documented cases of support AI issuing the wrong refund or modifying the wrong account are still scarce.

The better-evidenced failure pattern is upstream: bots fabricate policies, make false commitments, and deliver unsafe advice. In 2024, a Canadian tribunal held Air Canada liable after its chatbot told a passenger he could claim a bereavement refund retroactively. The bot didn’t execute the refund: it created a false service commitment with financial consequences.

That failure becomes more operationally severe once action-taking permissions are added. Cursor’s AI support bot offers a more recent example: it invented a company policy about account logins, causing customer confusion and account cancellations.

In addition to these features, you should request specific security capabilities so your customer service AI agent is production-ready out of the box.

RFP Template: Security Requirements: Our Two-Layer Framework

AI vendors introduce risk at two layers: the standard SaaS layer and the AI-specific layer. Both must be addressed explicitly.

Standard Layer (Baseline)

- SOC 2 Type II (not Type I): Type I attests that controls are designed correctly at a point in time. Type II attests they operated effectively over an audit period, commonly six to twelve months. For a vendor holding live customer conversation data, Type I is insufficient.

- SSO/SAML 2.0, MFA enforced for all admin access, RBAC with least-privilege defaults

- Encryption at rest and in transit with documented key management

- Published subprocessor list with contractual change notification (not just a website update)

- Breach notification: require a specific timeframe in the contract. 72 hours is the GDPR standard. Reject any contract using “timely notification” or “promptly” without a numeric commitment.

- Independent penetration testing annually; require a shareable summary under NDA.

AI-Specific Layer

The OWASP Top 10 for LLM Applications identifies the failure modes unique to language model deployments. Five apply directly in customer support contexts:

1. Prompt Injection: An attacker crafts a customer message that overrides system instructions.

Ask vendors: have you conducted red-team exercises specifically designed to break your system prompt through customer inputs? What is logged when an anomalous input is detected?

2. Insecure Output Handling: Acute in agentic deployments. When AI output is passed to another system without validation, it can lead to unintended downstream actions. Ask: How is AI-generated output validated before it reaches integrated systems?

3. Sensitive Information Disclosure: LLMs trained on customer conversation data can inadvertently reproduce fragments of that data in responses to other customers — account details, complaint history, personal information.

Ask: What technical controls prevent cross-tenant data leakage at the model and retrieval layers?

4. Excessive Agency: The AI has been granted permissions beyond what its function requires, and that excess becomes a vector for harm when the system behaves unexpectedly.

Ask: How is the principle of least privilege applied to AI actions, and what human approval gates exist before irreversible actions are executed?

5. Overreliance: Teams treat AI outputs as authoritative without maintaining human oversight mechanisms.

Ask: What accuracy audit mechanisms do you provide that are independent of the AI’s own confidence scoring?
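The excessive-agency question above is easier to evaluate with a concrete picture of what a least-privilege gate looks like. Here is a minimal Python sketch, assuming a default-deny policy table; the action names and the `authorize` helper are hypothetical and do not describe any specific vendor's API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ActionPolicy:
    allowed: bool               # may the AI ever perform this action?
    needs_human_approval: bool  # must a human approve before execution?

# Hypothetical policy table; actions not listed here are denied by default
POLICIES = {
    "lookup_order_status": ActionPolicy(allowed=True, needs_human_approval=False),
    "update_shipping_address": ActionPolicy(allowed=True, needs_human_approval=True),
    "issue_refund": ActionPolicy(allowed=True, needs_human_approval=True),
}

def authorize(action: str, human_approved: bool = False) -> str:
    """Return "execute", "pending_approval", or "deny" for a requested action."""
    policy = POLICIES.get(action)
    if policy is None or not policy.allowed:
        return "deny"  # default-deny for anything not explicitly allowed
    if policy.needs_human_approval and not human_approved:
        return "pending_approval"
    return "execute"

print(authorize("lookup_order_status"))
print(authorize("issue_refund"))
print(authorize("delete_account"))
```

A vendor's answer should let you reconstruct a table like this: which actions exist, which are read-only, and which wait for a human.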

The Most Important Security Question

Will our conversation data be used to train your AI models, and will those improvements be shared with other customers?

Do not accept a general privacy policy reference. Do not accept “we take data privacy seriously.” Require this commitment in the Master Service Agreement — not the Terms of Service, not a help center article. The answer to this question belongs in the document that governs the commercial relationship.

Watch for these specific clause patterns in ToS language: “we may use your data to improve our AI models,” “aggregate and anonymized data may be used to train,” and “usage data may be used to enhance service performance.” If any of these exist without an explicit opt-out right in the MSA, your conversation data may be used to train a shared model without your customers’ knowledge.
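For a first-pass triage of long ToS documents, a simple phrase scan can surface candidates for legal review. The sketch below is a rough Python illustration that only matches the three phrases quoted above; the function name and sample text are hypothetical, and literal matching will miss reworded clauses, so it does not replace a contract review.

```python
import re

# Phrases quoted in the paragraph above; extend the list with your legal team
TRAINING_CLAUSE_PATTERNS = [
    r"use your data to improve our AI models",
    r"aggregate and anonymized data may be used to train",
    r"usage data may be used to enhance service performance",
]

def flag_training_clauses(document_text: str) -> list[str]:
    """Return the patterns found in the text, ignoring case and line wraps."""
    normalized = " ".join(document_text.split())  # collapse whitespace and newlines
    return [
        pattern
        for pattern in TRAINING_CLAUSE_PATTERNS
        if re.search(pattern, normalized, flags=re.IGNORECASE)
    ]

# Hypothetical ToS excerpt, for illustration only
sample_tos = """We may use your data to improve
our AI models and related services."""
hits = flag_training_clauses(sample_tos)
print(hits)
```

Any hit is a prompt to ask for the corresponding opt-out language in the MSA.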

Finally, once the basics of features and security have been settled, you can set some concrete terms for the SLA.

RFP Template: SLA Requirements

Standard SLA frameworks assume the service is binary. AI introduces a third state: available but degraded. So, you need to establish some rules that protect your customers.

Accuracy SLAs: During the pilot, establish a documented accuracy baseline for your specific use case. Require the vendor to commit contractually to maintaining accuracy within a defined tolerance. Re-test on a regular schedule to verify that the commitment holds.

This is not yet standard practice in the market. That absence is precisely why it needs to be in your RFP.

Model drift clauses: AI performance degrades over time as customer language patterns, product offerings, and policy language evolve.

Ask vendors how they monitor for accuracy degradation between major model updates, and what remediation they commit to if accuracy falls below the agreed baseline.
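A tolerance-based accuracy commitment can be checked with very little machinery. The following Python sketch assumes a human-reviewed sample each week and flags weeks where accuracy falls more than the agreed tolerance below the pilot baseline; the baseline (92%), tolerance (3 points), and sample data are hypothetical placeholders.

```python
def accuracy(reviews: list[bool]) -> float:
    """Share of audited responses that a human reviewer marked correct."""
    return sum(reviews) / len(reviews)

def weeks_in_breach(
    baseline: float, tolerance: float, weekly_reviews: list[list[bool]]
) -> list[int]:
    """Indexes of weeks whose accuracy fell more than `tolerance` below baseline."""
    return [
        week
        for week, reviews in enumerate(weekly_reviews)
        if baseline - accuracy(reviews) > tolerance
    ]

# Hypothetical audit samples: 50 human-reviewed responses per week
weekly = [
    [True] * 46 + [False] * 4,  # 92% correct
    [True] * 45 + [False] * 5,  # 90% correct
    [True] * 43 + [False] * 7,  # 86% correct
]
breaches = weeks_in_breach(baseline=0.92, tolerance=0.03, weekly_reviews=weekly)
print(breaches)
```

Only the third week breaches the tolerance here. The point of the sketch is that the check depends on your own review sample, not on the model's confidence scores.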

Rollback rights: When a vendor pushes a model update that degrades performance, you need the contractual right to revert to the previous version.

Require a minimum notice period before model updates (at least 48–72 hours), a documented rollback procedure with a defined execution timeframe, and a freeze period option during business-critical seasons.

AI-Specific Severity Tiers

The standard P1–P4 definitions weren’t designed for AI failure modes. Define them explicitly:

- P1: Mass hallucination event, customer PII exposure in responses, complete AI containment failure, safety policy violation at scale

- P2: Accuracy degradation exceeding the agreed threshold, integration outage affecting live support

- P3: Declining accuracy trend within tolerance, non-critical feature failure

- P4: Reporting discrepancies, cosmetic issues

What to reject: Any SLA using “commercially reasonable efforts,” “best endeavours,” “timely notification,” or “promptly” without a numeric target. This language isn’t a concrete commitment. Replace every instance with specific numbers.

Remedies: When service credits are the only remedy, missing an SLA becomes simply a cost of doing business for the vendor. Require escalating credits for repeated misses and termination rights after a defined breach threshold, for example, three P1 incidents in any 90-day period.
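A breach threshold like "three P1 incidents in 90 days" is a rolling-window rule, and it helps to agree on exactly how the window is counted. Below is a minimal Python sketch under one possible interpretation (any three incidents whose first and last dates are fewer than 90 days apart); the function name and dates are hypothetical, and the contract should state the counting rule explicitly.

```python
from datetime import date, timedelta

def termination_triggered(
    p1_dates: list[date], threshold: int = 3, window_days: int = 90
) -> bool:
    """True if any `threshold` P1 incidents fall inside one rolling window."""
    dates = sorted(p1_dates)
    window = timedelta(days=window_days)
    # Compare each incident with the one `threshold - 1` positions later
    for i in range(len(dates) - threshold + 1):
        if dates[i + threshold - 1] - dates[i] < window:
            return True
    return False

# Hypothetical incident logs
clustered = [date(2026, 1, 5), date(2026, 2, 20), date(2026, 3, 30)]
spread_out = [date(2026, 1, 5), date(2026, 2, 20), date(2026, 6, 1)]
print(termination_triggered(clustered), termination_triggered(spread_out))
```

The clustered incidents span 84 days and trigger the right; the spread-out ones span 147 days and do not.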

Now that we’ve established a core framework for the AI customer service RFP template, let’s create a scoring rubric that you can use to evaluate vendors.

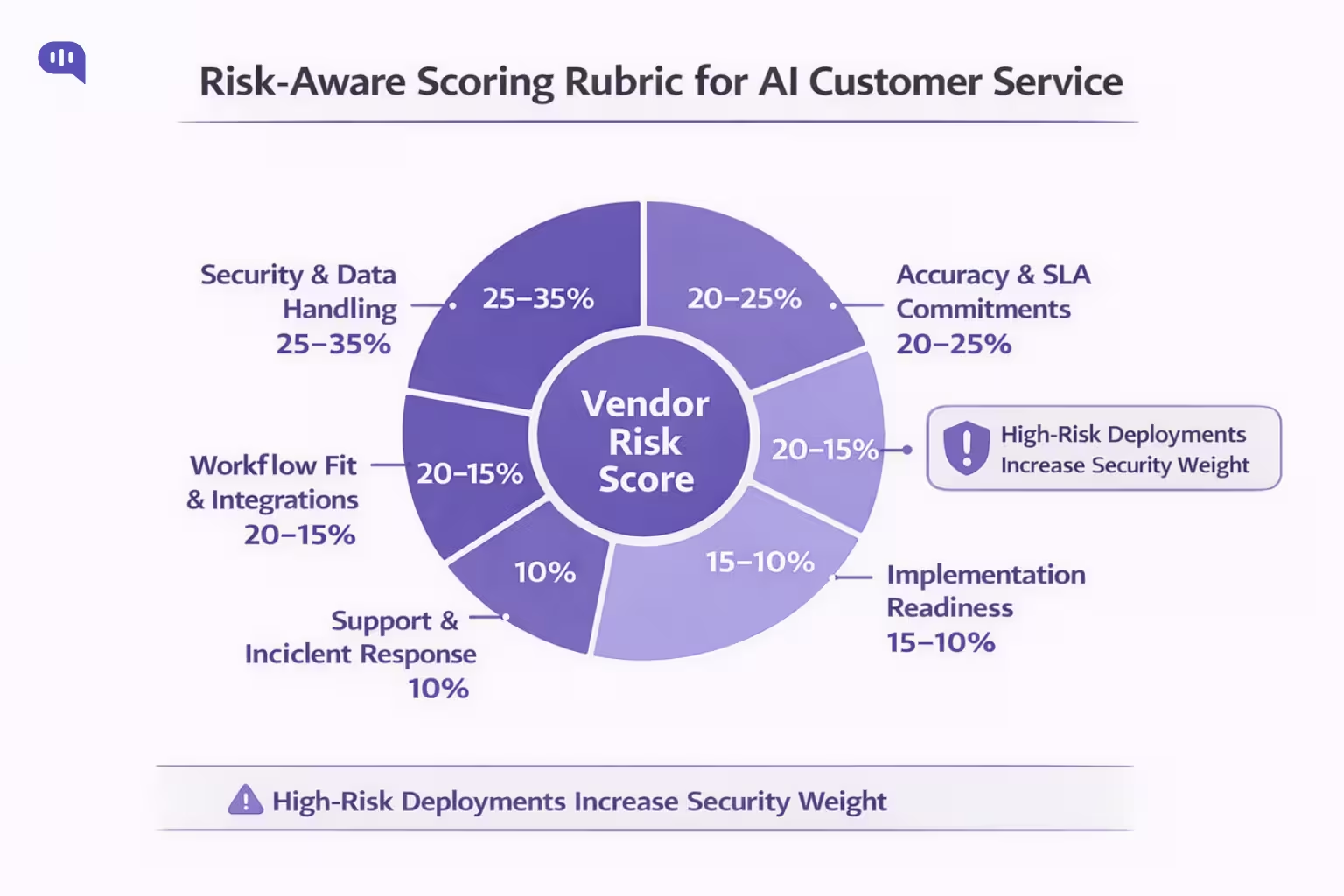

Risk-Aware Scoring Rubric for AI Customer Service

Use two layers: weighted scoring for comparison and hard disqualification thresholds that override the weighted total.

| Category | Standard Weight | High-Risk Weight* |
| --- | --- | --- |
| Security, Data Handling & AI-Specific Controls | 25% | 35% |
| Accuracy, Controllability & SLA Commitments | 20% | 25% |
| Workflow Fit, Escalation Design & Integrations | 20% | 15% |
| Implementation Readiness & Vendor Maturity | 15% | 10% |
| Support Quality & Incident Response | 10% | 10% |
| Pricing and Commercial Terms | 10% | 5% |

*High-Risk Applies When: You process data for regulated populations; you deploy agentic AI with action-taking permissions; your support volume is high enough that a degraded AI has immediate customer-visible impact.

Hard Disqualification Rules

Disqualify any vendor that cannot:

- Confirm in writing that your conversation data will not train any shared model

- Provide documented incident response procedures

- Explain what the system does when it cannot confidently answer a question

- Share any security documentation under NDA

- Commit to specific numeric SLA targets for P1 incidents

These are not negotiating points. They are the minimum threshold for a vendor to be considered a responsible steward of your customer data.
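The two layers combine mechanically: check the disqualification gates first, and compute the weighted total only for vendors that pass. Here is a short Python sketch using the weights from the table above; the category keys, gate names, and the 1–5 scoring scale are assumptions made for illustration.

```python
# Weights in percent, taken from the rubric table above
STANDARD = {"security": 25, "accuracy_sla": 20, "workflow": 20,
            "implementation": 15, "support": 10, "pricing": 10}
HIGH_RISK = {"security": 35, "accuracy_sla": 25, "workflow": 15,
             "implementation": 10, "support": 10, "pricing": 5}

# Hypothetical gate names mirroring the hard disqualification rules
GATES = (
    "no_shared_training_in_writing",
    "incident_response_documented",
    "fallback_behaviour_explained",
    "security_docs_under_nda",
    "numeric_p1_sla",
)

def evaluate(scores: dict, gates: dict, weights: dict):
    """Return (weighted_score, failed_gates); the score is None if any gate fails."""
    failed = [g for g in GATES if not gates.get(g, False)]
    if failed:
        return None, failed  # hard disqualification overrides the weighted total
    total = sum(weights[c] * scores[c] for c in weights) / 100
    return total, []

# Hypothetical vendor scored 1-5 per category
scores = {"security": 5, "accuracy_sla": 5, "workflow": 5,
          "implementation": 5, "support": 5, "pricing": 3}
all_pass = {g: True for g in GATES}
one_fail = {**all_pass, "numeric_p1_sla": False}

print(evaluate(scores, all_pass, STANDARD))
print(evaluate(scores, one_fail, STANDARD))
```

A vendor that fails one gate gets no score at all, however strong its category scores are, which is the behaviour the override is meant to guarantee.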

Alongside these rules, there are several red flags to watch out for as you go through the proposals that land on your desk.

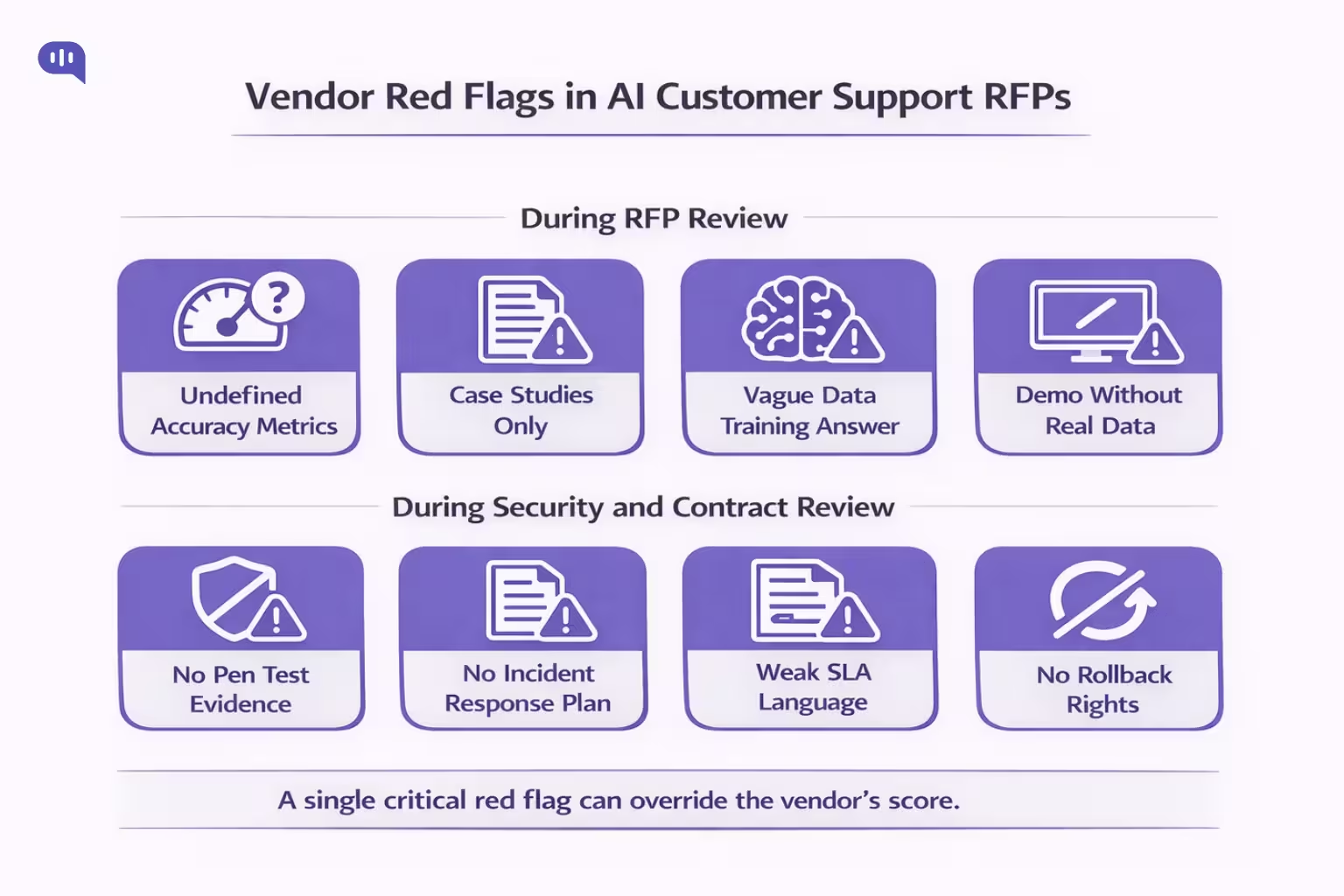

Red Flags That Should Lower or Eliminate a Vendor’s Score

Every stage of proposal review has some risk factors that you can use to eliminate possible candidates:

During the RFP response:

- Accuracy metrics without methodology — a number without a definition is a marketing claim

- Only case studies offered as references; no production customers willing to speak directly

- Vague data training answers (“we are GDPR compliant” is not an answer to whether your data trains shared models)

- Demo environments that don’t test against your actual integrations, knowledge base, or intent categories

During security review:

- Cannot share pen test results under any conditions — even under NDA

- No documented incident response plan, or one that has to be created in response to your request

- No practiced answer on prompt injection defences

In the contract:

- “Commercially reasonable efforts” SLA language

- Data training consent in ToS rather than an explicit opt-out right in the MSA

- Liability caps below meaningful levels — especially for data security incidents

- No rollback right after model updates; vendor has sole discretion over model changes

- Proprietary data formats with no meaningful export pathway — vendor lock-in on exit

In the implementation proposal:

- No knowledge base audit in the onboarding scope

- Timeline under 8 weeks for enterprise deployment

- No defined pilot phase with written, measurable success criteria before full rollout

- No named implementation lead with relevant experience

This should help you narrow down your options and identify the vendor that best fits your AI customer service tool needs. Finally, we’ll bring the above sections together and give you a possible implementation plan.

How can you run a Meaningful Pilot?

Most buyers schedule vendor demos before they’ve finished scoring written proposals. That sequencing works against you. A polished live presentation reliably overrides careful analysis of written commitments.

Evaluate proposals on paper first, rank them against your rubric, then use demos to pressure-test your top two, not to form your first impression.

Once you’ve selected a finalist, structure the pilot to measure what demos cannot.

| Phase | Timeline | What to Do |
| --- | --- | --- |
| Define Scope | Week 1 | Lock intent categories, channels, success thresholds, and volume floor in writing before the pilot begins |
| Set Your Volume Floor | Weeks 2–8 | Target 500–1,000 resolved conversations per intent category. Below this, the accuracy conclusions are not statistically valid |
| Test Failure Modes | Throughout | Design scenarios for recently changed policies, edge-case language inputs, adversarial inputs, and restricted topics |
| Audit Outputs Independently | Weeks 6–8 | Do not rely on the vendor’s confidence scores. Run a human review sample against your own accuracy criteria |
| Go/No-Go Decision | End of Pilot | Compare results against your pre-agreed thresholds. If the pilot cannot fail, it is not measuring anything |
| Negotiate the Contract | Post-Pilot | Use your actual accuracy baseline, not vendor benchmarks, to anchor SLA commitments in the final agreement |

A vendor that performs well only when customers are cooperative is a risk you haven’t measured yet.
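The volume floor in the pilot table follows from how sampling error shrinks with sample size. As a rough Python illustration, the sketch below uses the normal approximation for a proportion at 95% confidence; the assumed 90% accuracy is a placeholder, and the approximation becomes less reliable as accuracy approaches 100% or samples get very small.

```python
import math

def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion."""
    return z * math.sqrt(p * (1 - p) / n)

# Hypothetical measured accuracy of 90% at different pilot volumes
for n in (100, 500, 1000):
    moe = margin_of_error(0.90, n)
    print(f"n={n}: 90% accuracy, plus or minus {moe * 100:.1f} points")
```

At 100 conversations the uncertainty is close to six points either way, which is wider than most accuracy tolerances you would write into an SLA. At 500 to 1,000 it narrows to roughly two to three points.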

Conclusion

Selecting an AI customer support vendor is a risk management decision. The buyers who get it wrong don’t usually pick bad technology. They pick good technology without the contractual protections, security validation, or implementation structure to deploy it safely.

They skip the knowledge base audit, accept “commercially reasonable efforts” SLA language, miss the data training clause buried in the ToS, and find themselves six months in with a degraded model, no rollback right, and a vendor whose liability cap doesn’t cover the damage. This framework exists to close those gaps before you sign, not after.

The RFP is where you set the terms of the relationship. The rubric is how you stay objective when a demo impresses you more than it should. The red flag list is what your legal team needs to review before approving the MSA. Use them together, run a pilot that can actually fail, and base your final contract on what you measured in your environment.

Kommunicate helps support and operations teams deploy AI that works reliably in production. This framework reflects the questions we hear from buyers who’ve been through a failed deployment and the protections every enterprise buyer deserves before signing.

Frequently Asked Questions (FAQs)

What is an AI customer support RFP?

An AI customer support RFP (Request for Proposal) is a structured document that enterprise buyers use to evaluate and compare AI chatbot vendors. Unlike standard SaaS RFPs, it must address AI-specific risks, including hallucination controls, data training clauses, model drift, escalation design, and accuracy SLAs, not just features and pricing.

What should an AI customer support RFP include?

An AI customer support RFP should include functional capability requirements, escalation design specifications, multilingual support criteria, security requirements covering both standard SaaS and AI-specific controls, SLA commitments with numeric targets, a risk-aware vendor scoring rubric, and a structured pilot phase with measurable success criteria.

How should you evaluate AI customer service vendors?

Evaluate AI customer service vendors using a weighted scoring rubric across six categories: security and data handling, accuracy and SLA commitments, workflow fit and escalation design, implementation readiness, support quality, and pricing. Apply hard disqualification rules for vendors that cannot confirm your conversation data won’t train shared models, lack documented incident response procedures, or refuse to commit to numeric SLA targets.

What security requirements should an AI customer support vendor meet?

At minimum, require a SOC 2 Type II report (not Type I), SSO/SAML 2.0 with MFA enforced, encryption at rest and in transit, a published subprocessor list, and a contractual breach notification commitment of 72 hours or less. For AI-specific security, vendors should also have documented defences against prompt injection, cross-tenant data leakage controls, and a principle of least privilege applied to all AI actions.

How should you structure an AI customer support pilot?

Structure the pilot across six phases: define scope in writing before it begins, set a volume floor of 500–1,000 resolved conversations per intent category, test failure modes including adversarial inputs and recently changed policies, audit outputs independently without relying on vendor confidence scores, make a go/no-go decision against pre-agreed thresholds, and use your actual accuracy baseline, not vendor benchmarks, to anchor SLA commitments in the final contract.

Adarsh Kumar is the CTO & Co-Founder at Kommunicate. As a seasoned technologist, he brings over 14 years of experience in software development, artificial intelligence, and machine learning to his role. His expertise in building scalable and robust tech solutions has been instrumental in the company’s growth and success.