Updated on February 13, 2026

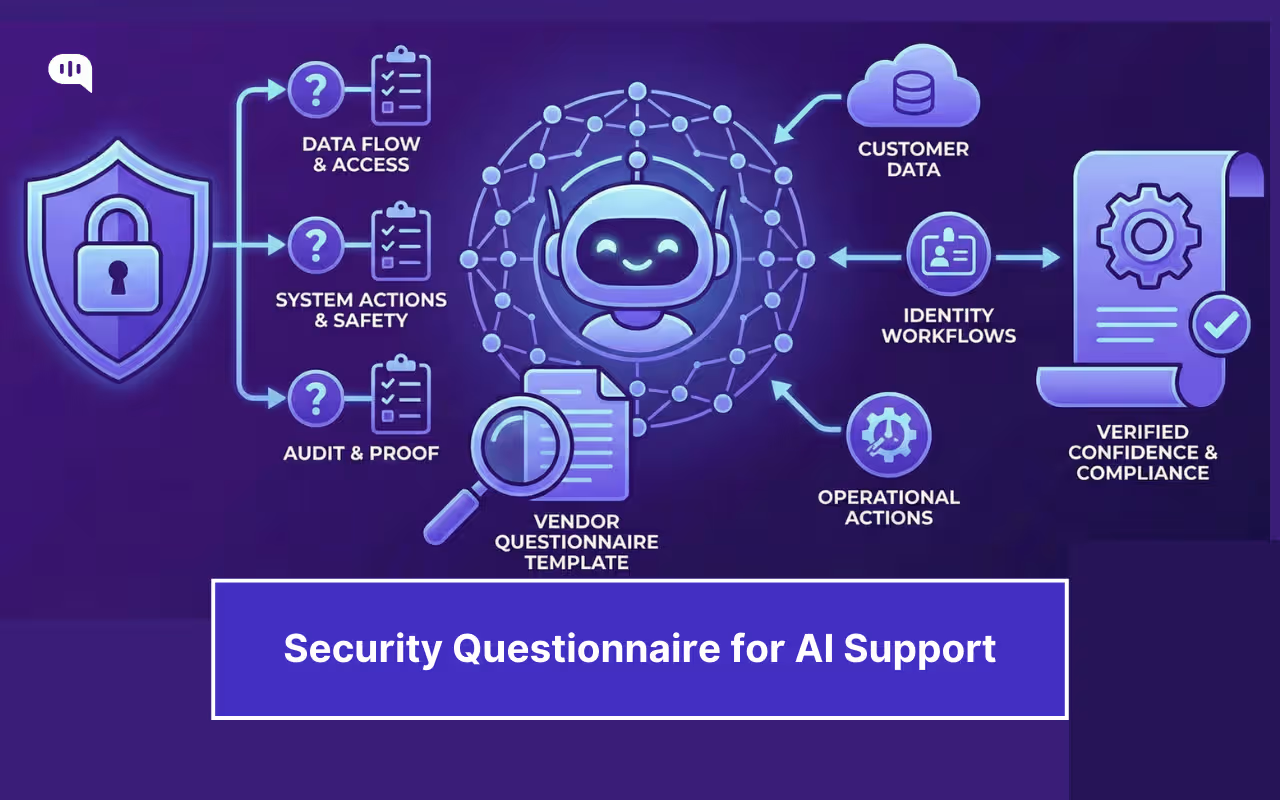

AI support automation sits in the middle of your customer data, identity workflows, and operational actions. The right diligence is not a 200-question checkbox exercise. It is a structured way to answer three risk questions:

1. Where does our data go, and who can touch it?

2. What can the system do, and what stops unsafe actions?

3. If something goes wrong, can we prove what happened?

Below is a copy-pasteable security questionnaire template designed for how people actually search “security questionnaire template” today: standardized baselines (SIG, CAIQ/STAR) plus the AI-specific controls that generic vendor questionnaires do not cover. (Shared Assessments).

We’ll cover:

1. What Automation Are We Enabling?

2. What Baseline Evidence Proves Trust?

3. Who Owns Security Governance Here?

4. Where Does Customer Data Go?

5. Who Can Access What Internally?

6. How Secure Is the Platform?

7. How Will Incidents Be Handled?

11. How Do You Prevent Hallucinations?

12. How Do You Stop Prompt Injection?

13. How Do We Audit Everything?

14. How Do We Validate Answers?

15. Conclusion

What Automation Are We Enabling?

You cannot assess vendor risk until you scope the automation. Keep this short and force clarity.

Ask

- Which channels are in scope (web chat, WhatsApp, email, voice)?

- Which systems are integrated (CRM, ticketing, order system, identity)?

- What data classes are processed (PII, payment, auth artifacts, attachments)?

- What actions can the agent execute (read-only, draft-only, transactional changes)?

- Which regions and residency requirements apply?

Evidence to provide

- High-level data flow diagram + list of integrations and requested permissions.

Once you’ve defined the scope, you can ask for proof that the vendor’s security program is real, current, and relevant to the product you will deploy.

What Baseline Evidence Proves Trust?

Start with standardized artifacts so your review is comparable across vendors.

Ask

- Provide SOC 2 Type II and/or ISO 27001 with explicit scope.

- Provide CSA CAIQ (STAR Level 1) answers, or an equivalent mapping for cloud controls transparency.

- If your process uses Shared Assessments, can the vendor support SIG responses (Standardized Information Gathering responses) or mapping?

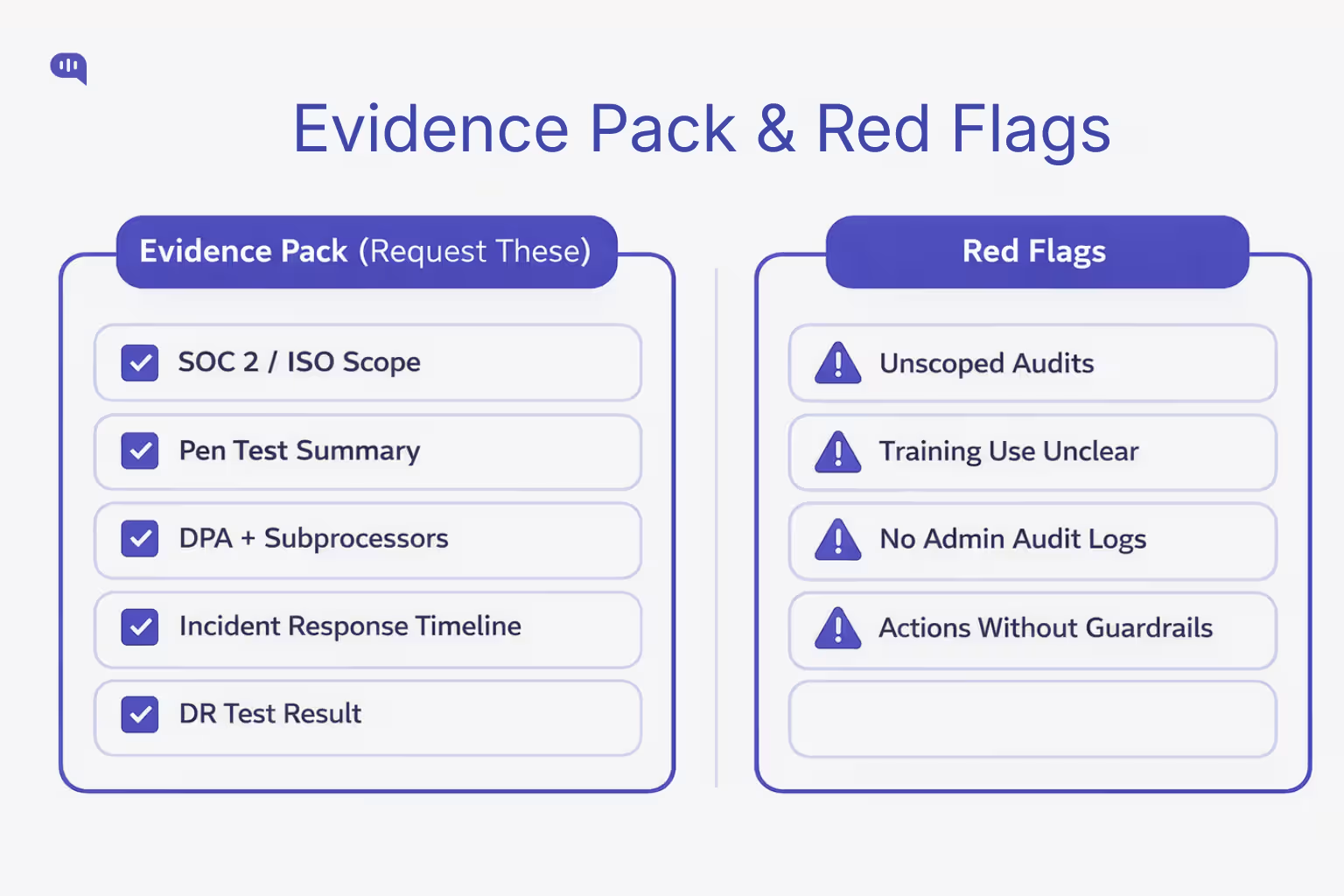

Red flags

- Audit scope excludes the product you will use.

- “We are working on SOC 2” with no timeline or interim controls.

Baseline reports can confirm control coverage, but you still need to know who is accountable for security day to day and how governance actually operates.

Who Owns Security Governance Here?

You are testing accountability, not marketing claims.

Ask

- Who is the named security owner and escalation path?

- What policies exist (secure SDLC, access control, incident response, vendor risk)?

- How are exceptions approved, time-boxed, and logged?

- How often is security training done for engineering and support?

Evidence to provide

- Security policy index or control map, plus ownership by function.

Governance tells you who owns the controls. Next, validate the most important outcome of those controls: strict, predictable handling of your customer data.

Where Does Customer Data Go?

This is the highest information gain section for AI support vendors.

Ask

- What data is collected, derived, and stored (transcripts, summaries, embeddings)?

- Retention defaults and whether retention is configurable by tenant.

- Encryption in transit and at rest, plus key management approach.

- Deletion process and deletion SLA, including backups and derived data.

- Subprocessors list and change notification process.

Evidence to provide

- DPA, subprocessors list, retention schedule, deletion policy excerpt.

Data policies only matter if access to that data is tightly controlled. The next step is to verify least privilege and support access discipline.

Who Can Access What Internally?

Most “AI vendor” incidents become access control failures in practice.

Ask

- SSO/SAML (Single Sign On and Security Assertion Markup Language) and MFA (Multi-Factor Authentication) support, and whether enforcement is tenant-controlled.

- RBAC (Role-Based Access Control) granularity (admin vs agent vs analyst) and least-privilege defaults.

- Vendor support access: is it just-in-time, approved, and time-boxed?

- Audit logs for exports, config changes, permission changes, and admin actions.

Evidence to provide

- RBAC matrix + sample redacted audit logs.

Strong access controls reduce exposure, but you still need assurance the underlying platform is engineered and operated securely.

How Secure Is the Platform?

Here, you test engineering maturity and operational hygiene.

Ask

- Secure SDLC (Software Development Life Cycle) controls: code review, dependency scanning, secret scanning.

- Vulnerability management: SLAs by severity and patch cadence.

- Pen testing: frequency and whether an executive summary is shareable.

- Tenant isolation: how cross-tenant access is prevented and tested.

Evidence to provide

- Pen test executive summary + vulnerability remediation policy and SLAs.

Even mature platforms have incidents. What separates safe vendors from risky ones is how quickly and transparently they respond when something breaks.

How Will Incidents Be Handled?

You want a predictable, time-bound response posture.

Ask

- Incident classification and on-call coverage.

- Customer notification timelines and triggers.

- Forensics readiness: log retention, evidence preservation, legal hold support.

- “What changed after the last incident?” (high signal question)

Evidence to provide

- Incident response excerpt (roles + comms timeline) and log retention policy.

Traditional IR is necessary, but AI vendors introduce an additional risk surface: how prompts, transcripts, and model providers interact with your data.

How Is AI Data Used?

This is where many vendors become unsafe by default.

Ask

- Which models are used and where are they hosted (first-party, third-party)?

- Is customer data used for training by default? If no, how is it enforced?

- Are transcripts, prompts, or attachments shared with model providers?

- Are embeddings or fine-tuning used, and how can customers opt out?

FS-ISAC frames GenAI vendor evaluation as a structured supplement to existing third-party risk programs, with documented outcomes and qualitative risk assessment.

Evidence to provide

- AI data usage policy + AI subprocessors list.

Data governance answers “where data goes.” Next, you must answer “how the AI behaves,” especially when it is uncertain or under attack.

How Do You Prevent Hallucinations?

You are not only buying answers. You are buying safe failure modes.

Ask

- Can answers be restricted to approved sources (grounding via KB or policy docs)?

- What happens on low confidence (refuse, clarify, escalate)?

- How are model or prompt updates regression-tested?

- Can handoff include a structured summary and cited sources?

Evidence to provide

- Example grounded answers (redacted) + evaluation methodology summary.

A system can be well-grounded and still be manipulated. The next layer is adversarial resilience: prompt injection, jailbreaks, and tool abuse.

How Do You Stop Prompt Injection?

Prompt injection is not theoretical in customer-facing automation.

Ask

- How do you isolate system instructions from user content?

- How do you treat untrusted inputs (links, attachments, pasted HTML)?

- Are there policy gates that cannot be overridden by prompts?

- Are tool/action requests validated against policy and permissions?

Evidence to provide

- High-level testing or red-team approach and tool safety controls.

Even strong defenses will fail occasionally. Your final line of control is auditability: the ability to reconstruct decisions, data access, and actions.

How Do We Audit Everything?

If the bot takes actions, you need provable audit trails.

Ask

- Do logs capture configuration changes, retrieved sources, action attempts, and exports?

- Can customers export transcripts, summaries, and action logs for audit?

- Is there tenant-level change history (who changed what, when)?

- What is the log retention window, and can it be extended?

Evidence to provide

- Sample redacted audit export + configuration change history view.

Auditing capabilities are only useful if you actually verify claims up front. Close the loop with a short validation pack that prevents “checkbox security.”

How Do We Validate Answers?

Most questionnaires fail because “Yes” cannot be verified. Vendor assessment guidance repeatedly emphasizes consistency and verifiability as the practical bottlenecks.

Validation Checklist (Request these Documents)

1. SOC 2 Type II and/or ISO 27001 scope statement

2. Pen test executive summary

3. DPA + subprocessors list

4. Incident response excerpt + notification timeline

5. DR (Disaster Recovery) test summary (date + outcome)

Practical pilot rule

- Start with low-risk intents, disable privileged actions, enforce strict logging, and require human approval for sensitive tools until controls are proven.

If a vendor can provide these artifacts, align them to your scope, and demonstrate AI safety controls with audit trails, you can move from “trust” to “verified confidence.”

Conclusion

AI support automation is safest when you treat it like an operational system, not a chatbot. Scope what the agent can access and do, demand baseline evidence that maps to recognized frameworks, and then pressure-test the AI-specific risk surface: data usage, grounding, injection resistance, and action safety.

Finally, verify everything with a tight evidence pack and a low-risk pilot that forces real audit trails. If a vendor can meet those requirements, you are deploying it with controlled exposure and defensible assurance.

If you’re evaluating AI support automation and want a platform that supports secure deployments (grounded answers, configurable access controls, audit-ready logs, and safe handoff patterns), book a demo of Kommunicate to see how to roll out AI support with the right guardrails from day one.

Devashish Mamgain is the CEO & Co-Founder of Kommunicate, with 15+ years of experience in building exceptional AI and chat-based products. He believes the future is human and bot working together and complementing each other.