Updated on February 19, 2026

Churn rarely looks like churn while it is forming. By the time a customer cancels, the underlying story is weeks old: low activation, stalled adoption, repeated friction, and silent disengagement across the account.

In PLG motions, those patterns show up in leading indicators you can actually measure: activation rate, feature adoption depth, retention cohorts, and the share of users who get stuck and ask for help. Every one of those signals compounds into revenue outcomes, because churn is not just lost ARR. It is lost expansion, lost referrals, and a CAC payback period that stops making sense.

AI agents promise a compelling counterpunch. Done well, they guide users to the next step, prevent common onboarding failures, and deliver just-in-time product tips that pull time-to-value forward.

Done poorly, they create a more dangerous outcome: churn camouflage. If the AI layer deflects frustration and “contains” issues without exporting the truth back to product teams, your dashboards improve while the product stays broken. Churn does not disappear. It just gets quieter.

“AI to reduce churn” means using AI agents and automation to improve leading retention indicators such as time-to-value, activation, feature adoption, and customer effort, while ensuring real product issues are surfaced, not hidden.

This article takes both sides seriously: how AI agents can reduce churn, and how to ensure they do not mask the very product issues you must fix to earn retention.

We’ll cover:

1. Can AI Agents Reduce Product Churn?

2. How Do AI Agents Improve Onboarding?

3. How Can AI Agents Help You Reduce Churn?

4. When Can AI Hide Real Problems?

5. Which Metrics Reveal Hidden Churn Risk?

6. How Do You Prevent Churn Camouflage?

7. What Guardrails Keep Product Issues Visible?

8. What Is A 30-Day Deployment Plan?

9. Conclusion

Can AI Agents Reduce Product Churn?

Yes.

In our experience with LULA Loop and Rain, AI agent-led interventions reduced repeated friction, improved responsiveness, and increased operational visibility in ways that directly support retention.

Rain reported performance improvements after deploying the chatbot on mobile and web, with the bot resolving many common inquiries and improving chat deflection, while still maintaining human handoff and continuously optimizing flows to ensure customers receive satisfactory answers.

LULA Loop explicitly anchored its operations to response metrics like MTTA and resolution time, and described using automation to streamline repetitive queries and use analytics to understand demand patterns and bot effectiveness.

The key nuance is scope. AI agents do not “solve churn” as a universal outcome. They influence the parts of churn that are driven by delayed time-to-value, avoidable confusion, and unresolved support friction.

In PLG, those drivers are visible in leading indicators such as activation rate, time-to-value, and feature adoption depth, which are commonly used to diagnose whether users are reaching value or stalling early.

Which Type Of Churn Reduction Can AI Agents Influence?

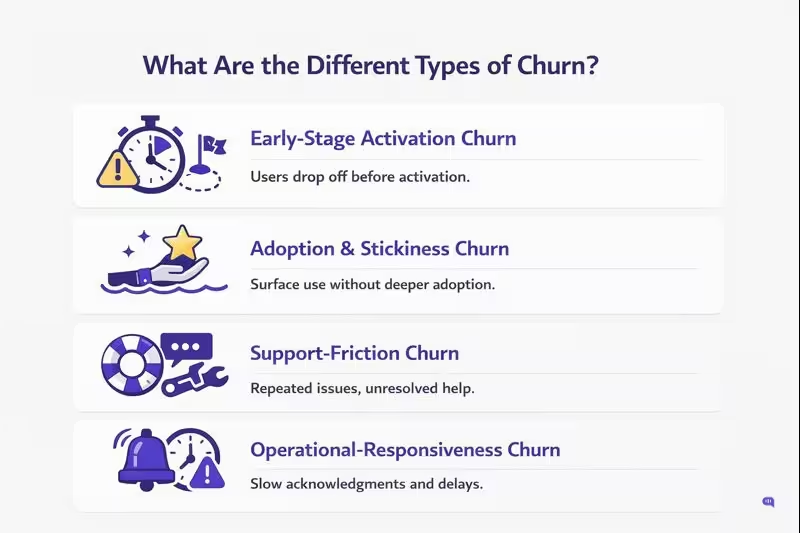

Churn isn’t monolithic, and in practice several different types show up:

1. Early-Stage Activation Churn (Pre-Habit Churn) – AI agents can guide users through setup, unblock onboarding friction, and move users to an activation event faster, which directly improves time-to-value and reduces early abandonment.

2. Adoption And Stickiness Churn (Shallow Usage Churn) – When users only adopt surface features, retention stays fragile. AI agents can recommend next best actions and nudge users toward higher-value workflows, increasing feature adoption depth.

3. Support-Friction Churn (Customer Effort Churn) – If users repeatedly hit the same issues and cannot get timely help, they disengage. AI agents can resolve repetitive, known questions instantly and route complex issues to humans with better context. Rain explicitly maintained human handoff while using the bot to resolve common inquiries and improve deflection.

4. Operational Responsiveness Churn (Slow Acknowledgement Churn) – In high-volume environments, response-time expectations become a retention factor. LULA Loop tied outcomes to MTTA and resolution time targets and used automation plus analytics to improve visibility into query volumes and bot resolution.

Churn caused by weak product-market fit, missing core capabilities, or a fundamental pricing or packaging mismatch falls outside this list. These are primarily product and strategy problems: AI can surface the signals faster, but it cannot replace a product fix.

AI agents can, however, help with the four types of churn listed above.

Where Do AI Agents Actually Fit?

1. In-Product Guidance At The Moment Of Need

AI agents are most effective when embedded in key workflows where users stall. This is where they compress time-to-value and prevent onboarding drop-offs.

2. High-Volume L1 Resolution With Clean Escalation

The right fit is repetitive, low-risk questions and workflows where resolution can be verified. Rain’s approach highlights the pattern: resolve common inquiries, keep an option to connect with a human agent, and continuously optimize flows so answers stay satisfactory rather than purely deflective.

3. Operational Visibility And Feedback For Retention

AI agents should be instrumentation, not just an interface. LULA Loop emphasized reporting on operational parameters like response time and understanding how many queries came in versus how many the bot resolved. That visibility is what lets teams connect automation to retention outcomes instead of treating it as a vanity metric.

AI agents can reduce churn when they shorten time-to-value and remove repeatable friction from the user journey. The next question is how they do this during onboarding, where most PLG churn begins.

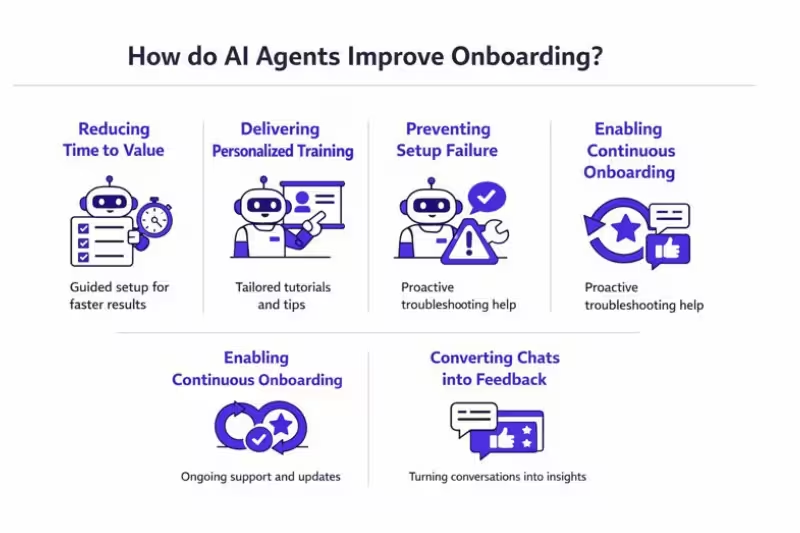

How Do AI Agents Improve Onboarding?

Onboarding succeeds or fails on one variable: how quickly a new user reaches their first meaningful outcome. In PLG terms, that is time-to-value, the time it takes to reach an activation event or “aha” moment.
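To make that definition measurable, here is a minimal Python sketch of activation rate and median time-to-value. It assumes you can export a signup timestamp and an optional activation timestamp per user; the function names and sample data are hypothetical, not part of any specific analytics tool.

```python
from datetime import datetime
from statistics import median


def time_to_value_hours(signup_at, activated_at):
    """Hours from signup to the activation event, or None if never activated."""
    if activated_at is None:
        return None
    return (activated_at - signup_at).total_seconds() / 3600


def onboarding_summary(users):
    """users: list of (signup_at, activated_at or None) tuples."""
    ttvs = [time_to_value_hours(s, a) for s, a in users]
    reached = [t for t in ttvs if t is not None]
    return {
        "activation_rate": len(reached) / len(users) if users else 0.0,
        "median_ttv_hours": median(reached) if reached else None,
    }


# Hypothetical sample: three users activate, one never does.
users = [
    (datetime(2026, 1, 1, 9), datetime(2026, 1, 1, 15)),
    (datetime(2026, 1, 2, 9), datetime(2026, 1, 4, 9)),
    (datetime(2026, 1, 3, 9), None),
    (datetime(2026, 1, 3, 9), datetime(2026, 1, 3, 21)),
]
summary = onboarding_summary(users)
```

The median is used rather than the mean so that a few very slow activations do not hide an improvement for the typical user. Users who never activate are excluded from time-to-value and show up in the activation rate instead.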

In our guide on using AI agents to improve product onboarding and training, we break this down as a practical problem of scale: teams need onboarding that is personalized, available in-product, and consistent across many users and use cases.

AI agents improve onboarding, and through it retention, in the following ways:

1. Reducing Time-To-Value

AI agents remove onboarding delay by answering questions at the exact moment users get stuck, inside the workflow they are trying to complete. That matters because reducing time-to-value is a leading indicator for improving activation and downstream retention.

2. Delivering Personalized Training At Scale

Traditional onboarding assets (tours, walkthroughs, docs) break when users have different intents. The core AI advantage is that you can embed the agent in the product and ground it in your help center and knowledge base so it can respond in a personalized way across many use cases without writing a separate flow for each persona.

This is the “personalized training at scale” mechanism that most teams cannot replicate with human-led onboarding alone.

3. Preventing Avoidable Setup Failures

New users make basic mistakes, and those mistakes often become the first reason they disengage. Our guide calls out “basic troubleshooting” as a direct onboarding benefit, because the agent can correct errors as they happen rather than letting users stall and abandon the journey. This also aligns with common onboarding measurement practice: if you want to improve onboarding outcomes, you track where users get stuck and reduce friction at those steps.

4. Enabling Continuous Onboarding

Onboarding is not a single session. It is continuous training as users encounter advanced features, edge cases, and new workflows. Our guide explicitly positions AI agents as a way to provide continuous training at marginal cost, including coverage when customer success teams are unavailable. This is especially relevant in PLG, where value expansion depends on sustained adoption beyond the initial setup.

5. Converting Chats Into Product Feedback

One of the most underused onboarding benefits is that conversations can become structured “product reviews.” Our guide highlights that onboarding chatbot conversations can reveal bottlenecks and workflows that need simplification, turning support interactions into actionable product insight. This sets up a retention advantage that is not just “better help,” but faster product iteration on real friction.

AI agents improve onboarding when they compress time-to-value, prevent early friction from becoming abandonment, and keep training available as users grow into the product.

Next, we will translate that onboarding capability into churn reduction mechanics, specifically the in-product micro-playbooks and interventions that move retention metrics.

How Can AI Agents Help You Reduce Churn?

AI agents reduce churn when they influence leading indicators that show up before a cancellation, especially time-to-value, activation, and early adoption depth.

Your onboarding experience is also where loyalty often gets decided, which is why onboarding-focused AI interventions tend to show ROI earlier than broad “automation everywhere” rollouts.

| Task | Features | Benefits | Value |
| --- | --- | --- | --- |
| Reduce Time-To-Value In Onboarding | In-product AI guidance, instant answers grounded in onboarding content, step-by-step workflows | Users reach the first win faster, activation improves | Faster time-to-value and higher activation velocity |
| Prevent Setup And Configuration Failures | Troubleshooting flows, guided checklists, context-aware prompts | Fewer abandoned setups and fewer rage exits | Higher completion rates for core setup milestones |
| Drive Feature Adoption Depth | Role-based guidance, next-best-action prompts, persona-aware paths | Users adopt sticky features rather than staying shallow | Increased adoption depth and stronger retention signals |
| Provide Instant L1 Help Without Waiting | Knowledge-based responses, FAQ automation, 24/7 availability | Lower effort to get help, fewer unresolved friction loops | Reduced support-friction churn and lower repeat contacts |
| Improve Responsiveness For High-Volume Support | Smart routing, SLA-aware queues, prioritization | Faster acknowledgements and fewer “nobody replied” moments | Better MTTA and faster resolution in support-heavy products |
| Contain Repetitive Queries With Safe Handoff | Automated resolution for common intents, clear “talk to a human” path | Users get quick answers, complex cases still escalate cleanly | Improved customer experience without breaking trust |
| Reduce Trial Drop-Off And Upgrade Friction | Upgrade guidance, pricing and plan education, contextual nudges | Users understand value and steps needed to convert | Higher trial-to-paid conversion and reduced early churn |
| Personalize Retention Interventions | Behavior-triggered messages, segmentation, intent detection | Right help for the right user at the right time | Higher engagement with key workflows and reduced disengagement |
| Convert Conversations Into Product Insights | Intent taxonomy, tagging, analytics dashboards, friction clustering | Product sees what users struggle with and why | Faster root-cause prioritization for retention-critical fixes |
| Improve Human Resolution Quality | Auto-summaries, context capture, “steps already tried,” evidence collection | Less repetition, higher trust during escalation | Better continuity and fewer failed handoffs |

AI agents can meaningfully reduce churn when they compress time-to-value, remove repeatable friction, and improve responsiveness in the moments users are most likely to disengage.

However, there is a risk. The same automation can hide real product issues if you optimize for containment instead of truth, which is exactly what we address next.

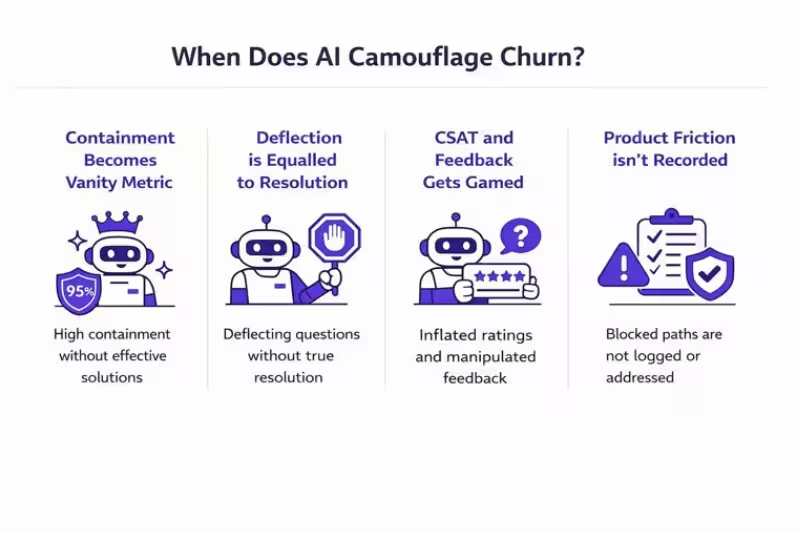

When Can AI Hide Real Problems?

Goodhart’s Law is the cleanest way to explain this failure mode: when a measure becomes a target, it stops measuring what you think it measures.

In retention programs, this shows up when teams optimize AI agents for “making conversations go away” instead of eliminating the underlying friction that causes churn.

Containment Turns Into A Vanity Target

- Containment is often interpreted as success because the user did not reach a human, but that does not prove the user achieved the outcome they came for.

- When containment is rewarded, teams unintentionally bias the AI to avoid escalation, even when escalation is the correct outcome for complex or high-stakes issues.

- At extreme levels, you can get “great” containment while actual experience quality is poor if you do not audit transcripts and outcomes.

Deflection Gets Confused With Resolution

- Deflection measures tickets avoided, not problems solved, so it can improve while users remain stuck.

- If you do not require an outcome confirmation (task completion, successful action, explicit user confirmation), the system can “win” deflection by closing loops fast, not by resolving root causes.

CSAT And Feedback Signals Get Gamed

- Once a score becomes a goal, behavior shifts toward producing the score rather than improving the experience, which is a classic Goodhart pattern.

- In practice, this can look like survey timing tweaks, selective prompting, or steering language that lifts CSAT without changing product friction.

Product Friction Stops Reaching The Product Team

- When AI absorbs confusion, the volume of explicit complaints and tickets can drop, which reduces the raw signal that usually feeds triage and backlog prioritization.

- The most dangerous version of this is not incorrect answers. It is correct answers that cover for broken UX, so the product never gets fixed.

Silent Churn Becomes More Likely

- Some customers do not escalate. They disengage quietly, and a drop in tickets can actually be an early warning sign rather than a win.

- If AI success is defined as “fewer conversations,” you can accidentally optimize for users leaving the channel, not staying in the product.

This is why the next section has to be measurement-first: you need metrics that reveal hidden churn risk even when containment, deflection, and CSAT appear to be improving.

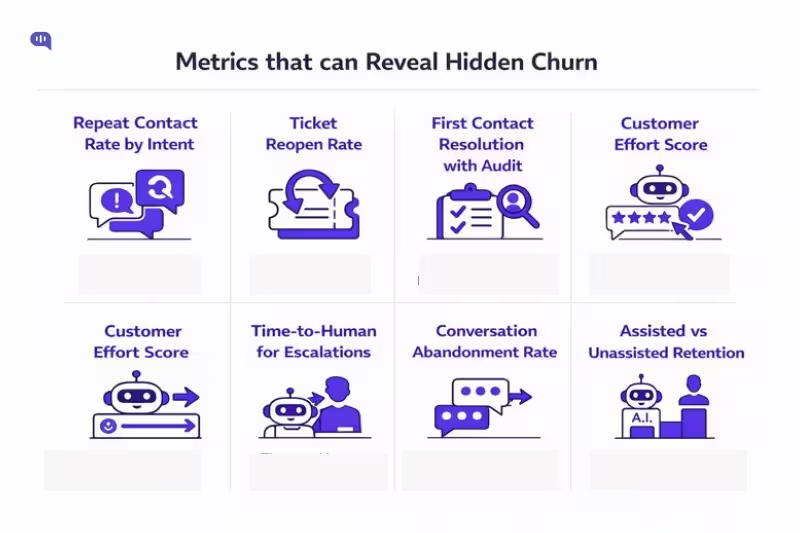

Which Metrics Reveal Hidden Churn Risk?

If AI agents are optimized for targets like containment or deflection, you need anti-Goodhart metrics that reflect outcomes, not “quiet.” Goodhart’s Law captures the risk: once a measure becomes the goal, behavior shifts to “win” the measure instead of improving the underlying reality.

1. Repeat Contact Rate By Intent

- What it is: The share of issues that require customers to contact you again for the same problem within a defined window.

- Why it reveals masking: If containment rises but repeat contacts also rise, the bot is likely ending conversations without durable resolution.

- How to use it: Track by intent category (onboarding, integrations, billing, bugs) to pinpoint friction hotspots that AI might be “buffering” instead of fixing.
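As a rough illustration of the definition above, the following Python sketch computes repeat contact rate per intent. It assumes each contact record carries a user ID, an intent label, and a timestamp, and it uses a 7-day window; all of these names and the window length are assumptions you would adapt to your own data.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def repeat_contact_rate_by_intent(contacts, window_days=7):
    """Share of contacts followed by another contact from the same user
    on the same intent within `window_days`."""
    window = timedelta(days=window_days)
    timeline = defaultdict(list)
    for c in contacts:
        timeline[(c["user_id"], c["intent"])].append(c["ts"])

    totals, repeats = defaultdict(int), defaultdict(int)
    for (_user, intent), stamps in timeline.items():
        stamps = sorted(stamps)
        # Pair each contact with the next one from the same user and intent.
        for current, following in zip(stamps, stamps[1:] + [None]):
            totals[intent] += 1
            if following is not None and following - current <= window:
                repeats[intent] += 1
    return {intent: repeats[intent] / totals[intent] for intent in totals}


# Hypothetical contact log covering bot and human channels.
contacts = [
    {"user_id": "u1", "intent": "integrations", "ts": datetime(2026, 1, 1)},
    {"user_id": "u1", "intent": "integrations", "ts": datetime(2026, 1, 3)},
    {"user_id": "u1", "intent": "integrations", "ts": datetime(2026, 1, 20)},
    {"user_id": "u2", "intent": "integrations", "ts": datetime(2026, 1, 5)},
    {"user_id": "u3", "intent": "billing", "ts": datetime(2026, 1, 2)},
]
rates = repeat_contact_rate_by_intent(contacts)
```

Counting contacts across every channel matters here: a user who leaves the bot and emails support two days later is still a repeat contact, even though the bot conversation looked contained.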

2. Ticket Reopen Rate

- What it is: The percentage of solved/closed tickets that get reopened by the customer.

- Why it reveals masking: Reopens are a hard-to-game signal that “resolved” was not actually resolved.

- How to use it: Segment reopens for bot-assisted vs human-only resolutions to detect where AI outcomes degrade.
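The segmentation described above can be sketched in a few lines of Python. This assumes each closed ticket records who resolved it and whether the customer reopened it; the `resolved_by` and `reopened` field names are hypothetical.

```python
from collections import Counter


def reopen_rate_by_resolver(tickets):
    """Reopen rate for closed tickets, split by who resolved them."""
    closed, reopened = Counter(), Counter()
    for t in tickets:
        closed[t["resolved_by"]] += 1
        if t["reopened"]:
            reopened[t["resolved_by"]] += 1
    return {who: reopened[who] / closed[who] for who in closed}


# Hypothetical closed tickets.
tickets = [
    {"id": 1, "resolved_by": "bot", "reopened": True},
    {"id": 2, "resolved_by": "bot", "reopened": False},
    {"id": 3, "resolved_by": "bot", "reopened": True},
    {"id": 4, "resolved_by": "bot", "reopened": False},
    {"id": 5, "resolved_by": "human", "reopened": False},
    {"id": 6, "resolved_by": "human", "reopened": True},
    {"id": 7, "resolved_by": "human", "reopened": False},
    {"id": 8, "resolved_by": "human", "reopened": False},
]
reopen_rates = reopen_rate_by_resolver(tickets)
```

A bot reopen rate well above the human rate on the same intents suggests that the bot is closing conversations it has not actually resolved.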

3. First Contact Resolution With Audit

- What it is: The percentage of issues resolved on the first interaction.

- Why it reveals masking: FCR can look good if definitions are loose, so it should be paired with repeat-contact and reopen checks to prevent “paper wins.”

- How to use it: Audit a sample of “resolved” conversations weekly for outcome completeness (did the user actually complete the task).

4. Customer Effort Score

- What it is: Customer Effort Score is a metric that measures how much effort customers expend to get something done or get an issue resolved.

- Why it reveals masking: Effort rises when users are stuck in loops, forced to repeat context, or bounced between bot and human, even if deflection looks “good.”

- How to use it: Trigger CES specifically after bot interactions on high-friction intents (setup, integrations, permissions, exports).

5. Time-To-Human For Escalations

- What it is: How long it takes an escalated user to reach a human, closely related to first response time / initial engagement speed.

- Why it reveals masking: If time-to-human increases, the AI may be slowing down real help to protect containment.

- How to use it: Track separately for “high-stakes” intents (billing disputes, access issues, outages) where delay is most costly.
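One way to track this, sketched in Python under the assumption that each escalation logs the moment it was triggered and the moment a human joined (or `None` if nobody did). The field names are illustrative only.

```python
from datetime import datetime
from statistics import median


def time_to_human_by_intent(escalations):
    """Median minutes from escalation to human pickup, per intent,
    plus a count of escalations that never reached a human."""
    waits, never_reached = {}, {}
    for e in escalations:
        intent = e["intent"]
        if e["human_joined_at"] is None:
            never_reached[intent] = never_reached.get(intent, 0) + 1
            continue
        delta = e["human_joined_at"] - e["escalated_at"]
        waits.setdefault(intent, []).append(delta.total_seconds() / 60)
    return {
        intent: {
            "median_minutes": median(waits[intent]) if intent in waits else None,
            "never_reached": never_reached.get(intent, 0),
        }
        for intent in set(waits) | set(never_reached)
    }


# Hypothetical escalation log.
escalations = [
    {"intent": "billing_dispute", "escalated_at": datetime(2026, 1, 5, 10, 0),
     "human_joined_at": datetime(2026, 1, 5, 10, 4)},
    {"intent": "billing_dispute", "escalated_at": datetime(2026, 1, 5, 11, 0),
     "human_joined_at": datetime(2026, 1, 5, 11, 10)},
    {"intent": "billing_dispute", "escalated_at": datetime(2026, 1, 5, 12, 0),
     "human_joined_at": None},
    {"intent": "outage", "escalated_at": datetime(2026, 1, 5, 9, 0),
     "human_joined_at": datetime(2026, 1, 5, 9, 2)},
]
time_to_human = time_to_human_by_intent(escalations)
```

Escalations that never reach a human are counted separately rather than dropped, because silently excluding them would make the median look better exactly when the handoff is failing.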

6. Conversation Abandonment Rate

- What it is: The share of users who drop the conversation before confirming resolution or before escalation completes (a practical “gave up” signal).

- Why it reveals masking: Users abandoning the chat is often silent churn in motion, especially if response times slip (live-chat users frequently expect fast engagement).

- How to use it: Monitor abandonment spikes after specific bot prompts to identify “loop points” and broken flows.
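To find the “loop points” mentioned above, you can attribute each abandoned conversation to the last bot prompt the user saw. The Python sketch below assumes each conversation stores an ordered list of prompt IDs and a final outcome of `confirmed`, `escalated`, or `abandoned`; those labels and prompt names are hypothetical.

```python
from collections import Counter


def abandonment_by_prompt(conversations):
    """For each bot prompt: abandoned conversations that ended on it,
    divided by conversations in which it was shown."""
    shown, dropped = Counter(), Counter()
    for c in conversations:
        for prompt in set(c["prompts"]):
            shown[prompt] += 1
        if c["outcome"] == "abandoned" and c["prompts"]:
            dropped[c["prompts"][-1]] += 1
    return {prompt: dropped[prompt] / shown[prompt] for prompt in shown}


# Hypothetical conversation outcomes.
conversations = [
    {"prompts": ["greet", "ask_api_key"], "outcome": "abandoned"},
    {"prompts": ["greet", "ask_api_key"], "outcome": "abandoned"},
    {"prompts": ["greet", "ask_api_key", "confirm"], "outcome": "confirmed"},
    {"prompts": ["greet"], "outcome": "escalated"},
]
drop_rates = abandonment_by_prompt(conversations)
worst_prompt = max(drop_rates, key=drop_rates.get)
```

In this made-up sample, most users who reach `ask_api_key` give up there, which points at a confusing step in the product or the flow rather than at the users.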

7. Assisted Vs Unassisted Retention Cohorts

- What it is: Cohort analysis compares retention/churn patterns across user groups over time.

- Why it reveals masking: If bot-assisted users show no improvement in retention (or worse retention) despite better support KPIs, your AI is likely treating symptoms, not causes.

- How to use it: Run cohorts by “used AI in first 7 days” vs “did not,” and by intent type, to see where AI truly changes outcomes.
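A minimal Python sketch of that cohort split, assuming a per-user flag for “used AI in first 7 days” and a flag for whether the user was still active on day 30. The day-30 cutoff and the field names are assumptions for illustration.

```python
def retention_by_cohort(users):
    """Day-30 retention for users who used the AI agent in their
    first 7 days versus those who did not."""
    counts = {True: [0, 0], False: [0, 0]}  # assisted? -> [users, retained]
    for u in users:
        bucket = counts[u["used_ai_first_7d"]]
        bucket[0] += 1
        bucket[1] += int(u["active_day_30"])
    labels = {True: "assisted", False: "unassisted"}
    return {
        labels[key]: (retained / total if total else None)
        for key, (total, retained) in counts.items()
    }


# Hypothetical cohort data.
cohort_users = [
    {"used_ai_first_7d": True, "active_day_30": True},
    {"used_ai_first_7d": True, "active_day_30": True},
    {"used_ai_first_7d": True, "active_day_30": True},
    {"used_ai_first_7d": True, "active_day_30": False},
    {"used_ai_first_7d": False, "active_day_30": True},
    {"used_ai_first_7d": False, "active_day_30": True},
    {"used_ai_first_7d": False, "active_day_30": False},
    {"used_ai_first_7d": False, "active_day_30": False},
]
retention = retention_by_cohort(cohort_users)
```

Treat the gap between cohorts as a correlation, not proof of impact: users who choose to talk to the agent may differ from those who do not. A randomized holdout gives a cleaner comparison.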

These metrics work best as a bundle: repeats, reopens, effort, time-to-human, and cohort retention will expose churn risk even when containment or deflection looks strong.

You can prevent this failure mode by designing the operating model so AI exports product truth instead of absorbing it.

How Do You Prevent Churn Camouflage?

Preventing churn camouflage is a measurement and operating-model problem. There are a few ways to solve it:

Set Outcome-Based Success Definitions

Define “resolved” as a verified outcome, not an answered message. Require one of the following before closing:

- Explicit user confirmation

- A captured success event in-product (where applicable)

- A human escalation for non-verifiable intents

Treat containment as a cost metric, not a product-health metric. Containment alone does not tell you whether the issue was actually resolved.
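The closing rule above can be expressed as a small decision function. This Python sketch is one possible encoding, not a prescribed implementation; the `Conversation` fields and the status labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    user_confirmed: bool = False       # user explicitly said the issue is solved
    success_event_seen: bool = False   # in-product success event was captured
    escalated_to_human: bool = False
    intent_verifiable: bool = True     # can the outcome be checked in-product?


def closure_status(c):
    """Only a verified outcome counts as resolved; an answered message does not."""
    if c.user_confirmed or c.success_event_seen:
        return "resolved"
    if c.escalated_to_human:
        return "escalated"
    # No proof of outcome: hand off if it cannot be verified, else follow up.
    return "needs_follow_up" if c.intent_verifiable else "escalate_now"


answered_only = closure_status(Conversation())
verified = closure_status(Conversation(success_event_seen=True))
```

The important property is that a conversation where the bot merely answered falls into `needs_follow_up` or `escalate_now`, so it cannot inflate the resolved count.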

Protect Escalation Paths With Hard Guardrails

Create non-negotiable escalation triggers for:

- Billing disputes

- Account access

- Security

- Compliance

- Outages

- High-value accounts

Track time-to-human for escalations as a safety metric, so your AI does not “stall” customers to protect containment.
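A hard guardrail is easiest to audit when it lives in one place and runs before any bot logic. Here is a minimal Python sketch, assuming your intent classifier emits labels like the ones below; the label strings and the `is_high_value_account` flag are hypothetical.

```python
# Intents that must always reach a human, mirroring the list above.
ALWAYS_ESCALATE = frozenset({
    "billing_dispute",
    "account_access",
    "security",
    "compliance",
    "outage",
})


def must_escalate(intent, is_high_value_account=False):
    """True when the conversation has to go to a human, regardless of
    how confident the bot is in its own answer."""
    return is_high_value_account or intent in ALWAYS_ESCALATE


routine = must_escalate("password_reset_howto")
dispute = must_escalate("billing_dispute")
vip = must_escalate("password_reset_howto", is_high_value_account=True)
```

Keeping the check independent of bot confidence is deliberate: the trigger should fire even when the model believes it can handle the request.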

Use Metric Bundles That Resist Gaming

Pair every “efficiency” metric with an “outcome” metric:

- Containment and deflection paired with repeat-contact rate and reopen rate

- Faster first response time paired with customer effort and post-interaction confirmation

Review these as a bundle so a single number cannot be optimized in isolation.

Convert Conversations Into A Product Truth Loop

Implement an intent and friction taxonomy that maps directly to product action:

- UX confusion

- Bug/performance

- Missing capability

- Integration complexity

- Packaging mismatch

Route each category to an owner and attach evidence (links, steps, screenshots) so “support truth” becomes “product work.”
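One way to make this taxonomy enforceable is to model it as a closed set of categories with an owner per category. The Python sketch below is illustrative: the owner team names, the `FrictionReport` fields, and the ticket shape are all assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class Friction(Enum):
    UX_CONFUSION = "ux_confusion"
    BUG_PERFORMANCE = "bug_performance"
    MISSING_CAPABILITY = "missing_capability"
    INTEGRATION_COMPLEXITY = "integration_complexity"
    PACKAGING_MISMATCH = "packaging_mismatch"


# Hypothetical owner mapping; replace with your own teams.
OWNERS = {
    Friction.UX_CONFUSION: "design",
    Friction.BUG_PERFORMANCE: "engineering",
    Friction.MISSING_CAPABILITY: "product",
    Friction.INTEGRATION_COMPLEXITY: "integrations",
    Friction.PACKAGING_MISMATCH: "pricing",
}


@dataclass
class FrictionReport:
    category: Friction
    summary: str
    steps_attempted: list = field(default_factory=list)
    evidence_links: list = field(default_factory=list)


def to_backlog_item(report):
    """Turn a tagged conversation into an owned, evidence-backed work item."""
    return {
        "owner": OWNERS[report.category],
        "title": f"[{report.category.value}] {report.summary}",
        "steps_attempted": list(report.steps_attempted),
        "evidence_links": list(report.evidence_links),
    }


item = to_backlog_item(FrictionReport(
    category=Friction.INTEGRATION_COMPLEXITY,
    summary="Users cannot find where to paste the API key",
    steps_attempted=["Opened settings", "Searched docs"],
    evidence_links=["conversation-link-placeholder"],
))
```

Because `OWNERS` covers every enum member, a conversation cannot be tagged with a category that nobody owns.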

Use Insights To Turn Noise Into Signal

Kommunicate Insights is designed for exactly this truth-preservation layer. It evaluates customer interactions to surface trends and improvement areas, and it supports analyzing either Zendesk tickets or conversation history as the data source.

You can ask natural-language questions or select from prebuilt prompts, and the output includes a detailed analysis with conversation links so teams can audit what is actually happening instead of trusting aggregate metrics.

Run Holdouts And Quality Audits

- Run a small holdout where a cohort receives reduced automation, then compare retention, repeat contact, and escalations across cohorts

- Audit a weekly sample of “resolved” AI conversations for correctness, outcome confirmation, and whether the issue should have been escalated
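For the holdout, assignment should be stable so a user does not flip between cohorts across sessions. A common approach, sketched here in Python, is to hash the user ID into a bucket. The 5% size and the salt string are assumptions to adapt, not recommendations.

```python
import hashlib


def in_holdout(user_id, holdout_pct=5, salt="ai-holdout-v1"):
    """Deterministically place roughly `holdout_pct` percent of users
    in the reduced-automation cohort."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < holdout_pct


# Hypothetical user IDs, used only to check the split size.
sample_ids = [f"user-{i}" for i in range(10_000)]
holdout_share = sum(in_holdout(uid) for uid in sample_ids) / len(sample_ids)
```

Changing the salt reshuffles the cohorts, which is useful when you start a new experiment and do not want the same users held out again.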

This is how you keep AI agents retention-positive while ensuring product reality stays visible. Next, we turn these practices into concrete guardrails and then into an actionable rollout plan.

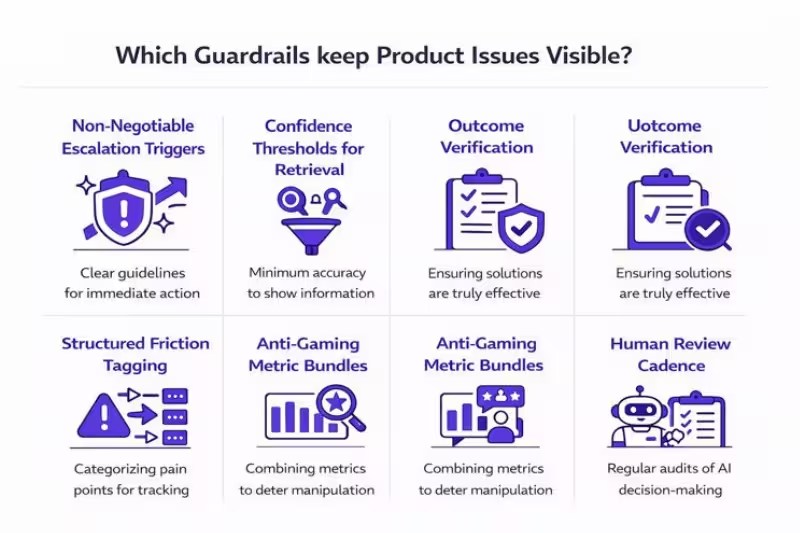

What Guardrails Keep Product Issues Visible?

Guardrails keep your AI agent from “winning” proxy metrics (like containment) while product friction stays unresolved. The goal is simple: every conversation should end in a verified outcome, a clean escalation, or a structured signal that reaches the product backlog.

Non-Negotiable Escalation Triggers

- Define escalation triggers for high-stakes intents (account access, security, billing disputes, outages) and make them impossible to override in bot flows.

- Treat escalation as a safety feature, not a failure state, because AI systems can appear confident even when they should defer.

- Make the path to a human obvious and fast, so users do not get trapped in loops.

Confidence Thresholds And Retrieval Quality Checks

- Use confidence thresholds to trigger human handoff when retrieval quality drops or the bot cannot ground an answer reliably.

- Run regular evaluation suites to catch regressions in accuracy and escalation behavior as your knowledge base and product change.
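The threshold rule above can be sketched as a simple gate in front of the answer step. This Python example assumes your retrieval layer exposes a relevance score between 0 and 1 and a count of grounding passages; both inputs and the 0.7 cutoff are hypothetical and would need tuning against your own evaluation suite.

```python
def next_action(retrieval_score, grounded_passages, min_score=0.7):
    """Hand off to a human when the bot cannot ground its answer
    or when retrieval quality falls below the threshold."""
    if grounded_passages == 0:
        return "handoff"
    if retrieval_score < min_score:
        return "handoff"
    return "answer"


# A tiny regression suite: (score, passages, expected action).
EVAL_CASES = [
    (0.92, 3, "answer"),
    (0.55, 2, "handoff"),
    (0.95, 0, "handoff"),
]
failures = [
    case for case in EVAL_CASES
    if next_action(case[0], case[1]) != case[2]
]
```

Keeping a fixed list of cases like `EVAL_CASES` and rerunning it whenever the knowledge base or the threshold changes is a lightweight way to catch regressions in escalation behavior.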

Outcome Verification Before Closure

- Require an outcome confirmation for intents that should be verifiable (for example, “integration connected,” “report generated,” “setting saved”). This reduces “fast closures” that look good in dashboards but fail users.

- If verification is not possible, default to escalation or an explicit follow-up check instead of auto-closing the interaction.

Structured Friction Tagging That Feeds Product

- Force every bot-assisted resolution and escalation into a friction taxonomy that maps to product action (UX confusion, bug/performance, missing capability, integration complexity, packaging mismatch).

- Attach evidence, including user goal, steps attempted, and failure point, so product teams get reproducible signals instead of vague complaints. This aligns with the map, measure, and manage functions of the NIST AI Risk Management Framework, which treat monitoring risk and impact after deployment as an ongoing activity.

Anti-Gaming Metric Bundles And Threshold Alerts

- Do not use containment as a standalone success metric, because deflecting a customer does not prove the issue was resolved.

- Pair efficiency metrics with outcome metrics that expose masking, such as reopen rate, repeat contact, and effort, then alert on divergence (containment up while reopens or effort rise).
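The divergence alert described above can be sketched as a comparison between two reporting periods. This Python example assumes each period is summarized as a dictionary of rates, and that a higher `effort_score` means more customer effort; the metric names and that scoring direction are assumptions.

```python
OUTCOME_METRICS = ("reopen_rate", "repeat_contact_rate", "effort_score")


def masking_alerts(previous, current, tolerance=0.0):
    """Flag periods where containment improved while an outcome metric got worse."""
    alerts = []
    if current["containment"] <= previous["containment"]:
        return alerts
    for metric in OUTCOME_METRICS:
        if current[metric] > previous[metric] + tolerance:
            alerts.append(f"containment up while {metric} rose")
    return alerts


# Hypothetical weekly summaries.
last_week = {"containment": 0.60, "reopen_rate": 0.05,
             "repeat_contact_rate": 0.10, "effort_score": 2.1}
this_week = {"containment": 0.70, "reopen_rate": 0.09,
             "repeat_contact_rate": 0.10, "effort_score": 2.0}
alerts = masking_alerts(last_week, this_week)
```

The `tolerance` parameter lets you ignore small week-to-week noise; setting it too high would defeat the purpose of the alert.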

Human Review Cadence And Accountability

- Review a weekly sample of “resolved” conversations to validate correctness, outcome confirmation, and whether the interaction should have been escalated.

- Assign clear owners for guardrail breaches so the system improves instead of normalizing failure patterns, consistent with established AI risk governance practices.

With these guardrails, AI stays retention-positive while continuously exporting product truth. Next, you can operationalize this with a 30-day rollout plan that ships value quickly without sacrificing visibility.

What Is A 30-Day Deployment Plan?

A 30-day rollout works when you ship onboarding value fast while instrumenting outcome metrics that keep product friction visible, especially time-to-value and activation velocity. We recommend the following approach:

| Phase | Timeline | Focus | What You Implement | Key Deliverables | Go Or No-Go Success Criteria |
| --- | --- | --- | --- | --- | --- |
| Baseline And Scope | Days 1-7 | Define levers and boundaries | • Define activation event and time-to-value baseline • Pick 3-5 high-volume, low-risk intents • Define exclusion list and escalation triggers • Set “resolved” definition (outcome confirmed or escalated) • Instrument repeat contact, reopens, and time-to-human | • Pilot scope and exclusions • Baseline dashboard (TTV, activation velocity, retention cohort baseline) • Escalation policy and routing map | • Baseline data is reliable • Escalation path is tested end-to-end • Pilot intents are deterministic and verifiable |
| Onboarding Agent Deployment | Days 8-15 | Shorten time-to-value | • Place AI agent in key onboarding steps (setup, integration, first report) • Ground answers in the source of truth (docs and KB) • Add micro-playbooks for common blockers • Add outcome confirmation prompts for verifiable tasks | • Live pilot for a defined cohort • Playbooks for the top intents • Verification events mapped to key tasks | • Activation velocity improves or remains stable • Time-to-human does not degrade for escalations • QA audits pass for sampled conversations |
| Truth-Preserving Measurement | Days 16-23 | Prevent churn camouflage | • Build a metric bundle that resists gaming (repeat contact, reopens, effort, time-to-human, assisted vs unassisted retention) • Establish weekly review cadence between Support Ops and Product • Tag friction into product action buckets (bug, UX confusion, missing capability, integration complexity) | • “Product truth” dashboard • Weekly review agenda and owners • First backlog items created from top friction themes | • Containment can rise only if repeat contact and reopens do not rise • Product team has reproducible evidence for top friction items |
| Close The Loop With Fixes | Days 24-30 | Convert signals into product change | • Triage top friction themes and ship quick wins (copy, UX, docs, suspected bugs) • Update playbooks and guardrails based on audit findings • Expand intent coverage only after stability | • Top 3 friction fixes shipped or scheduled with owners • Updated playbooks and escalation rules • Cohort comparison readout | • Assisted cohort shows improving leading indicators (TTV, activation velocity, early retention) • Guardrails hold under real traffic |

By day 30, you should have measurable time-to-value lift plus a governance cadence that keeps AI behavior aligned to real outcomes, consistent with lifecycle risk management guidance from NIST.

Conclusion

AI agents can reduce product churn when they attack the leading indicators that form weeks before a cancellation, especially activation, time-to-value, and adoption depth. Time-to-value is the time it takes new users to reach their first activation event or “aha” moment, and pulling that forward is one of the most reliable ways to improve early retention in PLG motions. In practice, that is exactly why interventions like in-product guidance, instant troubleshooting, and structured onboarding assistance tend to work: they remove repeatable friction at the point of use, rather than forcing users to detour into docs or wait for support.

The real risk is not that AI fails loudly. It is that it succeeds in a way that hides the truth. Goodhart’s Law captures the failure mode: when a measure becomes the target, teams start optimizing for the number, and the metric stops reflecting the underlying customer reality. The solution is truth-preserving automation: define resolution as a verified outcome, protect escalation for high-stakes intents, and use anti-gaming metric bundles that surface repeat friction and silent disengagement.

Book a consult with Kommunicate to design a truth-preserving AI agent rollout that reduces churn without masking product issues.

Devashish Mamgain is the CEO & Co-Founder of Kommunicate, with 15+ years of experience in building exceptional AI and chat-based products. He believes the future is human and bot working together and complementing each other.